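Deploying RuoYi on Kubernetes 1.23 (1 Master, 2 Workers) on CentOS 7

This article walks through a complete Kubernetes cluster deployment: environment preparation, cluster initialization, network plugin installation and storage configuration. It has three parts:

1) Base environment setup, including preprocessing such as disabling the firewall, SELinux and swap.
2) Cluster deployment, covering master initialization, joining the worker nodes and installing the Calico network plugin.
3) Application deployment examples, showing how to configure a MySQL primary/replica cluster, Redis, a Prometheus monitoring stack and other components.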

Phase 1: Environment preparation (run on all nodes)

Assume you have three machines:

- Master: 192.168.19.135
- Node1: 192.168.19.137
- Node2: 192.168.19.138

Run the following commands on all three machines:

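# 1. Disable the firewall
systemctl stop firewalld && systemctl disable firewalld

# 2. Disable SELinux
setenforce 0
sed -i 's/^SELINUX=enforcing$/SELINUX=disabled/' /etc/selinux/config

# 3. Disable swap (required by K8s)
swapoff -a
sed -ri 's/.*swap.*/#&/' /etc/fstab

# 4. Set the hostname (master shown here; use k8s-node1 and k8s-node2 on the worker nodes,
#    matching the /etc/hosts entries below)
hostnamectl set-hostname k8s-master
# (remember to run the matching command on node1 and node2)

# 5. Add hosts entries
cat >> /etc/hosts << EOF
192.168.19.135 k8s-master
192.168.19.137 k8s-node1
192.168.19.138 k8s-node2
EOF

# 6. Bridge settings (pass bridged IPv4 traffic to the iptables chains)
# The bridge-nf keys only exist once the br_netfilter module is loaded
modprobe br_netfilter
echo br_netfilter > /etc/modules-load.d/k8s.conf
cat > /etc/sysctl.d/k8s.conf << EOF
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
EOF
sysctl --system

Install Docker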

# Install dependencies
yum install -y yum-utils device-mapper-persistent-data lvm2

# Add the Aliyun Docker repository
yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

# Install Docker
yum install -y docker-ce docker-ce-cli containerd.io

# Configure a registry mirror and the cgroup driver (systemd is recommended for K8s)
mkdir -p /etc/docker
cat > /etc/docker/daemon.json << EOF
{
  "registry-mirrors": ["https://b9p820w7.mirror.aliyuncs.com"],
  "exec-opts": ["native.cgroupdriver=systemd"]
}
EOF

# Start Docker
systemctl enable docker && systemctl start docker

Install kubeadm, kubelet and kubectl

# Add the Aliyun K8s repository
cat > /etc/yum.repos.d/kubernetes.repo << EOF
[kubernetes]
name=Kubernetes
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=0
repo_gpgcheck=0
gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF

# Install the pinned version 1.23.17 (the final patch release of the 1.23 line)
yum install -y kubelet-1.23.17 kubeadm-1.23.17 kubectl-1.23.17

# Enable on boot (kubelet keeps restarting until kubeadm init/join runs; that is expected)
systemctl enable kubelet

Phase 2: Initialize the cluster (master node only)

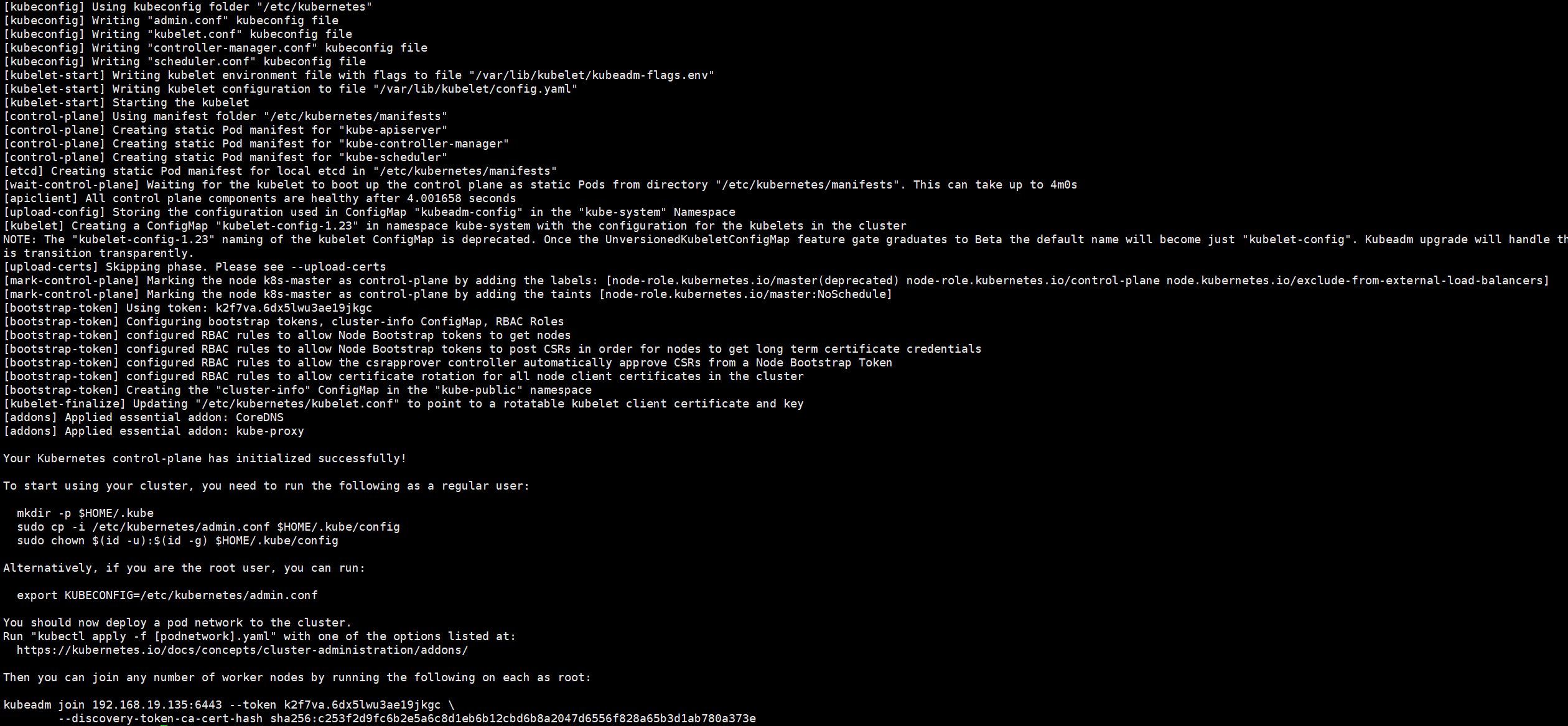

1. Initialize the master

Run on the master (192.168.19.135):

kubeadm init \
  --apiserver-advertise-address=192.168.19.135 \
  --image-repository registry.aliyuncs.com/google_containers \
  --kubernetes-version v1.23.17 \
  --service-cidr=10.96.0.0/12 \
  --pod-network-cidr=10.244.0.0/16

If you see a success message like the one in the screenshot, initialization has succeeded.
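Before running kubeadm init, check that the Pod CIDR, the Service CIDR and the host network do not overlap. Overlapping ranges lead to routing conflicts that are hard to diagnose later. Below is a minimal POSIX shell sketch. `ip_to_int` and `cidr_overlap` are hypothetical helper names, and treating 192.168.19.0/24 as the host network is an assumption based on the node IPs above.

```shell
#!/bin/sh
# Sketch: check that IPv4 CIDRs do not overlap, using only POSIX shell arithmetic.

# Convert a dotted-quad address to a 32-bit integer
ip_to_int() {
  ip=$1
  a=${ip%%.*}; ip=${ip#*.}
  b=${ip%%.*}; ip=${ip#*.}
  c=${ip%%.*}; d=${ip#*.}
  echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

# Return 0 if the two CIDRs overlap, 1 otherwise.
# Two ranges overlap exactly when their network bits agree on the shorter prefix.
cidr_overlap() {
  n1=${1%/*}; p1=${1#*/}
  n2=${2%/*}; p2=${2#*/}
  p=$p1
  if [ "$p2" -lt "$p" ]; then p=$p2; fi
  if [ "$p" -eq 0 ]; then return 0; fi
  mask=$(( (0xFFFFFFFF << (32 - p)) & 0xFFFFFFFF ))
  [ $(( $(ip_to_int "$n1") & mask )) -eq $(( $(ip_to_int "$n2") & mask )) ]
}

# CIDRs from the kubeadm init command above; the host /24 is an assumption
POD_CIDR=10.244.0.0/16
SVC_CIDR=10.96.0.0/12
HOST_NET=192.168.19.0/24

for pair in "$POD_CIDR $SVC_CIDR" "$POD_CIDR $HOST_NET" "$SVC_CIDR $HOST_NET"; do
  set -- $pair
  if cidr_overlap "$1" "$2"; then echo "OVERLAP: $1 <-> $2"; else echo "ok: $1 <-> $2"; fi
done
```

All three pairs should print `ok`. If your nodes sit in a 10.x network, this is where a clash with 10.96.0.0/12 or 10.244.0.0/16 would show up.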

2. Configure kubectl

After initialization succeeds, run the commands printed in the terminal:

mkdir -p $HOME/.kube
cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
chown $(id -u):$(id -g) $HOME/.kube/config

3. Join the cluster

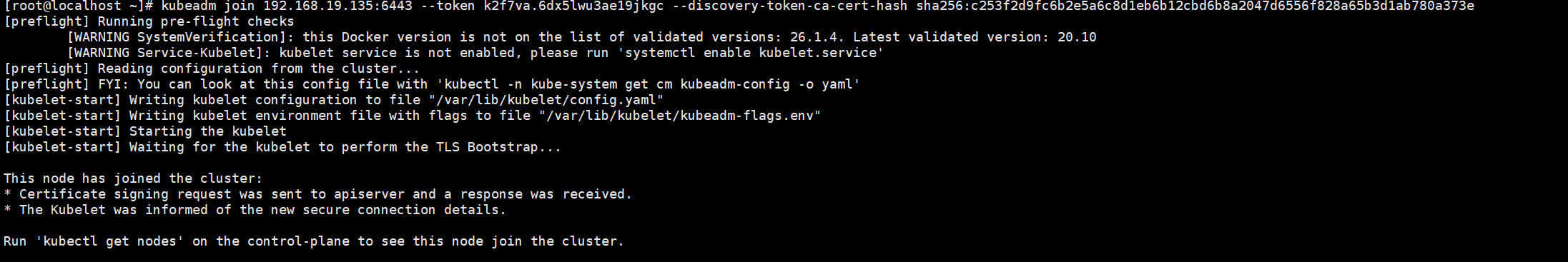

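Run on k8s-node1 and on k8s-node2:

# Copy the join command printed on the master console after a successful init.
# The token and hash below are example values; use the ones from your own output.
kubeadm join 192.168.19.135:6443 --token w34ha2.66if2c8nwmeat9o7 --discovery-token-ca-cert-hash sha256:20e2227554f8883811c01edd850f0cf2f396589d32b57b9984de3353a7389477

# If you lost the token from the init output, look it up or create a new one as follows.
# If the token has expired (the default TTL is 24 hours), create a new one:
kubeadm token create
# If the token has not expired, list the existing tokens:
kubeadm token list
# Alternatively, print a complete, ready-to-use join command in one step:
kubeadm token create --print-join-command

# Get the --discovery-token-ca-cert-hash value; prepend "sha256:" to the result
openssl x509 -pubkey -in /etc/kubernetes/pki/ca.crt | openssl rsa -pubin -outform der 2>/dev/null | \
  openssl dgst -sha256 -hex | sed 's/^.* //'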
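It is easy to mistype the token or the hash when you assemble the join command by hand from `kubeadm token list` and the openssl output. The sketch below validates both parts before printing the command. `build_join_cmd` is a hypothetical helper. The checks assume kubeadm's bootstrap-token shape (`[a-z0-9]{6}.[a-z0-9]{16}`) and a 64-character lowercase hex SHA-256 digest.

```shell
#!/bin/sh
# Sketch: assemble a kubeadm join command from its parts, validating each part first.
build_join_cmd() {
  endpoint=$1 token=$2 hash=$3
  # Bootstrap tokens look like abcdef.0123456789abcdef
  if ! printf '%s\n' "$token" | grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$'; then
    echo "invalid token: $token" >&2
    return 1
  fi
  # The CA hash is a SHA-256 digest: 64 hex characters, without the sha256: prefix
  if ! printf '%s\n' "$hash" | grep -Eq '^[0-9a-f]{64}$'; then
    echo "invalid CA cert hash: $hash" >&2
    return 1
  fi
  echo "kubeadm join $endpoint --token $token --discovery-token-ca-cert-hash sha256:$hash"
}

# Usage on the master (the token is whatever kubeadm token list shows):
#   HASH=$(openssl x509 -pubkey -in /etc/kubernetes/pki/ca.crt | openssl rsa -pubin -outform der 2>/dev/null \
#          | openssl dgst -sha256 -hex | sed 's/^.* //')
#   build_join_cmd 192.168.19.135:6443 <token> "$HASH"
```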

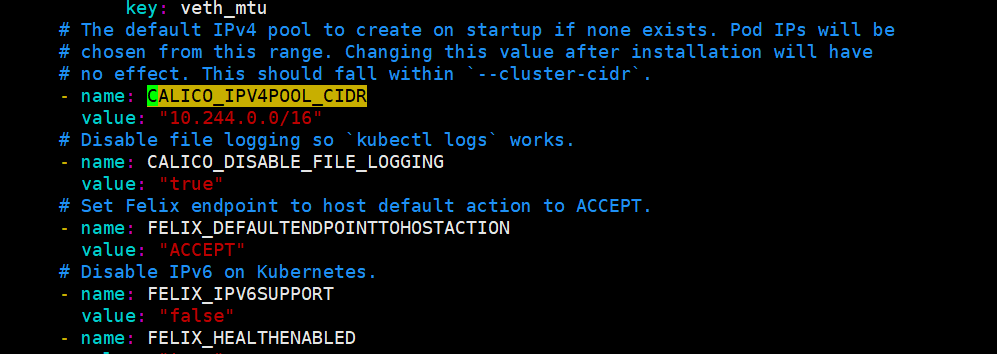

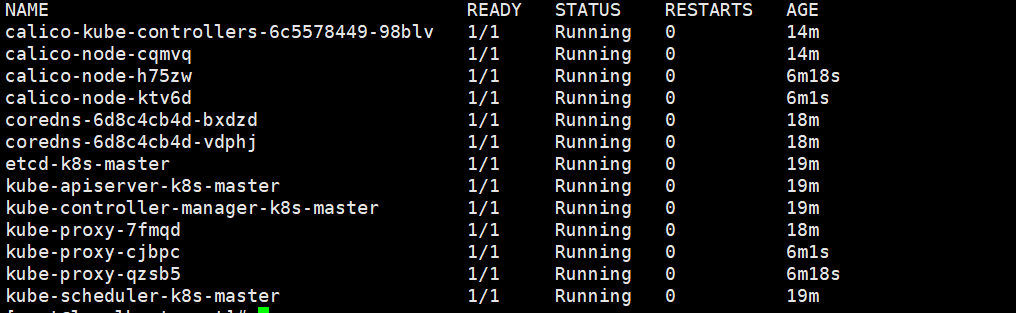

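4. Install the network plugin (Calico)

curl https://docs.projectcalico.org/manifests/calico.yaml -O

In calico.yaml, set CALICO_IPV4POOL_CIDR to the same value you passed to kubeadm init as --pod-network-cidr (10.244.0.0/16 here). The entry is commented out by default, so uncomment it as well.

Strip the docker.io/ prefix from the image names so that slow pulls do not end in failure:

sed -i 's#docker.io/##g' calico.yaml

Then install the plugin:

kubectl apply -f calico.yaml

Pulling the images takes a few minutes. If it fails, check your network connection or use a proxy.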
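You can also script the CALICO_IPV4POOL_CIDR edit instead of doing it in an editor. Run it before `kubectl apply`. This sketch assumes the stock manifest contains the two commented lines `# - name: CALICO_IPV4POOL_CIDR` and `#   value: "192.168.0.0/16"`. Check with grep first, because the exact text can differ between Calico releases. `set_calico_cidr` is a hypothetical helper name.

```shell
#!/bin/sh
# Sketch: uncomment CALICO_IPV4POOL_CIDR in calico.yaml and set it to the pod CIDR.
# Assumes the stock commented-out lines and GNU sed (-i without a suffix), as on CentOS.
set_calico_cidr() {
  manifest=$1 cidr=$2
  # Dropping the leading "# " keeps both lines aligned with the surrounding env entries
  sed -i \
    -e 's|# - name: CALICO_IPV4POOL_CIDR|- name: CALICO_IPV4POOL_CIDR|' \
    -e "s|#   value: \"192.168.0.0/16\"|  value: \"$cidr\"|" \
    "$manifest"
}

# Usage, with the --pod-network-cidr passed to kubeadm init:
#   grep -n -A1 'CALICO_IPV4POOL_CIDR' calico.yaml     # confirm the stock lines first
#   set_calico_cidr calico.yaml 10.244.0.0/16
#   grep -n -A1 'name: CALICO_IPV4POOL_CIDR' calico.yaml
```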

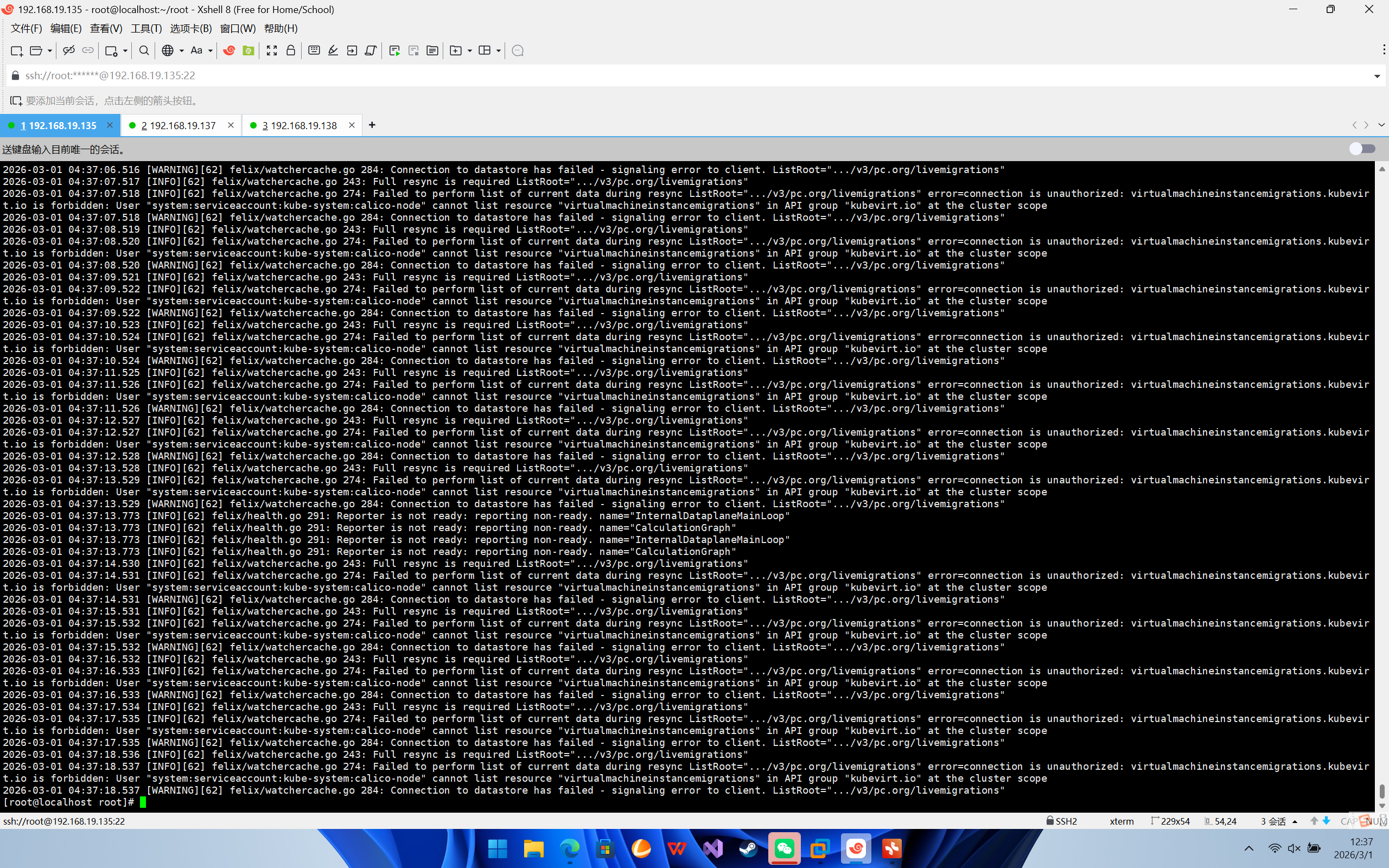

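If you see an error like the one in the screenshot above:

In short, on startup Calico tries to watch resources in kubevirt.io (KubeVirt is a component for running virtual machines inside K8s). It has no RBAC permission for them, so it falls into an endless error-and-restart loop and eventually reports Reporter is not ready.

The fix:

Step 1: Fix Calico's RBAC permissions (for the error in the screenshot)

Since Calico needs this permission, we simply grant it.

On the master node, run the following command to edit Calico's ClusterRole:

kubectl edit clusterrole calico-node

In the editor (press i to enter insert mode), find the rules: section and append the following lines at the end. Keep the indentation aligned with the existing entries:

- apiGroups:
  - kubevirt.io
  resources:
  - virtualmachineinstancemigrations
  verbs:
  - get
  - list
  - watch

Press Esc to leave insert mode, then type :wq to save and exit.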
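If you would rather not edit the ClusterRole interactively, you can append the same rule with a JSON Patch. In this sketch, `calico_kubevirt_patch` is a hypothetical helper that only prints the patch document. The `kubectl patch` line in the usage comment assumes the ClusterRole is named calico-node, as above.

```shell
#!/bin/sh
# Sketch: print a JSON Patch (RFC 6902) that appends the kubevirt.io rule to a ClusterRole.
# The path "/rules/-" means "append to the end of the rules array".
calico_kubevirt_patch() {
  printf '%s\n' '[{"op":"add","path":"/rules/-","value":{"apiGroups":["kubevirt.io"],"resources":["virtualmachineinstancemigrations"],"verbs":["get","list","watch"]}}]'
}

# Usage on the master:
#   kubectl patch clusterrole calico-node --type=json -p "$(calico_kubevirt_patch)"
#   kubectl get clusterrole calico-node -o yaml | grep -A6 kubevirt.io
```

Every run of the patch appends the rule again, so run it only once.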

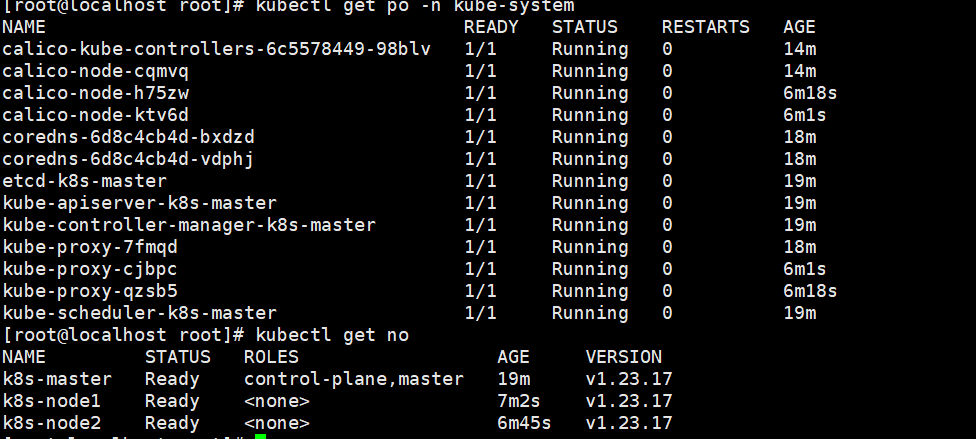

Then check the cluster nodes:

kubectl get no

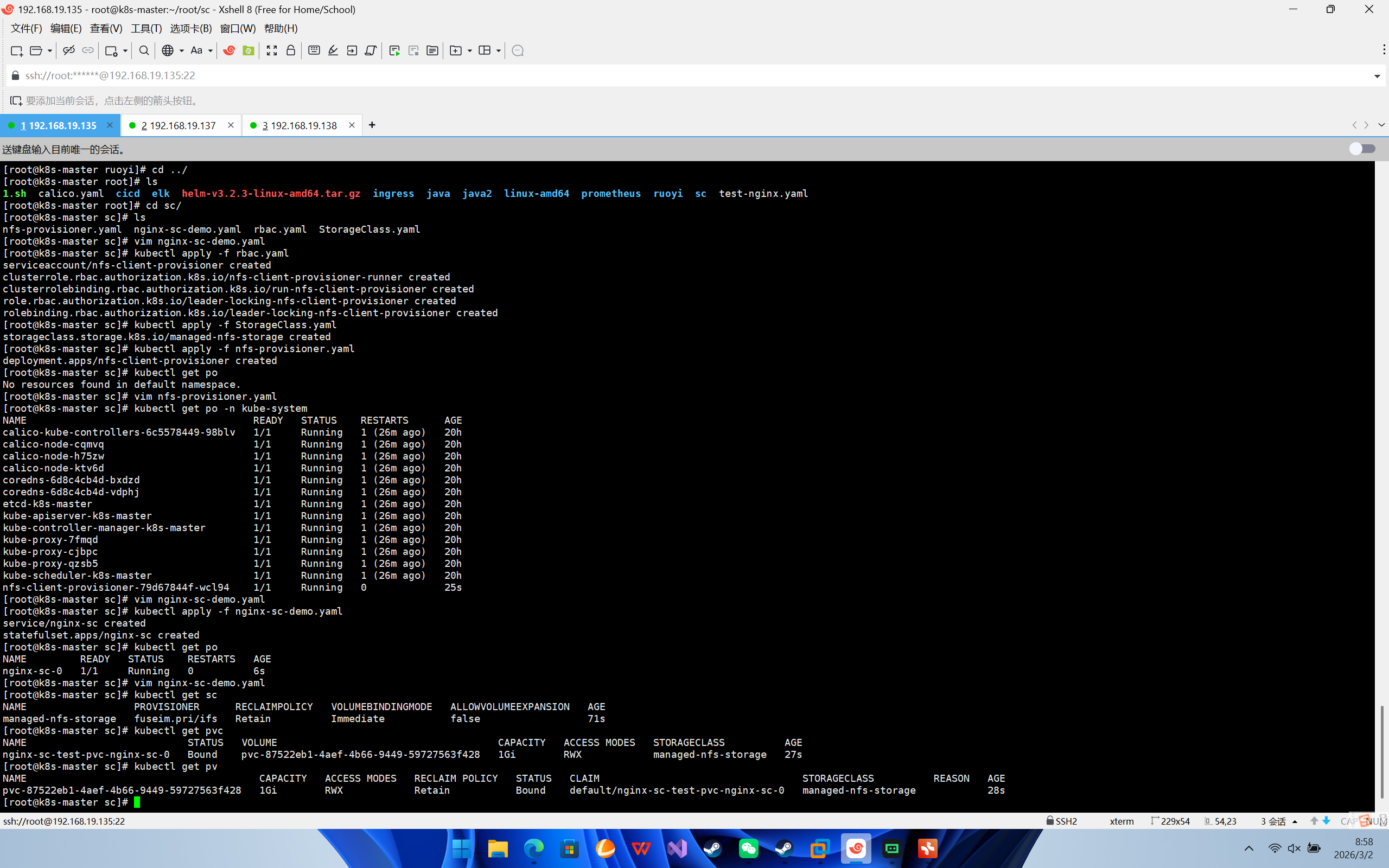

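Create a dynamic StorageClass (NFS must be installed beforehand)

You need four YAML files:

1. RBAC: grant the NFS provisioner the permissions it needs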

apiVersion: v1
kind: ServiceAccount
metadata:
  name: nfs-client-provisioner
  namespace: kube-system
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: nfs-client-provisioner-runner
  namespace: kube-system
rules:
  - apiGroups: [""]
    resources: ["persistentvolumes"]
    verbs: ["get", "list", "watch", "create", "delete"]
  - apiGroups: [""]
    resources: ["persistentvolumeclaims"]
    verbs: ["get", "list", "watch", "update"]
  - apiGroups: ["storage.k8s.io"]
    resources: ["storageclasses"]
    verbs: ["get", "list", "watch"]
  - apiGroups: [""]
    resources: ["events"]
    verbs: ["create", "update", "patch"]
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: run-nfs-client-provisioner
  namespace: kube-system
subjects:
  - kind: ServiceAccount
    name: nfs-client-provisioner
    namespace: kube-system
roleRef:
  kind: ClusterRole
  name: nfs-client-provisioner-runner
  apiGroup: rbac.authorization.k8s.io
---
kind: Role
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: leader-locking-nfs-client-provisioner
  namespace: kube-system
rules:
  - apiGroups: [""]
    resources: ["endpoints"]
    verbs: ["get", "list", "watch", "create", "update", "patch"]
---
kind: RoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: leader-locking-nfs-client-provisioner
  namespace: kube-system
subjects:
  - kind: ServiceAccount
    name: nfs-client-provisioner
    namespace: kube-system
roleRef:
  kind: Role
  name: leader-locking-nfs-client-provisioner
  apiGroup: rbac.authorization.k8s.io

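2. StorageClass

apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: managed-nfs-storage
  namespace: kube-system
provisioner: fuseim.pri/ifs # external provisioner name; must match PROVISIONER_NAME in the provisioner Deployment
parameters:
  archiveOnDelete: "false" # "false" deletes the data under the old path; "true" archives it by renaming the directory
reclaimPolicy: Retain # reclaim policy; the default is Delete, Retain keeps the volume
volumeBindingMode: Immediate # the default: a PVC is bound as soon as it is created (WaitForFirstConsumer delays binding until a Pod uses it, and only some provisioners support it)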

3. Provisioner

apiVersion: apps/v1
kind: Deployment
metadata:
  name: nfs-client-provisioner
  namespace: kube-system
  labels:
    app: nfs-client-provisioner
spec:
  replicas: 1
  strategy:
    type: Recreate
  selector:
    matchLabels:
      app: nfs-client-provisioner
  template:
    metadata:
      labels:
        app: nfs-client-provisioner
    spec:
      serviceAccountName: nfs-client-provisioner
      containers:
        - name: nfs-client-provisioner
          image: registry.cn-beijing.aliyuncs.com/pylixm/nfs-subdir-external-provisioner:v4.0.0
          volumeMounts:
            - name: nfs-client-root
              mountPath: /persistentvolumes
          env:
            - name: PROVISIONER_NAME
              value: fuseim.pri/ifs
            - name: NFS_SERVER
              value: 192.168.19.135
            - name: NFS_PATH
              value: /data/nfs/rw
      volumes:
        - name: nfs-client-root
          nfs:
            server: 192.168.19.135
            path: /data/nfs/rw

Replace the NFS server address (192.168.19.135, in both places) and the path with your own.
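The provisioner expects an NFS export at 192.168.19.135:/data/nfs/rw to exist already. The sketch below prepares it. It assumes the master doubles as the NFS server, as the manifest's NFS_SERVER value suggests, and that 192.168.19.0/24 is the node subnet. `nfs_export_line` is a hypothetical helper. The sketch uses `no_root_squash` because several manifests in this article chown their data directories from a root init container, and that chown would fail on a root-squashed export.

```shell
#!/bin/sh
# Sketch: build the /etc/exports entry the NFS provisioner relies on.
nfs_export_line() {
  # $1: exported directory, $2: allowed client network
  printf '%s %s(rw,sync,no_root_squash)\n' "$1" "$2"
}

# Usage as root on 192.168.19.135 (nfs-utils is also needed on every node that mounts NFS):
#   yum install -y nfs-utils
#   mkdir -p /data/nfs/rw
#   nfs_export_line /data/nfs/rw 192.168.19.0/24 >> /etc/exports
#   systemctl enable nfs-server && systemctl start nfs-server
#   exportfs -r && showmount -e 192.168.19.135
```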

4. Test demo

apiVersion: v1
kind: Service
metadata:
  name: nginx-sc
  labels:
    app: nginx-sc
spec:
  type: NodePort
  ports:
    - name: web
      port: 80
      protocol: TCP
  selector:
    app: nginx-sc
---
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: nginx-sc
spec:
  replicas: 1
  serviceName: "nginx-sc"
  selector:
    matchLabels:
      app: nginx-sc
  template:
    metadata:
      labels:
        app: nginx-sc
    spec:
      containers:
        - image: nginx
          name: nginx-sc
          imagePullPolicy: IfNotPresent
          volumeMounts:
            - mountPath: /usr/share/nginx/html
              name: nginx-sc-test-pvc
  volumeClaimTemplates:
    - metadata:
        name: nginx-sc-test-pvc
      spec:
        storageClassName: managed-nfs-storage
        accessModes:
          - ReadWriteMany
        resources:
          requests:
            storage: 1Gi

Apply the four files in order with kubectl apply -f.

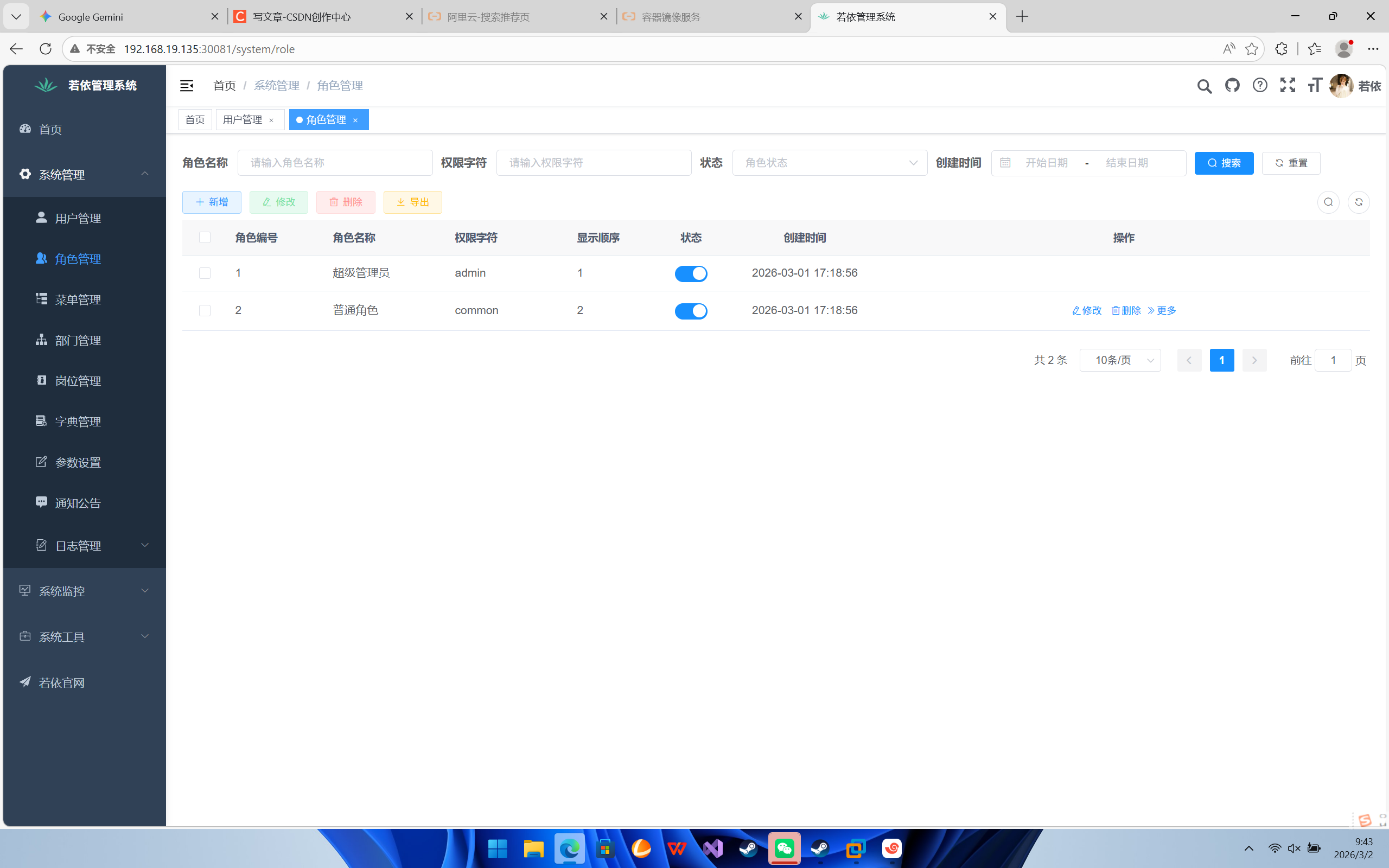

Start the actual deployment

First, create a ConfigMap from the two SQL files in the RuoYi source:

kubectl create configmap mysql-init-db \
  --from-file=quartz.sql \
  --from-file=ry_20250522.sql

Create the Nginx configuration:

apiVersion: v1
kind: ConfigMap
metadata:
  name: ruoyi-ui-config
data:
  nginx.conf: |
    user nginx;
    worker_processes auto;
    error_log /var/log/nginx/error.log notice;
    pid /var/run/nginx.pid;

    events {
        worker_connections 1024;
    }

    http {
        include /etc/nginx/mime.types;
        default_type application/octet-stream;
        sendfile on;
        keepalive_timeout 65;

        server {
            listen 80;
            server_name localhost;

            # Enable gzip
            gzip on;
            gzip_min_length 1k;
            gzip_comp_level 9;
            gzip_types text/plain application/javascript application/x-javascript text/css application/xml text/javascript application/x-httpd-php image/jpeg image/gif image/png;

            # Frontend static assets
            location / {
                root /usr/share/nginx/html;
                try_files $uri $uri/ /index.html;
                index index.html index.htm;
            }

            # Forward backend API calls (to the K8s Service ruoyi-admin)
            location /prod-api/ {
                proxy_set_header Host $http_host;
                proxy_set_header X-Real-IP $remote_addr;
                proxy_set_header REMOTE-HOST $remote_addr;
                proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;
                proxy_pass http://ruoyi-admin:8080/;
            }

            error_page 500 502 503 504 /50x.html;
            location = /50x.html {
                root /usr/share/nginx/html;
            }
        }
    }
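The trailing slash in `proxy_pass http://ruoyi-admin:8080/;` matters. When proxy_pass includes a URI, nginx replaces the part of the request URI that matched the location prefix (`/prod-api/`) with that URI, so the backend never sees the /prod-api prefix. The sketch below mimics that mapping with sed for illustration only. `upstream_uri` is a hypothetical helper and is not part of nginx. `/captchaImage` is just an example path.

```shell
#!/bin/sh
# Sketch: mimic how `location /prod-api/ { proxy_pass http://ruoyi-admin:8080/; }`
# maps a request URI to the URI sent upstream. Illustration only, not nginx itself.
upstream_uri() {
  # The matched location prefix /prod-api/ is replaced by the proxy_pass URI "/"
  printf '%s\n' "$1" | sed 's#^/prod-api/#/#'
}

upstream_uri /prod-api/captchaImage
upstream_uri '/prod-api/system/user/list?pageNum=1'
```

Without the trailing slash (`proxy_pass http://ruoyi-admin:8080;`), nginx would forward the original URI unchanged, including the /prod-api prefix.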

Create MySQL

# 1. PVC (referenced by the Deployment below; the size is an example)
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: mysql-pvc
  namespace: default
  labels:
    app: mysql
spec:
  accessModes: ["ReadWriteOnce"]
  storageClassName: managed-nfs-storage
  resources:
    requests:
      storage: 10Gi
---
# 2. Service
apiVersion: v1
kind: Service
metadata:
  name: mysql
  namespace: default
spec:
  selector:
    app: mysql
  type: NodePort
  ports:
    - nodePort: 30006
      port: 3306
---
# 3. Deployment
apiVersion: apps/v1
kind: Deployment
metadata:
  name: mysql
  namespace: default
spec:
  selector:
    matchLabels:
      app: mysql
  replicas: 1
  template:
    metadata:
      labels:
        app: mysql
    spec:
      initContainers:
        - name: fix-permissions
          image: busybox
          command: ["sh", "-c", "chown -R 999:999 /var/lib/mysql"]
          volumeMounts:
            - name: data
              mountPath: /var/lib/mysql
              subPath: mysql-data
      containers:
        - name: mysql
          image: mysql:8.0
          imagePullPolicy: IfNotPresent # recommended for production as well
          args:
            - --character-set-server=utf8mb4
            - --collation-server=utf8mb4_unicode_ci
            - --default-authentication-plugin=mysql_native_password
            - --lower-case-table-names=1 # required by RuoYi
          ports:
            - containerPort: 3306
          env:
            - name: MYSQL_ROOT_PASSWORD
              value: "password"
            - name: MYSQL_DATABASE
              value: "ry-vue"
          readinessProbe:
            exec:
              command: ["mysqladmin", "ping", "-uroot", "-p$(MYSQL_ROOT_PASSWORD)"]
            initialDelaySeconds: 60
            periodSeconds: 10
            timeoutSeconds: 5
          volumeMounts:
            - name: data
              mountPath: /var/lib/mysql
              subPath: mysql-data
            - name: init-sql
              mountPath: /docker-entrypoint-initdb.d
      volumes:
        - name: data
          persistentVolumeClaim:
            claimName: mysql-pvc
        - name: init-sql
          configMap:
            name: mysql-init-db

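Deploy Redis

# --- Redis PVC (referenced by the Deployment below; the size is an example) ---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: redis-pvc
spec:
  accessModes: ["ReadWriteOnce"]
  storageClassName: managed-nfs-storage
  resources:
    requests:
      storage: 1Gi
---
# --- Redis Deployment ---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: redis
spec:
  selector:
    matchLabels:
      app: redis
  template:
    metadata:
      labels:
        app: redis
    spec:
      containers:
        - name: redis
          image: redis:latest
          volumeMounts:
            - name: redis-data
              mountPath: /data
      volumes:
        - name: redis-data
          persistentVolumeClaim:
            claimName: redis-pvc
---
# --- Redis Service ---
apiVersion: v1
kind: Service
metadata:
  name: redis
spec:
  ports:
    - port: 6379
  selector:
    app: redis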

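My frontend and backend images were already built in an earlier Docker project, so I use them directly. Because they are not pulled from a registry, each image must already be present on every node where its Pod can be scheduled (or be pushed to a registry the nodes can reach).

Backend configuration

# PVC for uploaded files, referenced by the Deployment below (the size is an example)
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: ruoyi-upload-pvc
spec:
  accessModes: ["ReadWriteOnce"]
  storageClassName: managed-nfs-storage
  resources:
    requests:
      storage: 5Gi
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: ruoyi-admin
spec:
  replicas: 1
  selector:
    matchLabels:
      app: ruoyi-admin
  template:
    metadata:
      labels:
        app: ruoyi-admin
    spec:
      containers:
        - name: ruoyi-admin
          image: ruoyi-admin:v1 # your image
          imagePullPolicy: IfNotPresent
          ports:
            - containerPort: 8080
          env:
            # Points to the K8s Service 'mysql'
            - name: SPRING_DATASOURCE_DRUID_MASTER_URL
              value: jdbc:mysql://mysql:3306/ry-vue?useUnicode=true&characterEncoding=utf8&zeroDateTimeBehavior=convertToNull&useSSL=true&serverTimezone=GMT%2B8
            # Points to the K8s Service 'redis'
            - name: SPRING_REDIS_HOST
              value: "redis"
          volumeMounts:
            - name: upload-volume
              mountPath: /home/ruoyi/uploadPath
      volumes:
        - name: upload-volume
          persistentVolumeClaim:
            claimName: ruoyi-upload-pvc
---
apiVersion: v1
kind: Service
metadata:
  name: ruoyi-admin
spec:
  ports:
    - port: 8080
  selector:
    app: ruoyi-admin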

Frontend configuration

apiVersion: apps/v1
kind: Deployment
metadata:
  name: ruoyi-ui
spec:
  replicas: 1
  selector:
    matchLabels:
      app: ruoyi-ui
  template:
    metadata:
      labels:
        app: ruoyi-ui
    spec:
      containers:
        - name: ruoyi-ui
          image: ruoyi/ruoyi-ui:v1 # your image
          imagePullPolicy: IfNotPresent
          ports:
            - containerPort: 80
          volumeMounts:
            # Mount the ConfigMap over /etc/nginx/nginx.conf
            - name: nginx-config
              mountPath: /etc/nginx/nginx.conf
              subPath: nginx.conf
      volumes:
        - name: nginx-config
          configMap:
            name: ruoyi-ui-config
---
apiVersion: v1
kind: Service
metadata:
  name: ruoyi-ui
spec:
  type: NodePort
  ports:
    - port: 80
      targetPort: 80
      nodePort: 30081
  selector:
    app: ruoyi-ui

Apply the manifests in order, then open http://<node-IP>:30081 in a browser.

Deploy Prometheus

1. Base environment and permissions (01-monitor-base.yaml)

Create the namespace and the permissions Prometheus needs to read the K8s API.

apiVersion: v1
kind: Namespace
metadata:
  name: monitoring
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: prometheus-k8s
  namespace: monitoring
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: prometheus
rules:
  - apiGroups: [""]
    resources: ["nodes", "nodes/proxy", "services", "endpoints", "pods"]
    verbs: ["get", "list", "watch"]
  - apiGroups: [""]
    resources: ["configmaps"]
    verbs: ["get"]
  - nonResourceURLs: ["/metrics"]
    verbs: ["get"]
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: prometheus
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: prometheus
subjects:
  - kind: ServiceAccount
    name: prometheus-k8s
    namespace: monitoring

2. Prometheus configuration file (02-prometheus-config.yaml)

This configures an auto-discovery rule: any Pod annotated with prometheus.io/scrape: "true" is scraped automatically.
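In the configuration below, the keep rule drops every Pod without the annotation. The __address__ rule is less obvious. Prometheus joins the source labels with `;`, anchors the regex at both ends, and rewrites the target to the Pod IP plus the port from the prometheus.io/port annotation. The sketch below reproduces that rule with sed. `relabel_address` is hypothetical, and the Pod IPs are made-up examples. sed's ERE has no `(?:...)` group, so the pattern is rewritten with an ordinary capturing group and `\3` stands in for `$2`.

```shell
#!/bin/sh
# Sketch: reproduce the __address__ relabel rule from prometheus.yml
#   regex: ([^:]+)(?::\d+)?;(\d+)    replacement: $1:$2
relabel_address() {
  # $1: discovered __address__ (ip or ip:port), $2: prometheus.io/port annotation value
  out=$(printf '%s;%s\n' "$1" "$2" | sed -E -n 's/^([^:]+)(:[0-9]+)?;([0-9]+)$/\1:\3/p')
  # If the regex does not match (e.g. no port annotation), the replace action leaves the label unchanged
  if [ -n "$out" ]; then echo "$out"; else echo "$1"; fi
}

relabel_address 10.244.1.5:8080 9104
relabel_address 10.244.1.5 9100
relabel_address 10.244.1.5:8080 ""
```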

apiVersion: v1
kind: ConfigMap
metadata:
  name: prometheus-config
  namespace: monitoring
data:
  prometheus.yml: |
    global:
      scrape_interval: 15s
      evaluation_interval: 15s
    scrape_configs:
      - job_name: 'prometheus'
        static_configs:
          - targets: ['localhost:9090']
      # Auto-discover K8s Pods (the key part)
      - job_name: 'kubernetes-pods'
        kubernetes_sd_configs:
          - role: pod
        relabel_configs:
          - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_scrape]
            action: keep
            regex: true
          - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_path]
            action: replace
            target_label: __metrics_path__
            regex: (.+)
          - source_labels: [__address__, __meta_kubernetes_pod_annotation_prometheus_io_port]
            action: replace
            regex: ([^:]+)(?::\d+)?;(\d+)
            replacement: $1:$2
            target_label: __address__
          - action: labelmap
            regex: __meta_kubernetes_pod_label_(.+)

3. Prometheus deployment (03-prometheus-deploy.yaml)

# 1. Request storage (dynamic SC)
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: prometheus-pvc
  namespace: monitoring
spec:
  accessModes: [ "ReadWriteOnce" ]
  storageClassName: managed-nfs-storage
  resources:
    requests:
      storage: 20Gi
---
# 2. Deploy Prometheus
apiVersion: apps/v1
kind: Deployment
metadata:
  name: prometheus
  namespace: monitoring
spec:
  replicas: 1
  selector:
    matchLabels:
      app: prometheus
  template:
    metadata:
      labels:
        app: prometheus
    spec:
      serviceAccountName: prometheus-k8s
      initContainers:
        - name: fix-permissions
          image: busybox
          # Prometheus runs as nobody (UID 65534) by default
          command: ["sh", "-c", "chown -R 65534:65534 /prometheus"]
          volumeMounts:
            - name: data
              mountPath: /prometheus
      containers:
        - name: prometheus
          image: prom/prometheus:latest
          args:
            - "--config.file=/etc/prometheus/prometheus.yml"
            - "--storage.tsdb.path=/prometheus"
            - "--storage.tsdb.retention.time=15d" # keep data for 15 days
          ports:
            - containerPort: 9090
          volumeMounts:
            - name: config-volume
              mountPath: /etc/prometheus
            - name: data
              mountPath: /prometheus
      volumes:
        - name: config-volume
          configMap:
            name: prometheus-config
        - name: data
          persistentVolumeClaim:
            claimName: prometheus-pvc
---
apiVersion: v1
kind: Service
metadata:
  name: prometheus
  namespace: monitoring
spec:
  selector:
    app: prometheus
  type: NodePort
  ports:
    - port: 9090
      targetPort: 9090
      nodePort: 30090 # access port

4. Grafana deployment (04-grafana-deploy.yaml)

# 1. Request storage
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: grafana-pvc
  namespace: monitoring
spec:
  accessModes: [ "ReadWriteOnce" ]
  storageClassName: managed-nfs-storage
  resources:
    requests:
      storage: 5Gi
---
# 2. Deploy Grafana
apiVersion: apps/v1
kind: Deployment
metadata:
  name: grafana
  namespace: monitoring
spec:
  replicas: 1
  selector:
    matchLabels:
      app: grafana
  template:
    metadata:
      labels:
        app: grafana
    spec:
      initContainers:
        - name: fix-permissions
          image: busybox
          command: ["sh", "-c", "chown -R 472:472 /var/lib/grafana"]
          volumeMounts:
            - name: data
              mountPath: /var/lib/grafana
      containers:
        - name: grafana
          image: grafana/grafana:latest
          ports:
            - containerPort: 3000
          volumeMounts:
            - name: data
              mountPath: /var/lib/grafana
      volumes:
        - name: data
          persistentVolumeClaim:
            claimName: grafana-pvc
---
apiVersion: v1
kind: Service
metadata:
  name: grafana
  namespace: monitoring
spec:
  selector:
    app: grafana
  type: NodePort
  ports:
    - port: 3000
      targetPort: 3000
      nodePort: 30030 # access port

5. Middleware exporters (05-middleware-exporters.yaml)

This file holds the MySQL and Redis monitoring components; only the MySQL exporter manifest is shown below. Note: it assumes your MySQL password is password. Change it if yours is different.

# 1. A ConfigMap holding the MySQL credentials
apiVersion: v1
kind: ConfigMap
metadata:
  name: mysql-exporter-config
  namespace: monitoring
data:
  .my.cnf: |
    [client]
    user=root
    password=password
    # ↑↑↑ If your MySQL password is not "password", change it here ↑↑↑
    host=mysql.default.svc
    port=3306
---
# 2. Deploy the MySQL Exporter (mounting the configuration above)
apiVersion: apps/v1
kind: Deployment
metadata:
  name: mysql-exporter
  namespace: monitoring
spec:
  replicas: 1
  selector:
    matchLabels:
      app: mysql-exporter
  template:
    metadata:
      labels:
        app: mysql-exporter
      annotations:
        prometheus.io/scrape: "true"
        prometheus.io/port: "9104"
    spec:
      containers:
        - name: mysql-exporter
          image: prom/mysqld-exporter:latest
          imagePullPolicy: IfNotPresent
          args:
            # Read the mounted configuration file
            - "--config.my-cnf=/etc/mysql-exporter/.my.cnf"
            # Set the address explicitly (otherwise it connects to localhost)
            - "--mysqld.address=mysql.default.svc:3306"
          ports:
            - containerPort: 9104
          volumeMounts:
            - name: config
              mountPath: /etc/mysql-exporter
      volumes:
        - name: config
          configMap:
            name: mysql-exporter-config
---
# 3. Service
apiVersion: v1
kind: Service
metadata:
  name: mysql-exporter
  namespace: monitoring
spec:
  selector:
    app: mysql-exporter
  ports:
    - port: 9104
      targetPort: 9104

6. Host node monitoring (06-node-exporter.yaml)

DaemonSet 模式,自动监控所有 Node 的 CPU、内存、磁盘。

apiVersion: apps/v1

kind: DaemonSet

metadata:

name: node-exporter

namespace: monitoring

spec:

selector:

matchLabels:

app: node-exporter

template:

metadata:

labels:

app: node-exporter

annotations:

prometheus.io/scrape: "true"

prometheus.io/port: "9100"

spec:

hostNetwork: true

hostPID: true

containers:

- name: node-exporter

image: prom/node-exporter:latest

ports:

- containerPort: 9100

hostPort: 9100

volumeMounts:

- name: root

mountPath: /host/root

readOnly: true

volumes:

- name: root

hostPath:

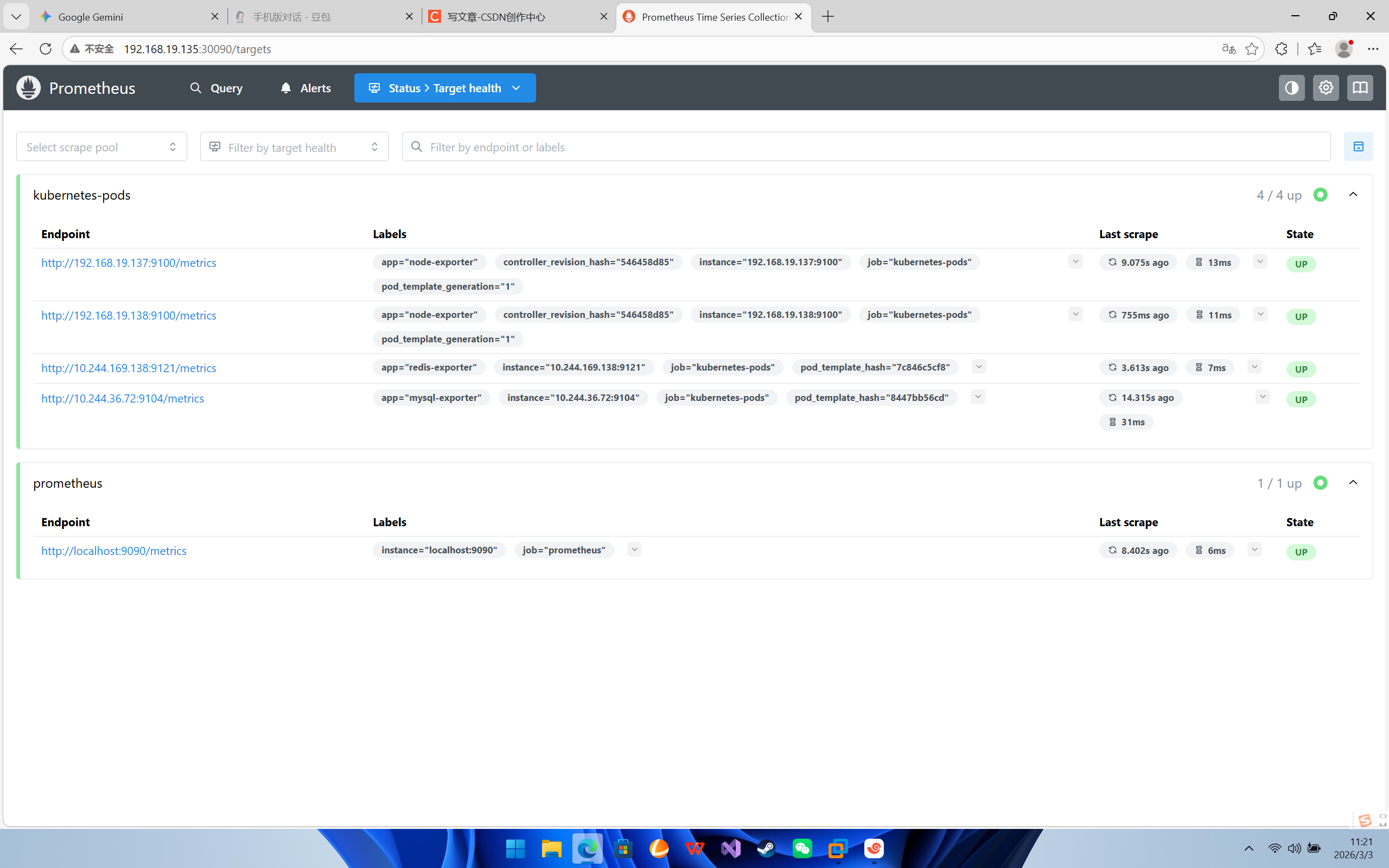

path: /依次启动,访问30090端口

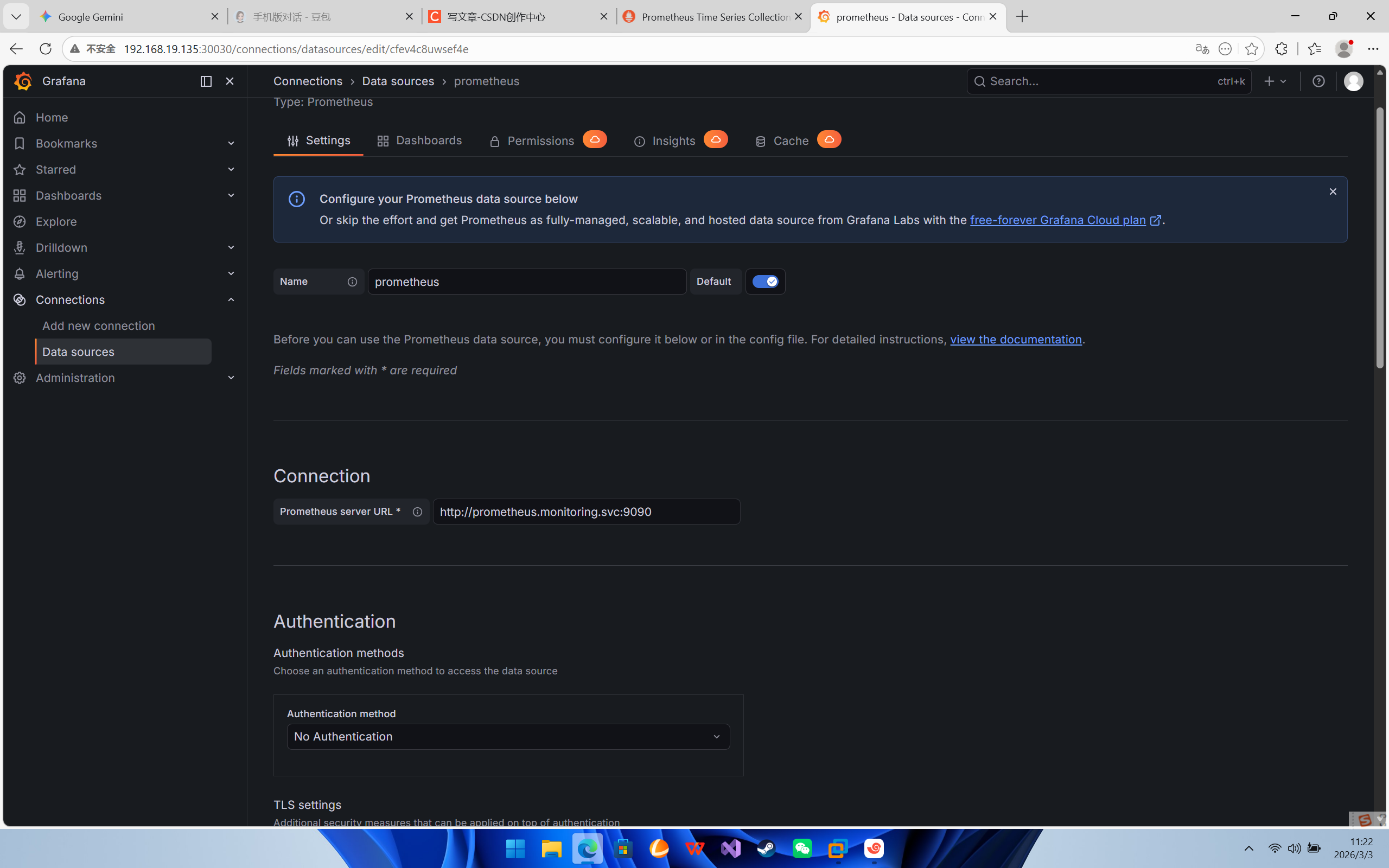

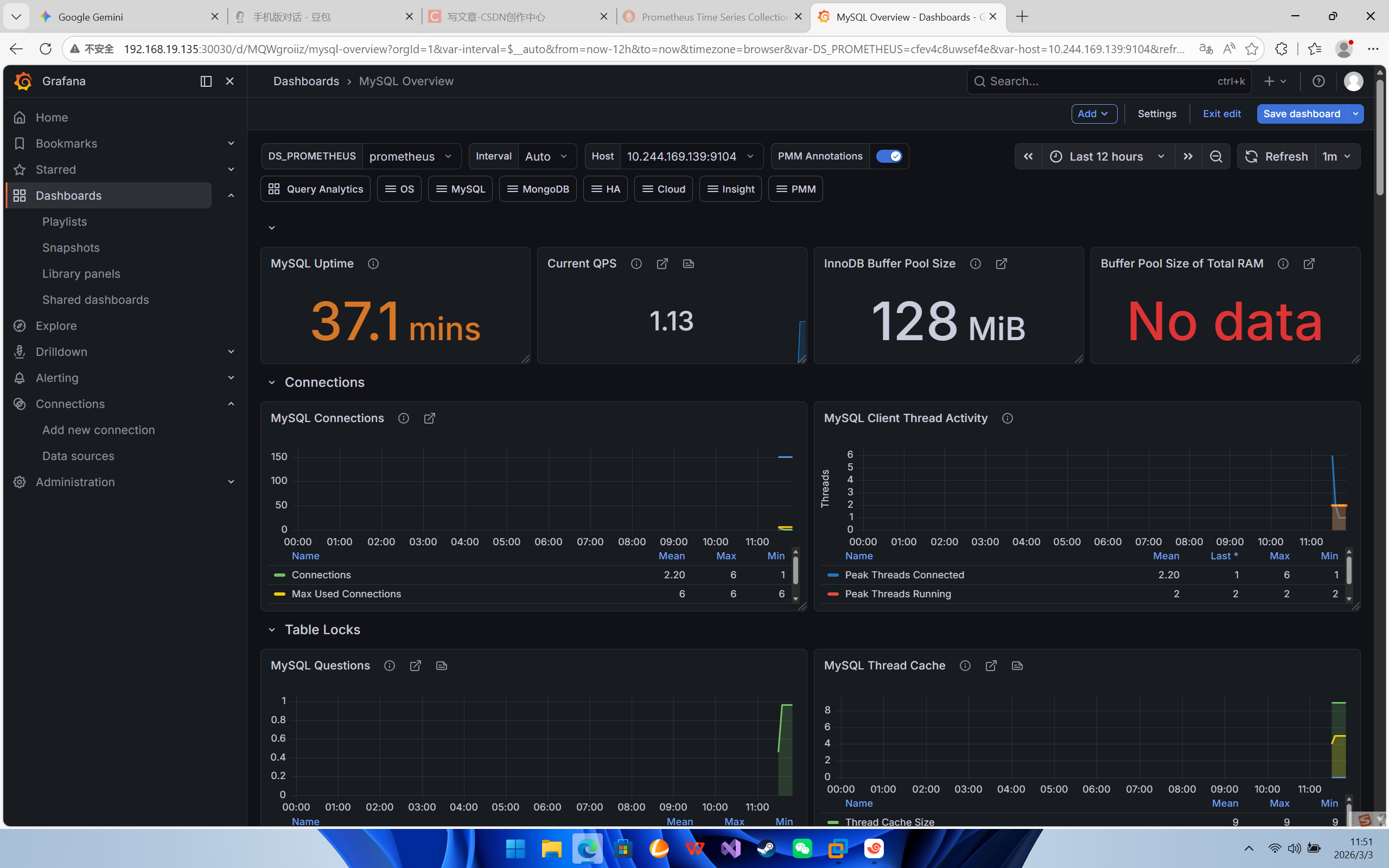

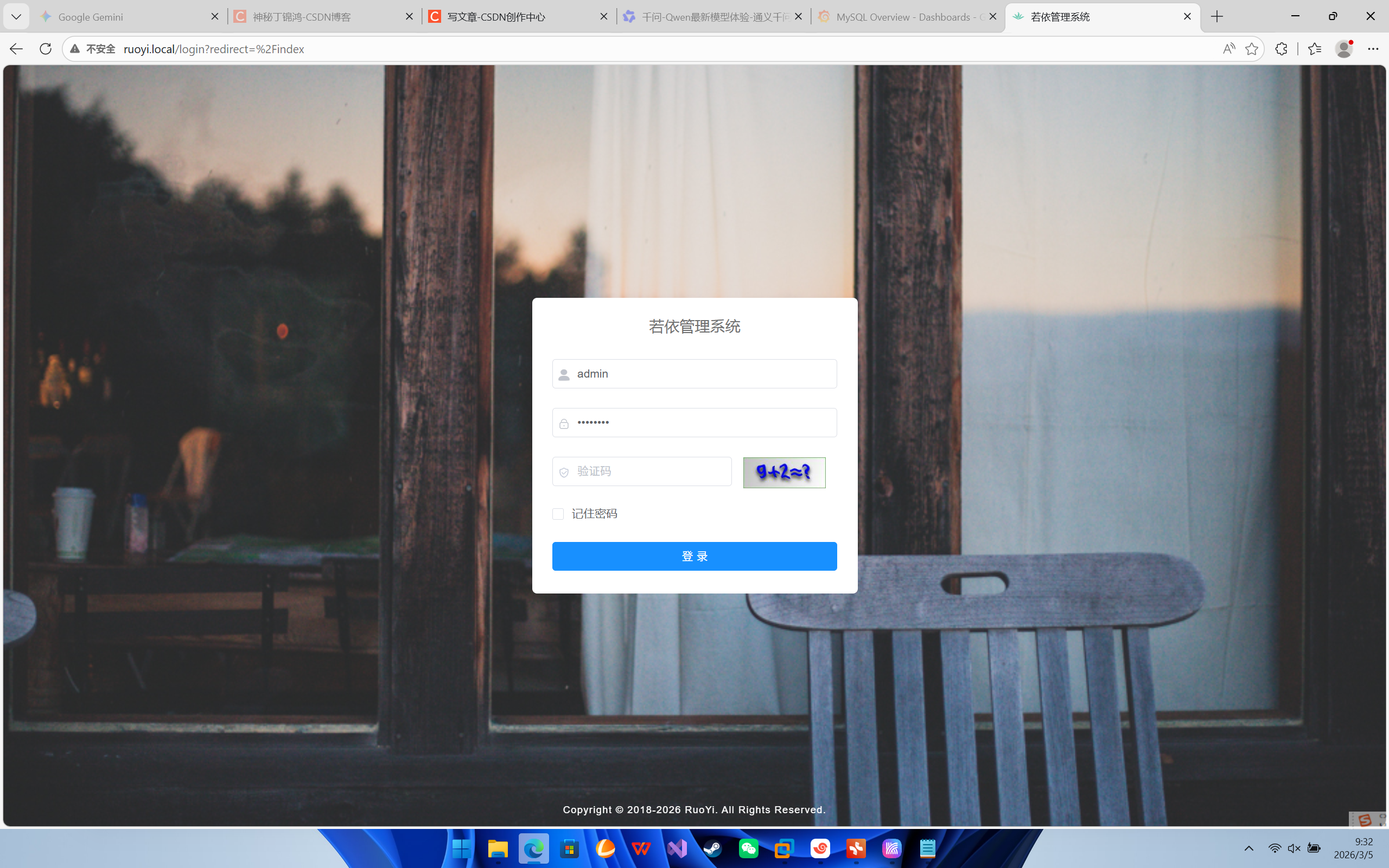

Open Grafana and configure the monitoring data source

Configure Grafana:

- Visit http://<any-node-IP>:30030 (account admin / admin).
- Add Data Source -> Prometheus -> set the URL to http://prometheus.monitoring.svc:9090.
- Import Dashboard -> enter the IDs 7362 (MySQL), 763 (Redis) and 8919 (Node Exporter).
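You can also script the data-source step against Grafana's HTTP API (`POST /api/datasources`), which helps when Grafana is redeployed. In this sketch, `grafana_ds_json` is a hypothetical helper. The credentials, the `<node-IP>` placeholder and the data source name "Prometheus" are assumptions that match the steps above.

```shell
#!/bin/sh
# Sketch: build the JSON body for Grafana's POST /api/datasources endpoint.
grafana_ds_json() {
  # $1: data source name, $2: Prometheus URL as reachable from the Grafana Pod
  printf '{"name":"%s","type":"prometheus","access":"proxy","url":"%s","isDefault":true}\n' "$1" "$2"
}

# Usage (replace <node-IP>; admin/admin are the default credentials mentioned above):
#   curl -s -u admin:admin -H 'Content-Type: application/json' \
#     -d "$(grafana_ds_json Prometheus http://prometheus.monitoring.svc:9090)" \
#     http://<node-IP>:30030/api/datasources
```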

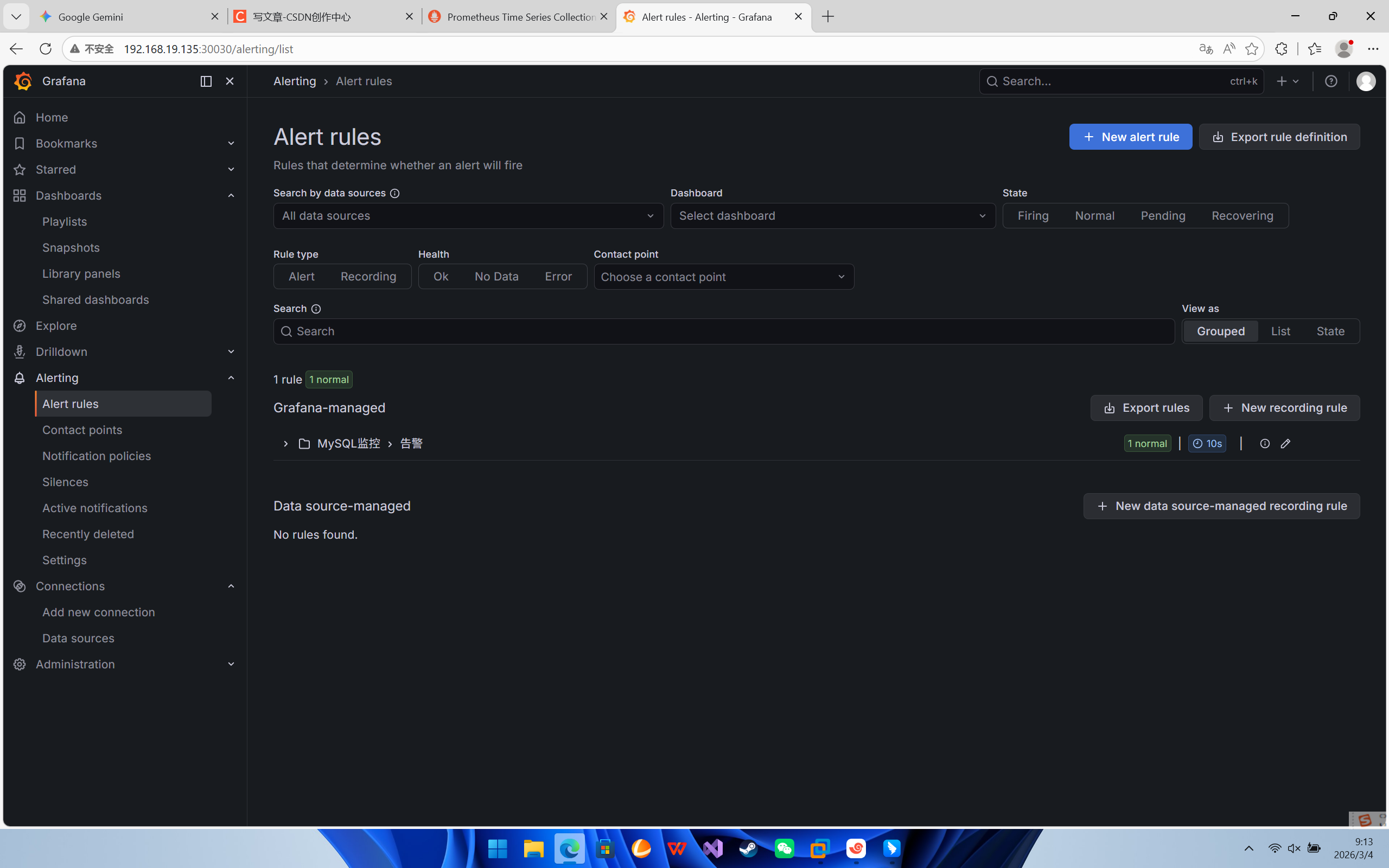

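Add monitoring alerts

Step 1: Prepare the alert receiver (DingTalk as an example)

This is the most common approach and needs no mail server configuration.

- Create a DingTalk group: create a group in DingTalk (or create one with 3 people and then remove the other 2).
- Add a robot:
  - Click the group settings in the top-right corner -> Smart Group Assistant -> Add Robot.
  - Choose Custom (connect via Webhook).
  - Security settings: choose "Custom Keywords" and enter the keyword 告警 ("alert").
  - Copy the Webhook URL (something like https://oapi.dingtalk.com/robot/send?access_token=xxxx).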
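Before you wire the robot into Grafana, you can confirm the webhook and the keyword with a direct request. In this sketch, `dingtalk_payload` is hypothetical and `WEBHOOK` stands for the URL copied above. With keyword security enabled, the robot rejects any message that does not contain 告警.

```shell
#!/bin/sh
# Sketch: build a DingTalk robot "text" message that includes the security keyword.
dingtalk_payload() {
  # $1: message text (plain text without double quotes or backslashes; it is not JSON-escaped)
  printf '{"msgtype":"text","text":{"content":"告警: %s"}}\n' "$1"
}

# Usage (needs outbound network access; WEBHOOK is the URL copied from the robot settings):
#   curl -s -H 'Content-Type: application/json' -d "$(dingtalk_payload 'test from k8s')" "$WEBHOOK"
# A successful call returns a JSON body with "errcode":0
```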

Step 2: Configure a Contact Point in Grafana

Tell Grafana where to send alerts.

- Log in to Grafana (http://monitor.local or IP:Port).
- In the left menu choose Alerting (bell icon) -> Contact points.
- Click + Add contact point.
- Fill in:
  - Name: DingTalk-Group
  - Integration: choose DingTalk.
  - Url: paste the DingTalk Webhook URL you copied.
  - MessageType: Link (or ActionCard).
- Click Save.

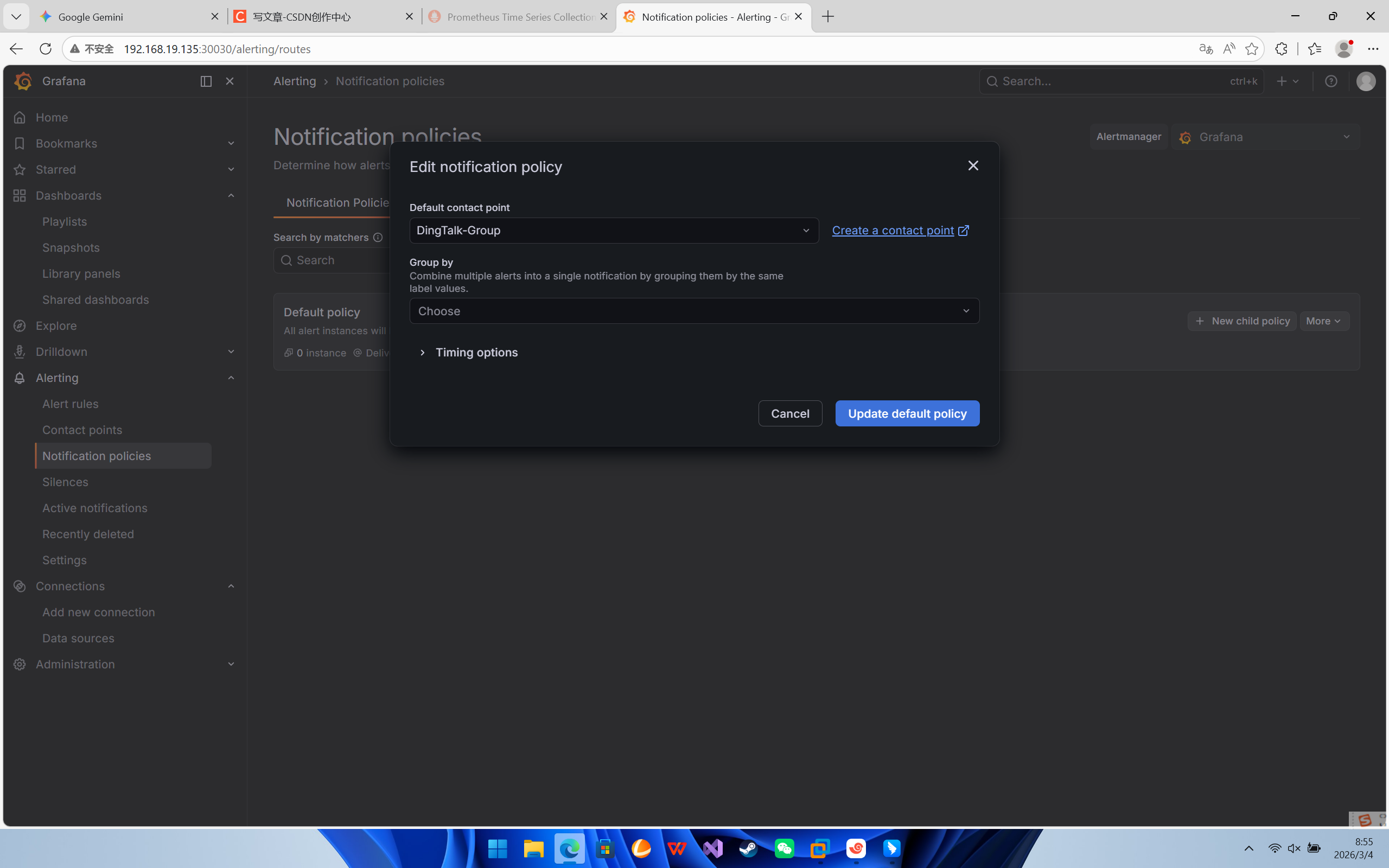

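Step 3: Configure the Notification Policy

Tell Grafana to use the DingTalk contact point by default.

- In the left menu choose Alerting -> Notification policies.
- Find the Default policy (the root policy).
- Click Edit (or the three dots -> Edit).
- In the Contact point drop-down, choose the DingTalk-Group you just created.
- Click Save policy.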

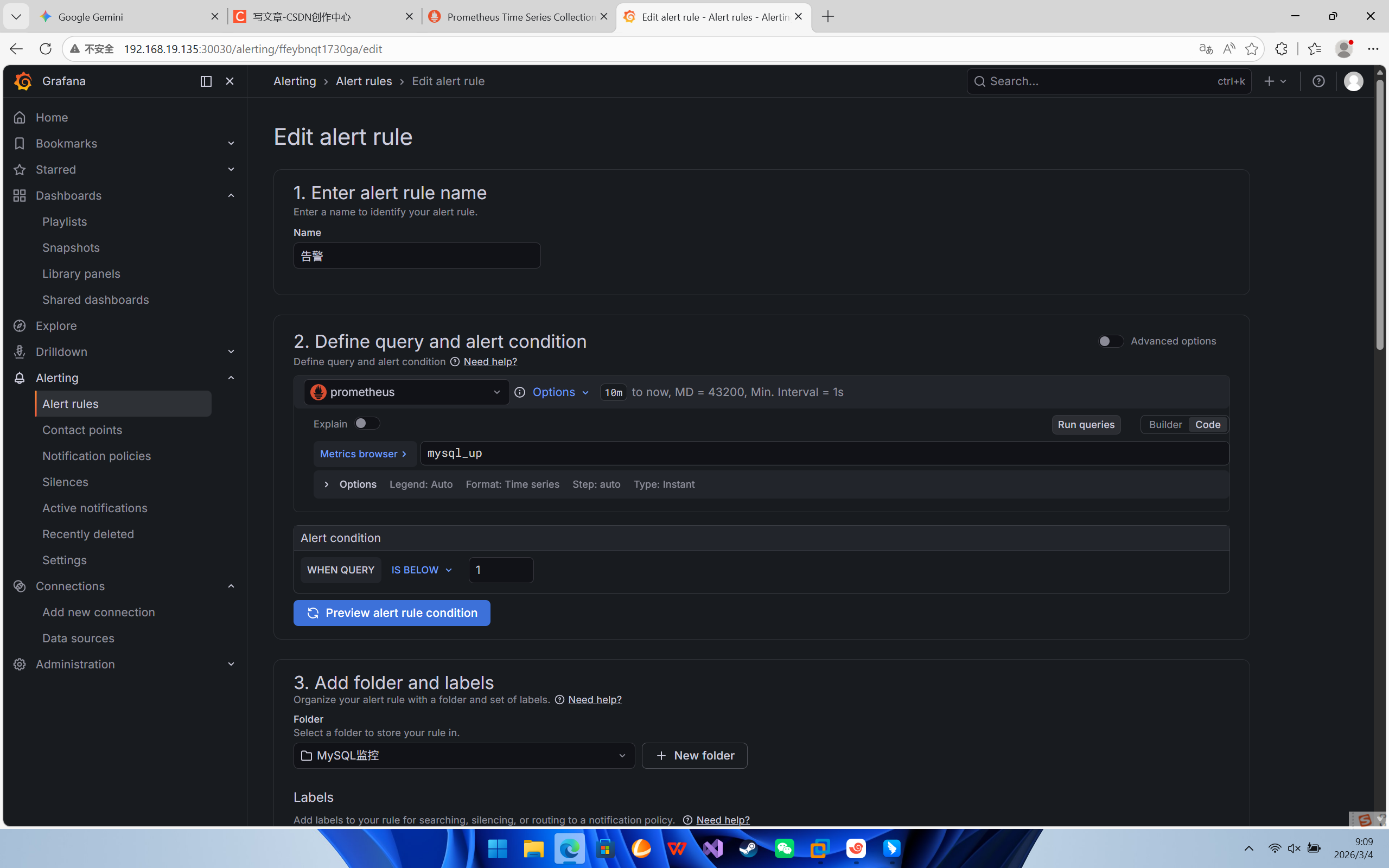

Step 4: Create an alert rule

We will configure one rule: raise an alert if MySQL goes down.

- In the left menu choose Alerting -> Alert rules.
- Click + New alert rule.

1. Define query

- Select data source: choose Prometheus.
- Query A: enter the PromQL expression mysql_up
- Run queries: click it; you should see a single line with the value 1.

2. Define condition

- Condition: Reduce -> Last (take the most recent value).
- Threshold:
  - Input B (the Reduce result above)
  - Is below
  - 1 (if the latest value drops below 1, that is, it has become 0, the alert fires)

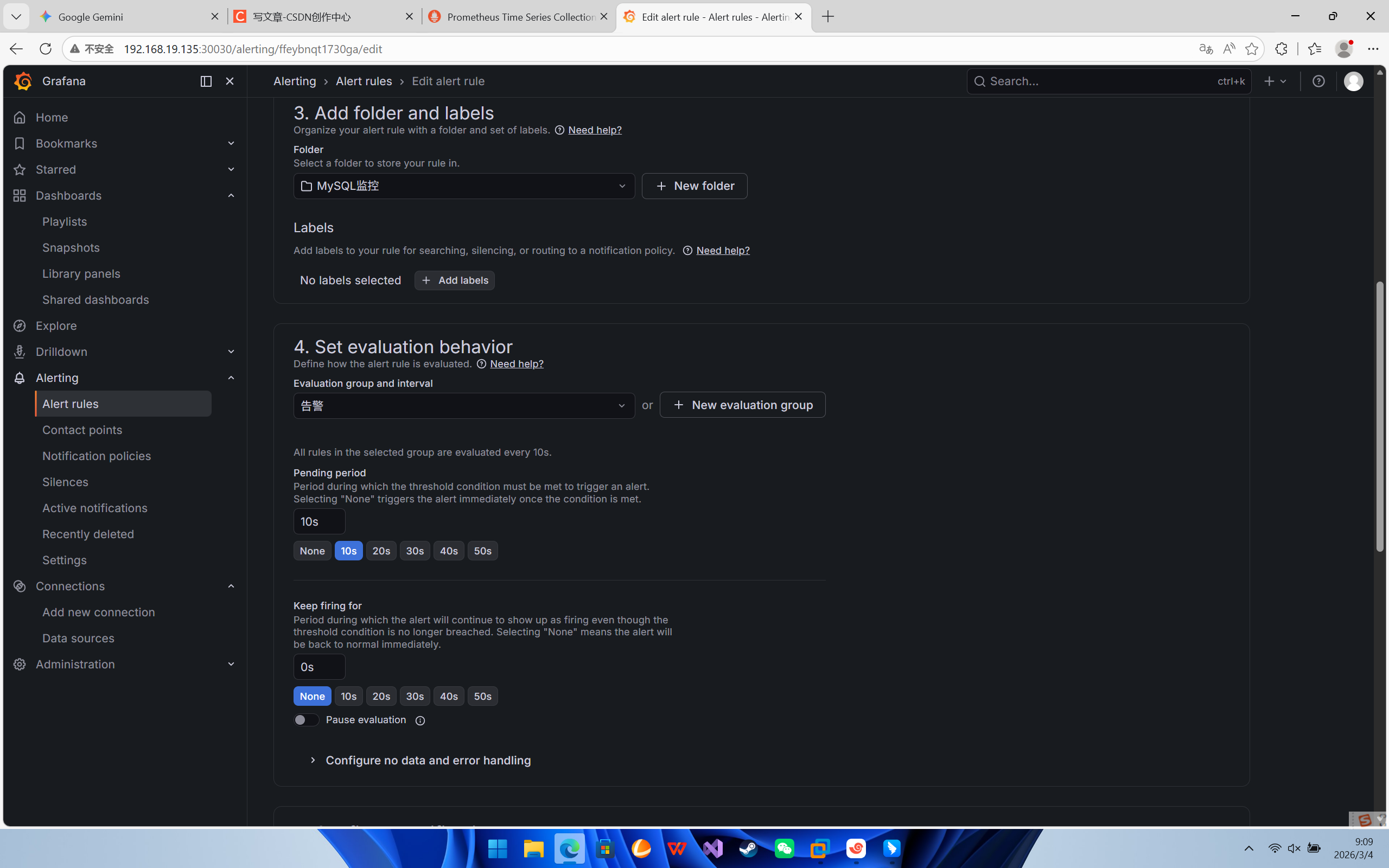

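3. Set evaluation behavior

- Folder: click + Create new folder and name it 告警 (the DingTalk keyword configured earlier).
- Group: enter Critical-Group.
- Evaluation interval: 1m (the rule is checked once a minute).
- Pending period: 1m (the condition must hold for 1 minute before an alert fires, which avoids false alarms caused by network jitter).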

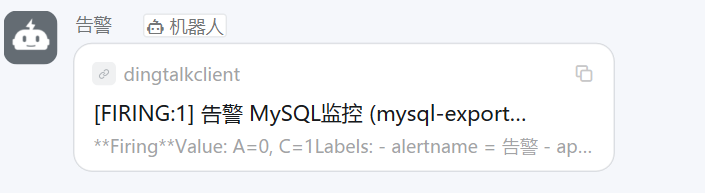

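Step 5: Verify the alert

To confirm that the rule works, take MySQL down on purpose. Do not do this casually in production; it is fine in a test environment.

- Scale MySQL down manually (simulating an outage):

  kubectl scale deploy mysql --replicas=0

- Wait:
  - About 1-2 minutes, possibly a little longer (Prometheus scrape interval + Grafana evaluation interval + the 1m pending period).
  - The rule's state in Grafana turns red (Firing).
- Check DingTalk:
  - Your DingTalk group should receive an alert message from the robot.
- Restore MySQL:

  kubectl scale deploy mysql --replicas=1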

Deploy the primary/replica database

# 1. Base configuration ConfigMap
apiVersion: v1
kind: ConfigMap
metadata:
  name: mysql-config
  namespace: default
data:
  my.cnf: |
    [mysqld]
    default-authentication-plugin=mysql_native_password
    character-set-server=utf8mb4
    collation-server=utf8mb4_unicode_ci
    skip-name-resolve
    lower-case-table-names=1
    datadir=/var/lib/mysql
    log-bin=mysql-bin
    binlog_format=ROW
    expire_logs_days=7
---
# 2. Headless Service (for communication inside the StatefulSet)
apiVersion: v1
kind: Service
metadata:
  name: mysql-headless
  namespace: default
  labels:
    app: mysql
spec:
  ports:
    - port: 3306
      name: mysql
  clusterIP: None
  selector:
    app: mysql
---
# 3. Write Service (routes only to the primary, mysql-0)
apiVersion: v1
kind: Service
metadata:
  name: mysql-write
  namespace: default
spec:
  type: NodePort
  ports:
    - port: 3306
      targetPort: 3306
      nodePort: 30006
  selector:
    statefulset.kubernetes.io/pod-name: mysql-0
---
# 4. Read Service (routes only to the replica, mysql-1)
apiVersion: v1
kind: Service
metadata:
  name: mysql-read
  namespace: default
spec:
  type: NodePort
  ports:
    - port: 3306
      targetPort: 3306
      nodePort: 30008
  selector:
    statefulset.kubernetes.io/pod-name: mysql-1
---
# 5. StatefulSet (the core part)
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: mysql
  namespace: default
spec:
  serviceName: "mysql-headless"
  replicas: 2
  selector:
    matchLabels:
      app: mysql
  template:
    metadata:
      labels:
        app: mysql
    spec:
      initContainers:
        - name: init-mysql
          image: mysql:5.7
          command:
            - bash
            - "-c"
            - |
              set -ex
              # Use the $HOSTNAME variable directly; the hostname command is not needed
              [[ $HOSTNAME =~ -([0-9]+)$ ]] || exit 1
              ordinal=${BASH_REMATCH[1]}
              echo '[mysqld]' > /mnt/conf.d/server-id.cnf
              echo server-id=$((ordinal+1)) >> /mnt/conf.d/server-id.cnf
              if [[ $ordinal -eq 1 ]]; then
                echo "read_only=1" >> /mnt/conf.d/server-id.cnf
              fi
              # Make sure the mysql user (UID 999) owns the data directory
              chown -R 999:999 /var/lib/mysql
          securityContext:
            runAsUser: 0 # root is required for chown
          volumeMounts:
            - name: data
              mountPath: /var/lib/mysql
            - name: conf
              mountPath: /mnt/conf.d
      # ▼▼▼ Main container ▼▼▼
      containers:
        - name: mysql
          image: mysql:5.7
          env:
            # Key change 1: create the database automatically, which avoids the "Unknown database" error
            - name: MYSQL_DATABASE
              value: "ry-vue"
            - name: MYSQL_ROOT_PASSWORD
              value: "password"
          # Note: do not add a command here; it would override the official image's initialization script
          ports:
            - containerPort: 3306
              name: mysql
          volumeMounts:
            - name: data
              mountPath: /var/lib/mysql
            # Mount my.cnf
            - name: config-map
              mountPath: /etc/mysql/my.cnf
              subPath: my.cnf
            # Mount the dynamically generated server-id configuration
            - name: conf
              mountPath: /etc/mysql/conf.d
            # Key change 2: mount the SQL script ConfigMap into the init directory
            - name: init-sql-volume
              mountPath: /docker-entrypoint-initdb.d
      volumes:
        - name: config-map
          configMap:
            name: mysql-config
        - name: conf
          emptyDir: {}
        # Key change 3: reference the SQL ConfigMap created at the start of the deployment
        - name: init-sql-volume
          configMap:
            name: mysql-init-db
  # Request PVCs automatically
  volumeClaimTemplates:
    - metadata:
        name: data
      spec:
        accessModes: [ "ReadWriteOnce" ]
        storageClassName: managed-nfs-storage # make sure this is the actual SC name in your cluster
        resources:
          requests:
            storage: 10Gi
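The init container relies on StatefulSet naming. Pods are called `<statefulset>-<ordinal>`, so the number after the last dash gives each Pod a stable server-id. Adding 1 keeps it non-zero, because server-id 0 cannot take part in replication. Below is a POSIX-shell sketch of the same logic that you can try outside the cluster. `server_id_cnf` is a hypothetical helper, and parameter expansion replaces the bash-only `BASH_REMATCH`.

```shell
#!/bin/sh
# Sketch: the server-id logic of the init-mysql container, as a standalone function.
server_id_cnf() {
  # $1: Pod hostname such as mysql-0 or mysql-1
  ordinal=${1##*-}
  case $ordinal in
    ''|*[!0-9]*) echo "cannot parse an ordinal from '$1'" >&2; return 1 ;;
  esac
  echo '[mysqld]'
  echo "server-id=$((ordinal + 1))"
  # Mirrors the manifest: only mysql-1 (the replica) is made read-only
  if [ "$ordinal" -eq 1 ]; then echo 'read_only=1'; fi
}

server_id_cnf mysql-0
server_id_cnf mysql-1
```

With more than one replica, test `-ge 1` instead of `-eq 1` (in both this sketch and the manifest) so that every non-zero ordinal becomes read-only.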

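Clean up the old environment before applying the manifest above. This avoids conflicts; for example, the old mysql Service and mysql-write both use nodePort 30006.

kubectl delete deployment mysql-master mysql-slave mysql --ignore-not-found
kubectl delete svc mysql mysql-master mysql-slave mysql-write mysql-read --ignore-not-found
kubectl delete pvc -l app=mysql # Careful! This deletes the previous data

I had no data worth keeping, so I skipped the export. If you do have data, dump it from the old Pod before the cleanup and import it into the new primary afterwards:

kubectl exec -n <namespace> <source-pod> -- mysqldump -u<user> -p<password> <database> > data.sql
cat data.sql | kubectl exec -i -n <namespace> <target-pod> -- mysql -u<user> -p<password> <database>

After the Pods start they are still independent of each other, so replication has to be wired up manually.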

配置主库 (mysql-0)

# 进入 mysql-0

kubectl exec -it mysql-0 -- mysql -uroot -ppassword

-- 创建复制账号

CREATE USER 'repl'@'%' IDENTIFIED WITH mysql_native_password BY 'password';

GRANT REPLICATION SLAVE ON *.* TO 'repl'@'%';

FLUSH PRIVILEGES;

-- 查看 Master 状态 (记下 File 和 Position)

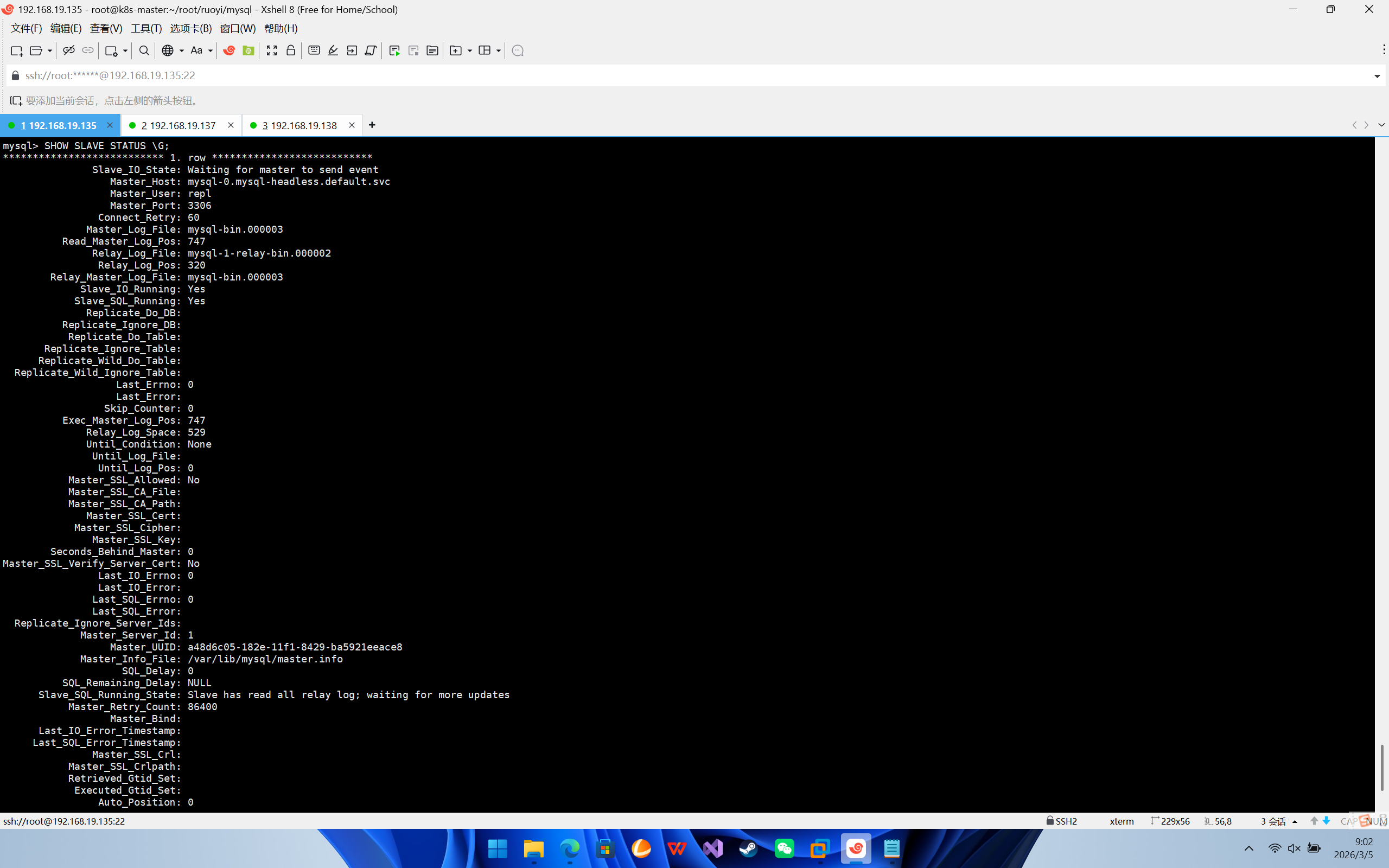

SHOW MASTER STATUS;配置从库 (mysql-1)

# Exit mysql-0, then enter mysql-1
exit
kubectl exec -it mysql-1 -- mysql -uroot -ppassword

-- Stop replication
STOP SLAVE;

-- Configure the connection (the host is mysql-0's name under the headless Service)
CHANGE MASTER TO
  MASTER_HOST='mysql-0.mysql-headless.default.svc',
  MASTER_USER='repl',
  MASTER_PASSWORD='password',
  MASTER_LOG_FILE='mysql-bin.000001', -- the File value you noted
  MASTER_LOG_POS=157; -- the Position value you noted

-- Start replication
START SLAVE;

-- Check the status (Slave_IO_Running and Slave_SQL_Running must both be Yes)
SHOW SLAVE STATUS \G
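Copying File and Position by hand is error-prone. The sketch below turns the primary's `SHOW MASTER STATUS` row into the CHANGE MASTER statement. `change_master_sql` is hypothetical. It assumes the mysql client's batch output (`-N -B`), which prints a single tab-separated row with File first and Position second.

```shell
#!/bin/sh
# Sketch: build CHANGE MASTER TO from one tab-separated SHOW MASTER STATUS row read on stdin.
change_master_sql() {
  # $1: primary host, $2: password of the repl user
  awk -F '\t' -v host="$1" -v pass="$2" -v q="'" 'NR == 1 {
    printf "CHANGE MASTER TO MASTER_HOST=%s%s%s, MASTER_USER=%srepl%s, MASTER_PASSWORD=%s%s%s, MASTER_LOG_FILE=%s%s%s, MASTER_LOG_POS=%s;\n", q, host, q, q, q, q, pass, q, q, $1, q, $2
  }'
}

# Usage (root password "password" as used throughout this article):
#   kubectl exec mysql-0 -- mysql -uroot -ppassword -N -B -e 'SHOW MASTER STATUS' \
#     | change_master_sql mysql-0.mysql-headless.default.svc password
# Review the printed statement, then run it on mysql-1 between STOP SLAVE and START SLAVE.
```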

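We deleted the original mysql Service and replaced it with two more specialized ones: mysql-write (primary) and mysql-read (replica).

The existing backend (RuoYi-Admin) and monitoring component (MySQL Exporter) still look for the name mysql. That name no longer resolves, so both will fail.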

1. Update the RuoYi backend configuration

# ...
env:
  - name: SPRING_DATASOURCE_DRUID_MASTER_URL
    # ❌ Old value (fails):
    # value: jdbc:mysql://mysql:3306/ry-vue?useUnicode=true...
    # ✅ New value (connects to the primary's Service):
    value: jdbc:mysql://mysql-write:3306/ry-vue?useUnicode=true&characterEncoding=utf8&zeroDateTimeBehavior=convertToNull&useSSL=true&serverTimezone=GMT%2B8
# ...

2. Update the MySQL monitoring (Exporter)

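The exporter also needs the new database address; otherwise Prometheus cannot scrape any data.

apiVersion: v1
kind: ConfigMap
metadata:
  name: mysql-exporter-config
  namespace: monitoring
data:
  .my.cnf: |
    [client]
    user=root
    password=password
    # ❌ Old address:
    # host=mysql.default.svc
    # ✅ New address (connects to the primary):
    host=mysql-write.default.svc
    port=3306
# ... (also check the args in the Deployment part)

# ...
args:
  - "--config.my-cnf=/etc/mysql-exporter/.my.cnf"
  # ✅ Make sure this also points to the primary's Service
  - "--mysqld.address=mysql-write.default.svc:3306"
# ...

If only the ConfigMap changed, restart the exporter so it rereads the file: kubectl -n monitoring rollout restart deployment mysql-exporter

Install Ingress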

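Install Helm

wget https://get.helm.sh/helm-v3.10.2-linux-amd64.tar.gz
# Extract it
tar -zxvf helm-v3.10.2-linux-amd64.tar.gz
# Move the helm binary from the extracted directory to /usr/local/bin/helm
mv linux-amd64/helm /usr/local/bin/helm

# Add the Aliyun repository, named aliyun
helm repo add aliyun https://kubernetes.oss-cn-hangzhou.aliyuncs.com/charts
# Refresh the repository index so the latest chart versions are available
helm repo update

# Add the ingress-nginx repository
helm repo add ingress-nginx https://kubernetes.github.io/ingress-nginx
# List the repositories
helm repo list
# Search for ingress-nginx (add --versions to list every chart version with its controller version;
# the newest chart may require a newer Kubernetes than 1.23, so consider pinning one with --version)
helm search repo ingress-nginx
# Download the chart
helm pull ingress-nginx/ingress-nginx
# Extract the downloaded chart
tar xf ingress-nginx-xxx.tgz
# Enter the extracted directory
cd ingress-nginx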

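# Edit values.yaml

Image addresses: switch the controller image and the admission webhook (kube-webhook-certgen) image to a mirror in mainland China:

registry: registry.cn-hangzhou.aliyuncs.com
image: google_containers/nginx-ingress-controller
image: google_containers/kube-webhook-certgen
tag: v1.3.0

Run the controller on the host network:

hostNetwork: true
dnsPolicy: ClusterFirstWithHostNet

Change the controller's deployment kind to kind: DaemonSet

nodeSelector:
  ingress: "true" # added selector: deploy only on nodes labeled ingress=true

Set admissionWebhooks.enabled to false

Change the service type from LoadBalancer to ClusterIP; use LoadBalancer only when the servers run on a cloud platform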

# Create a dedicated namespace for ingress
kubectl create ns ingress-nginx
# Label the node(s) that should run the ingress controller
kubectl label node k8s-node1 ingress=true
# Install ingress-nginx (run from inside the extracted chart directory)
helm install ingress-nginx . -n ingress-nginx

If you get the following error:

Error: template: ingress-nginx/templates/controller-role.yaml:48:9: executing "ingress-nginx/templates/controller-role.yaml" at <ne (index .Values.controller.extraArgs "update-status") "false">: error calling ne: invalid type for comparison

change the extraArgs section of values.yaml as follows, replacing the empty {} with an update-status entry:

extraArgs:
  update-status: "false"

然后我们就可以创建ingress

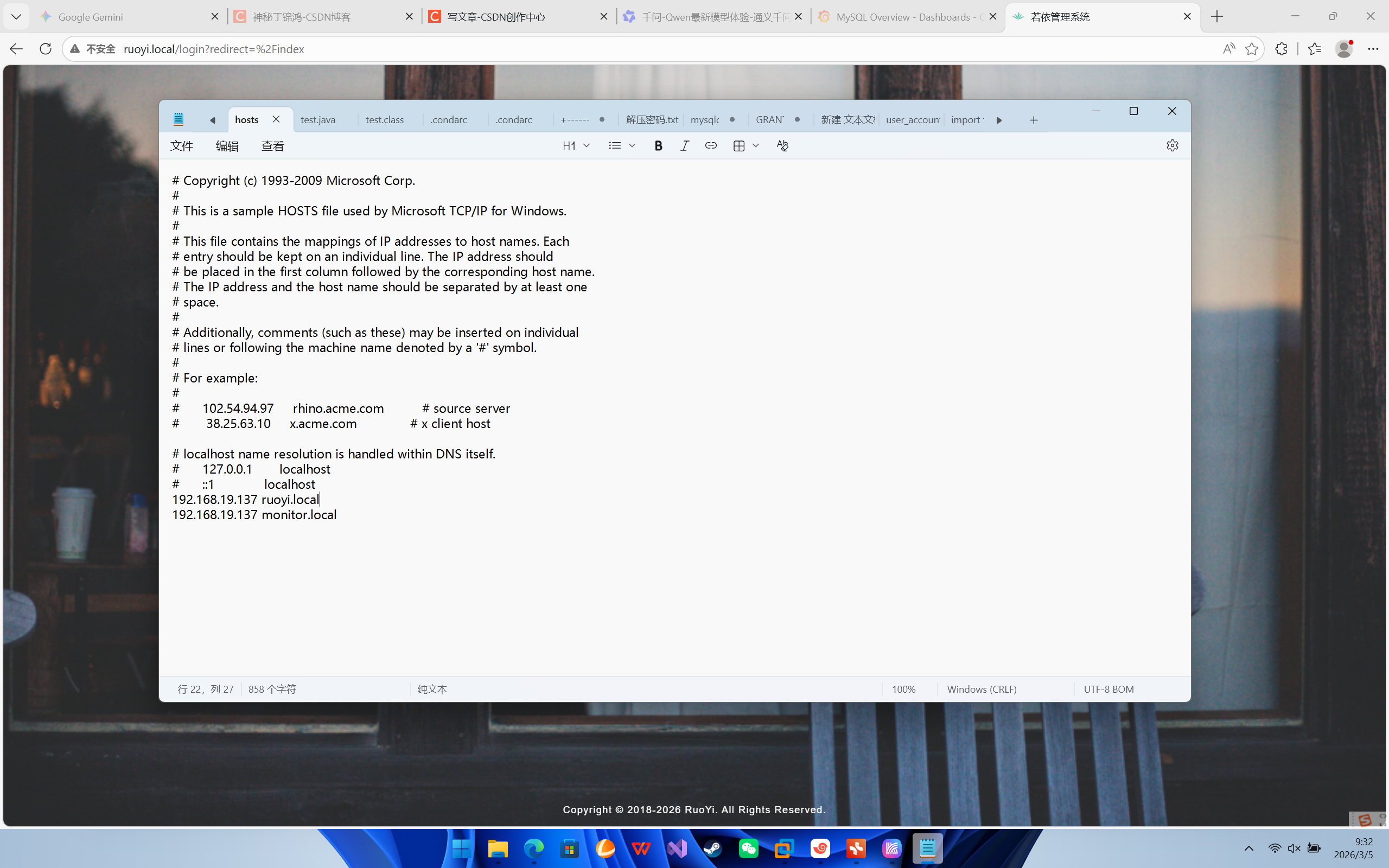

首先我们先创建若依的ingress

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: ruoyi-ingress
  namespace: default # RuoYi is deployed in default
  annotations:
    # Use the nginx controller
    kubernetes.io/ingress.class: "nginx"
    # Key: enable rewriting
    nginx.ingress.kubernetes.io/rewrite-target: /$2
    # Allow large uploads (the default is only 1m, so uploading an avatar fails)
    nginx.ingress.kubernetes.io/proxy-body-size: "50m"
    # Enable CORS (usually needed because RuoYi separates frontend and backend)
    nginx.ingress.kubernetes.io/enable-cors: "true"
spec:
  rules:
    - host: ruoyi.local
      http:
        paths:
          # 1. Backend API route: rewrite /prod-api/xxx to /xxx and send it to the backend
          - path: /prod-api(/|$)(.*)
            pathType: ImplementationSpecific
            backend:
              service:
                name: ruoyi-admin # your backend Service name
                port:
                  number: 8080 # backend Service port
          # 2. Frontend route: send every other request to the frontend Nginx
          - path: /()(.*)
            pathType: ImplementationSpecific
            backend:
              service:
                name: ruoyi-ui # your frontend Service name
                port:
                  number: 80 # frontend Service port
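rewrite-target applies to every path in this Ingress, which is why the frontend path is written as `/()(.*)`. Its empty first group makes `/$2` resolve to the full original path. The sed sketch below shows both rules. `ingress_rewrite` is hypothetical and only imitates the regex matching, since the controller itself generates nginx location blocks. Here the longer /prod-api rule is checked first.

```shell
#!/bin/sh
# Sketch: imitate rewrite-target /$2 for the two paths of ruoyi-ingress.
ingress_rewrite() {
  # $1: request path; prints "<service> <path sent to that service>"
  case $1 in
    /prod-api|/prod-api/*)
      # path: /prod-api(/|$)(.*)  ->  /$2
      printf 'ruoyi-admin %s\n' "$(printf '%s\n' "$1" | sed -E 's#^/prod-api(/|$)(.*)#/\2#')" ;;
    *)
      # path: /()(.*)  ->  /$2; group 1 is empty, so this is the same as ^/(.*) -> /\1
      printf 'ruoyi-ui %s\n' "$(printf '%s\n' "$1" | sed -E 's#^/(.*)#/\1#')" ;;
  esac
}

ingress_rewrite /prod-api/system/user/list
ingress_rewrite /prod-api
ingress_rewrite /login
```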

Monitoring Ingress

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: grafana-ingress
  namespace: monitoring # Note: the namespace where Prometheus/Grafana run
  annotations:
    kubernetes.io/ingress.class: "nginx"
spec:
  rules:
    - host: monitor.local
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: grafana # your Grafana Service name
                port:
                  number: 3000

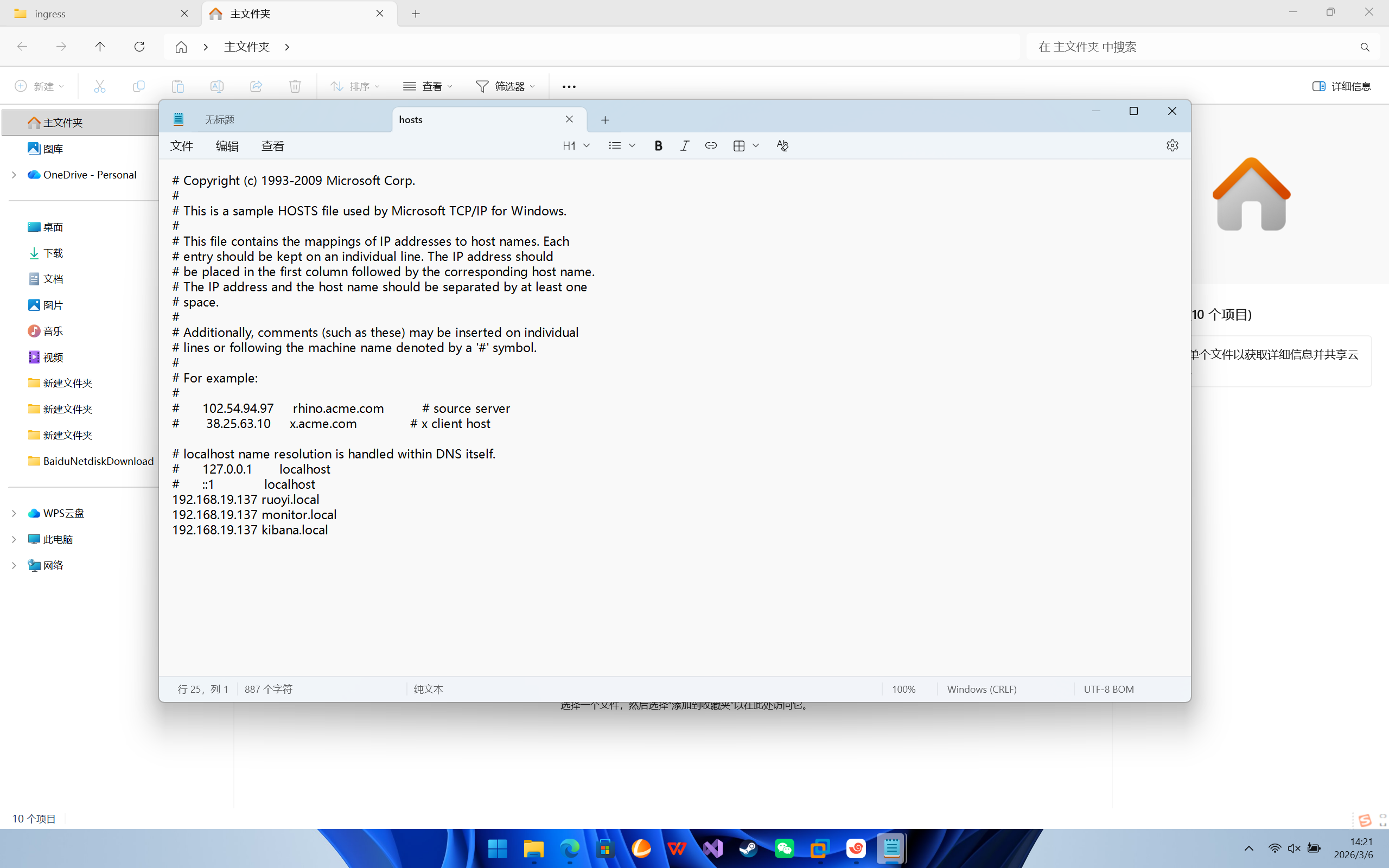

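Because I labeled node1 for ingress, the hosts file on my local machine maps these hostnames to node1's IP (for example, 192.168.19.137 ruoyi.local monitor.local).

Deploy ELK + Filebeat

Step 1: Create the namespace

kubectl create ns logging

Step 2: Deploy Elasticsearch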

# 1. Headless Service
apiVersion: v1
kind: Service
metadata:
  name: elasticsearch
  namespace: logging
  labels:
    app: elasticsearch
spec:
  selector:
    app: elasticsearch
  clusterIP: None
  ports:
    - port: 9200
      name: rest
    - port: 9300
      name: inter-node
---
# 2. StatefulSet
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: elasticsearch
  namespace: logging
spec:
  serviceName: elasticsearch
  replicas: 1
  selector:
    matchLabels:
      app: elasticsearch
  template:
    metadata:
      labels:
        app: elasticsearch
    spec:
      # ▼▼▼ 1. Key: ES requires the kernel parameter vm.max_map_count to be raised ▼▼▼
      initContainers:
        - name: increase-vm-max-map
          image: busybox
          command: ["sysctl", "-w", "vm.max_map_count=262144"]
          securityContext:
            privileged: true
        - name: fix-permissions
          image: busybox
          command: ["sh", "-c", "chown -R 1000:1000 /usr/share/elasticsearch/data"]
          volumeMounts:
            - name: data
              mountPath: /usr/share/elasticsearch/data
      containers:
        - name: elasticsearch
          image: elasticsearch:7.17.9
          imagePullPolicy: IfNotPresent
          env:
            - name: discovery.type
              value: "single-node" # single-node mode
            - name: ES_JAVA_OPTS
              value: "-Xms256m -Xmx256m" # cap the heap so it does not exhaust memory
          ports:
            - containerPort: 9200
              name: client
            - containerPort: 9300
              name: transport
          volumeMounts:
            - name: data
              mountPath: /usr/share/elasticsearch/data
  # 3. Request storage dynamically
  volumeClaimTemplates:
    - metadata:
        name: data
      spec:
        accessModes: [ "ReadWriteOnce" ]
        storageClassName: managed-nfs-storage # your SC name
        resources:
          requests:
            storage: 10Gi
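The privileged init container raises vm.max_map_count on whichever node runs the Pod. The setting is node-wide, not per container. The sketch below checks a node by hand. `check_max_map_count` is hypothetical and takes the file path as an argument, so you can try it on a copy. Elasticsearch requires at least 262144; many kernels default to 65530.

```shell
#!/bin/sh
# Sketch: verify that vm.max_map_count meets Elasticsearch's minimum of 262144.
check_max_map_count() {
  # $1: file to read; on a node this is /proc/sys/vm/max_map_count
  v=$(cat "$1") || return 2
  if [ "$v" -ge 262144 ]; then
    echo "ok ($v)"
  else
    echo "too low ($v)"
    return 1
  fi
}

# Usage on a node:
#   check_max_map_count /proc/sys/vm/max_map_count
```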

第三步:部署 Logstash

```yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: logstash-config
  namespace: logging
data:
  logstash.conf: |
    input {
      beats {
        port => 5044
      }
    }

    filter {
      # 1. Noise reduction: drop Kubernetes probe and health-check lines
      if [message] =~ /kube-probe/ or [message] =~ /HealthCheck/ {
        drop {}
      }

      # 2. Parsing: split Spring Boot log lines into fields
      grok {
        match => {
          "message" => "(?m)^%{TIMESTAMP_ISO8601:log_time}\s+%{LOGLEVEL:log_level}\s+%{NUMBER:pid}\s+---\s+\[%{DATA:thread_name}\]\s+%{JAVACLASS:class_name}\s+:\s+%{GREEDYDATA:log_content}"
        }
      }

      # 3. Time alignment: use the log line's own timestamp as @timestamp
      date {
        match => ["log_time", "yyyy-MM-dd HH:mm:ss.SSS"]
        target => "@timestamp"
        timezone => "Asia/Shanghai"
      }

      # 4. Cleanup: drop the raw fields only when parsing succeeded
      if "_grokparsefailure" not in [tags] {
        mutate {
          remove_field => ["log_time", "message"]
        }
      }
    }

    output {
      elasticsearch {
        hosts => ["elasticsearch:9200"]
        index => "k8s-logs-%{+YYYY.MM.dd}"
      }
      # For debugging, uncomment the line below to see parsed events in kubectl logs
      # stdout { codec => rubydebug }
    }
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: logstash
  namespace: logging
spec:
  replicas: 1
  selector:
    matchLabels:
      app: logstash
  template:
    metadata:
      labels:
        app: logstash
      annotations:
        prometheus.io/scrape: "false"
    spec:
      containers:
      # --- Main container: Logstash ---
      - name: logstash
        image: logstash:7.17.9
        imagePullPolicy: IfNotPresent
        ports:
        - containerPort: 5044
        env:
        - name: LS_JAVA_OPTS
          value: "-Xms512m -Xmx512m"
        - name: LOGSTASH_STDOUT_CODEC
          value: "json"
        resources:
          requests:
            cpu: 100m
            memory: 512Mi
          limits:
            cpu: 500m
            memory: 1Gi
        # Probes
        livenessProbe:
          tcpSocket:
            port: 5044
          initialDelaySeconds: 120
          periodSeconds: 20
        readinessProbe:
          tcpSocket:
            port: 5044
          initialDelaySeconds: 60
          periodSeconds: 10
        volumeMounts:
        - name: config
          mountPath: /usr/share/logstash/pipeline/logstash.conf
          subPath: logstash.conf
      volumes:
      - name: config
        configMap:
          name: logstash-config
          defaultMode: 0644
---
apiVersion: v1
kind: Service
metadata:
  name: logstash
  namespace: logging
spec:
  type: NodePort
  selector:
    app: logstash
  ports:
  - name: beats
    port: 5044
    targetPort: 5044
    nodePort: 30044
  # metrics port removed
```
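To make the grok pattern above concrete, here is a hand-worked illustration (not captured output) of what the filter section would produce for one line in Spring Boot's default console layout. The sample line is hypothetical. The pattern only fits that default layout; if an application logs with a custom Logback pattern (RuoYi's bundled logback.xml appears to use a different one), its events will carry the `_grokparsefailure` tag and keep their original `message` field.

```yaml
# Hypothetical input line (Spring Boot default console layout):
# 2026-02-12 10:15:30.123  INFO 1 --- [main] c.r.RuoYiApplication : Started RuoYiApplication
#
# Fields the filter section would leave on the event (hand-derived, illustrative):
log_level: "INFO"
pid: "1"                                  # grok captures strings unless written %{NUMBER:pid:int}
thread_name: "main"
class_name: "c.r.RuoYiApplication"
log_content: "Started RuoYiApplication"
"@timestamp": "2026-02-12T02:15:30.123Z"  # 10:15:30.123 Asia/Shanghai (UTC+8), stored in UTC
# log_time and message are gone: the mutate step removes them because grok succeeded
```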

Step 4: Deploy Kibana

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: kibana
  namespace: logging
spec:
  replicas: 1
  selector:
    matchLabels:
      app: kibana
  template:
    metadata:
      labels:
        app: kibana
    spec:
      containers:
      - name: kibana
        image: kibana:7.17.9
        imagePullPolicy: IfNotPresent
        env:
        - name: ELASTICSEARCH_HOSTS
          value: "http://elasticsearch:9200" # the in-cluster ES Service
        - name: I18N_LOCALE
          value: "zh-CN" # enable the Chinese UI
        ports:
        - containerPort: 5601
---
apiVersion: v1
kind: Service
metadata:
  name: kibana
  namespace: logging
spec:
  type: NodePort
  selector:
    app: kibana
  ports:
  - port: 5601
    targetPort: 5601
    nodePort: 30601 # port for external access
```
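The Kibana Deployment above defines no probes, so the Service can route traffic to Kibana before it has finished connecting to Elasticsearch. A minimal sketch of a readiness probe, assuming Kibana's `/api/status` endpoint returns an error status while the server is still starting and a success status once it is ready; the timing values are assumptions to tune for your nodes. It belongs under the `kibana` container, at the same level as `ports:` (indent to match).

```yaml
# Hypothetical addition to the kibana container spec
readinessProbe:
  httpGet:
    path: /api/status   # assumed health endpoint; errors while Kibana is still starting
    port: 5601
  initialDelaySeconds: 60
  periodSeconds: 10
  timeoutSeconds: 5
```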

Step 5: Deploy Filebeat

```yaml
# 1. Permissions (RBAC)
apiVersion: v1
kind: ServiceAccount
metadata:
  name: filebeat
  namespace: logging
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: filebeat
rules:
- apiGroups: [""] # "" indicates the core API group
  resources:
  - namespaces
  - pods
  - nodes
  verbs:
  - get
  - watch
  - list
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: filebeat
subjects:
- kind: ServiceAccount
  name: filebeat
  namespace: logging
roleRef:
  kind: ClusterRole
  name: filebeat
  apiGroup: rbac.authorization.k8s.io
---
# 2. ConfigMap (collection rules)
apiVersion: v1
kind: ConfigMap
metadata:
  name: filebeat-config
  namespace: logging
data:
  filebeat.yml: |
    filebeat.inputs:
    - type: container
      paths:
        - /var/log/containers/*.log
      processors:
        - add_kubernetes_metadata:
            host: ${NODE_NAME}
            matchers:
            - logs_path:
                logs_path: "/var/log/containers/"

    output.logstash:
      hosts: ["logstash:5044"]
---
# 3. DaemonSet (collector)
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: filebeat
  namespace: logging
spec:
  selector:
    matchLabels:
      app: filebeat
  template:
    metadata:
      labels:
        app: filebeat
    spec:
      serviceAccountName: filebeat
      terminationGracePeriodSeconds: 30
      dnsPolicy: ClusterFirstWithHostNet
      hostNetwork: true # recommended; makes it easier to capture host network information
      containers:
      - name: filebeat
        image: elastic/filebeat:7.17.9
        args: [
          "-c", "/etc/filebeat.yml",
          "-e",
        ]
        env:
        - name: NODE_NAME
          valueFrom:
            fieldRef:
              fieldPath: spec.nodeName
        securityContext:
          runAsUser: 0 # must run as root to read the host's log files
        volumeMounts:
        - name: config
          mountPath: /etc/filebeat.yml
          readOnly: true
          subPath: filebeat.yml
        - name: data
          mountPath: /usr/share/filebeat/data
        - name: varlibdockercontainers
          mountPath: /var/lib/docker/containers
          readOnly: true
        - name: varlog
          mountPath: /var/log
          readOnly: true
      volumes:
      - name: config
        configMap:
          name: filebeat-config
      - name: varlibdockercontainers
        hostPath:
          path: /var/lib/docker/containers # change this if your Docker data directory was moved
      - name: varlog
        hostPath:
          path: /var/log
      - name: data
        hostPath:
          path: /var/lib/filebeat-data
          type: DirectoryOrCreate
```
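With the `container` input, every line of a Java stack trace reaches Logstash as a separate event, so one exception is split across many documents and each continuation line fails the grok match. A minimal sketch, assuming every new log entry starts with a `yyyy-MM-dd` date as in the Spring Boot layout parsed by Logstash above: the input's `multiline.*` options append lines that do not start with a date to the preceding entry. This would replace the `filebeat.inputs` section inside the `filebeat.yml` key of the `filebeat-config` ConfigMap (indented accordingly); the date pattern is an assumption to adjust to your log format.

```yaml
filebeat.inputs:
- type: container
  paths:
    - /var/log/containers/*.log
  # Lines that do NOT start with a date are appended to the previous event
  multiline.type: pattern
  multiline.pattern: '^\d{4}-\d{2}-\d{2}'
  multiline.negate: true
  multiline.match: after
  processors:
    - add_kubernetes_metadata:
        host: ${NODE_NAME}
        matchers:
        - logs_path:
            logs_path: "/var/log/containers/"
```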

Apply the manifests one by one, in the order of the steps above.

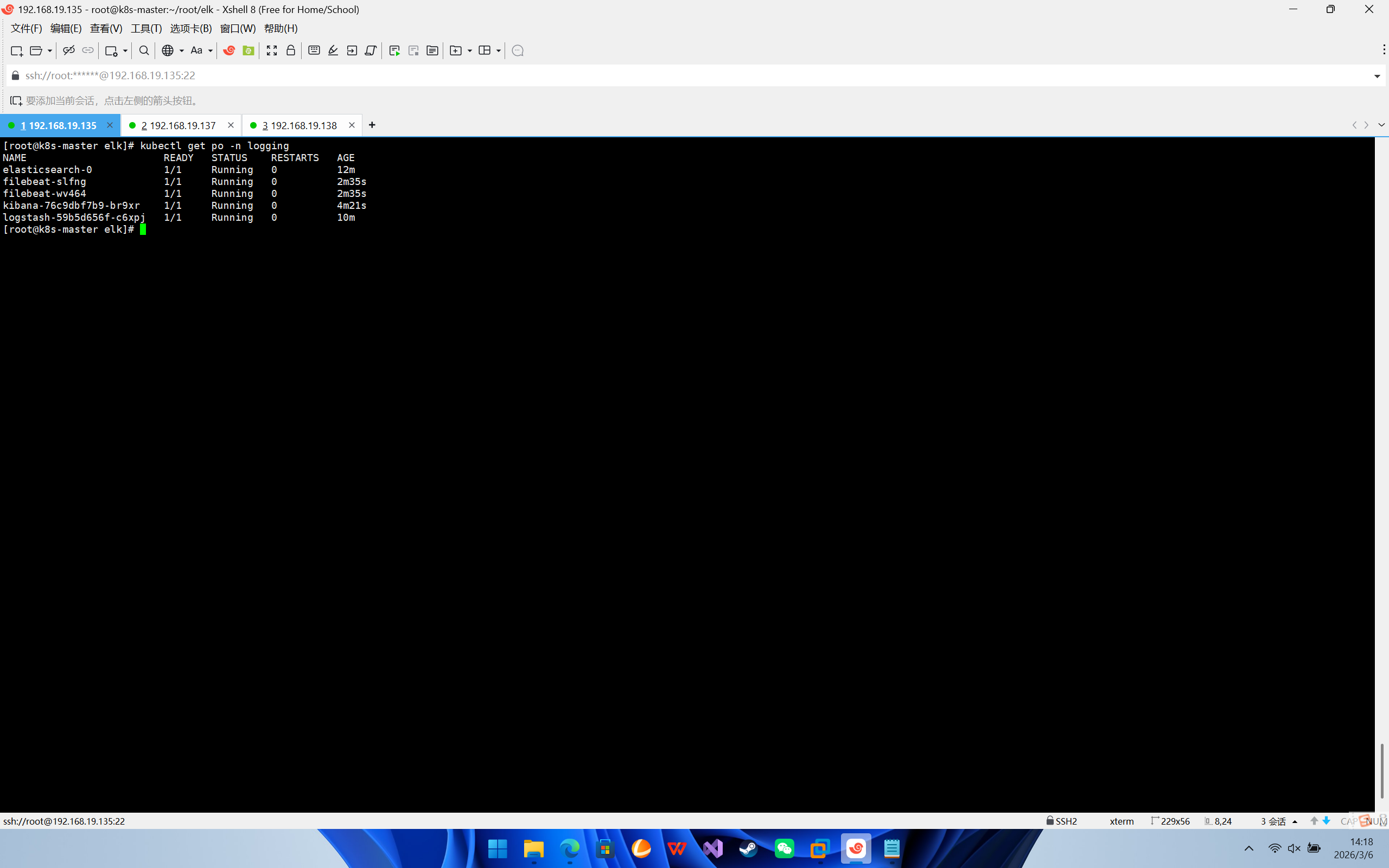

Step 6: Verify and use

-

检查 Pod 状态

kubectl get pods -n logging

等待所有 Pod (es, logstash, kibana, filebeat) 都变成

Running。ES 启动比较慢,可能需要 1-2 分钟。 -

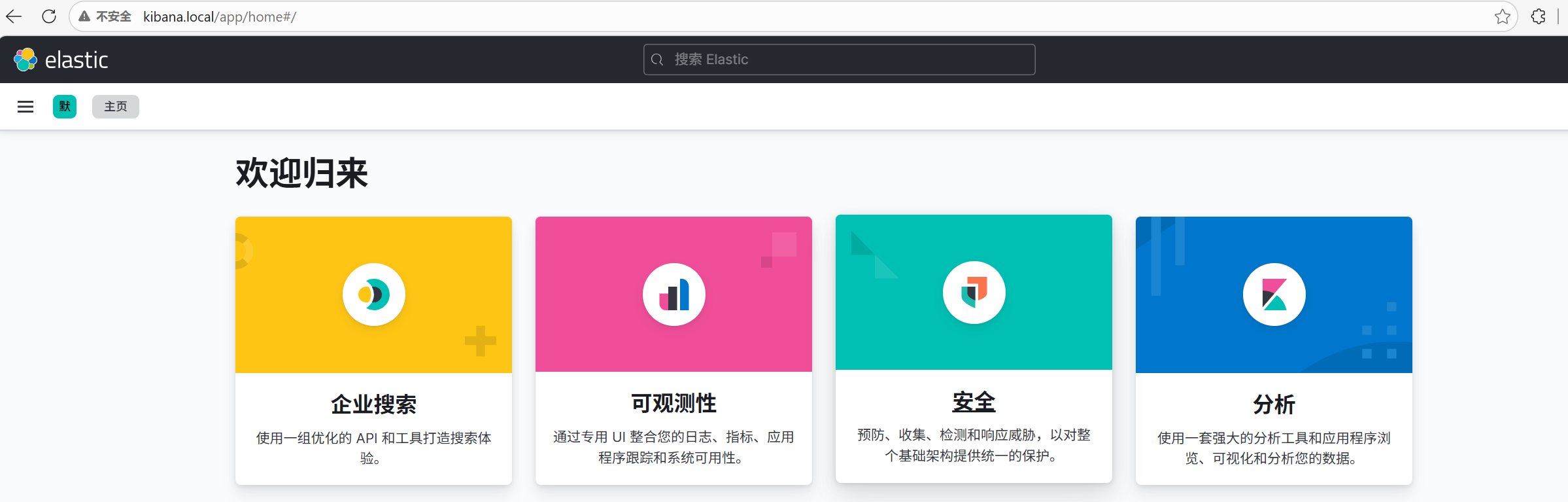

访问 Kibana: 浏览器打开:

http://<你的节点IP>:30601 -

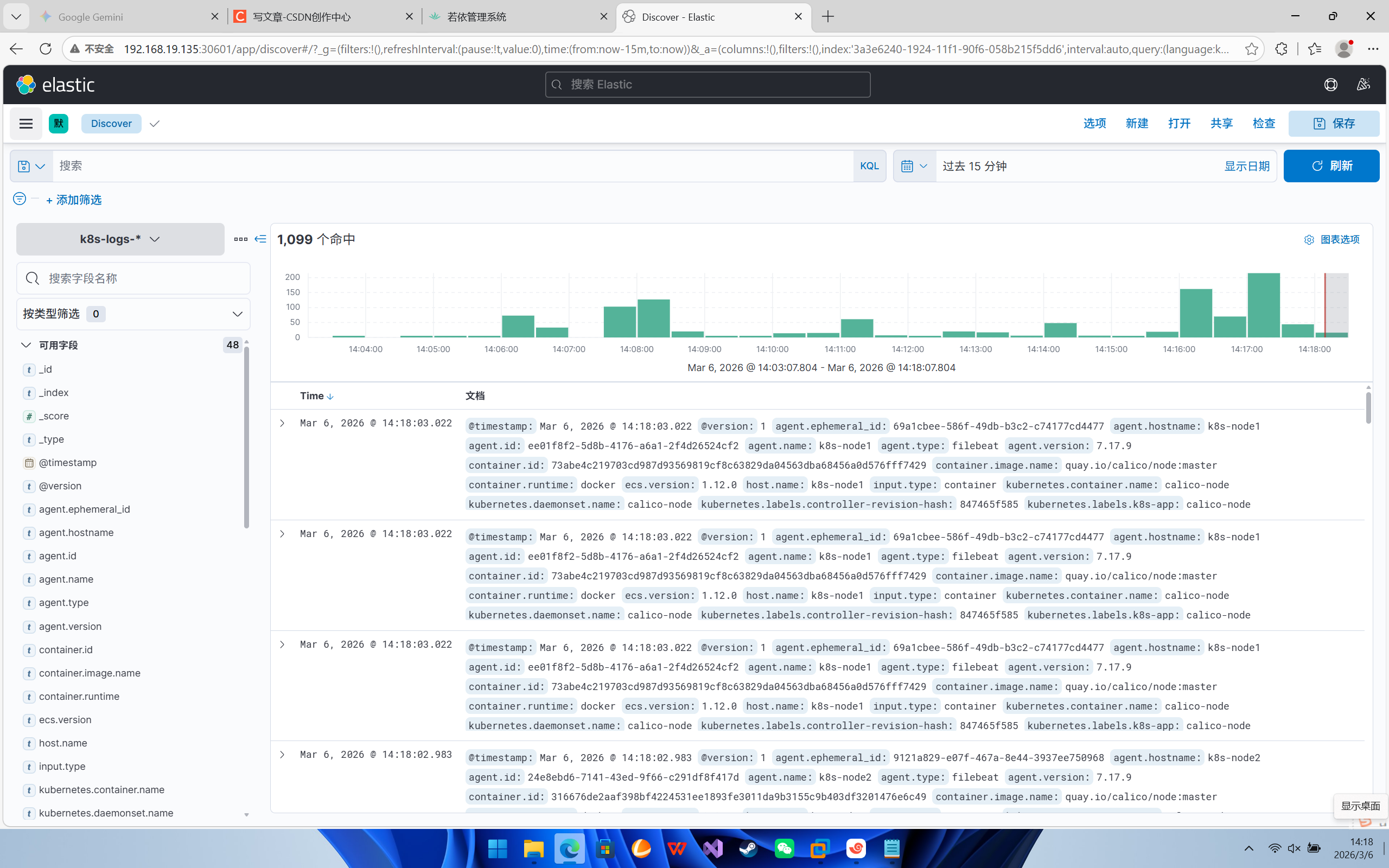

配置 Kibana (首次访问):

-

进入首页后,点击左侧菜单的 "Stack Management" (通常在最下面)。

-

点击 "Index Patterns" -> "Create index pattern"。

-

你应该能看到已经在右侧列出了类似

k8s-logs-2026.02.12的索引(这说明 Logstash 已经把数据灌进去了)。 -

在输入框输入

k8s-logs-*,点击 Next。 -

Time field 选择

@timestamp,点击 Create。

-

-

查看日志:

-

点击左侧菜单 "Discover"。

-

现在你可以看到整个 Kubernetes 集群所有容器的实时日志了!

-

你可以在搜索框输入

kubernetes.container.name : "ruoyi-admin"来只看若依后端的日志。

-

然后用ingress也给日志端添加域名

```yaml
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: kibana-ingress
  namespace: logging # Note: must match the namespace of the Service
  annotations:
    kubernetes.io/ingress.class: "nginx"
spec:
  rules:
  - host: kibana.local
    http:
      paths:
      - path: /
        pathType: Prefix
        backend:
          service:
            name: kibana # the Service name in the logging namespace
            port:
              number: 5601
```
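The `kubernetes.io/ingress.class` annotation is still honored by ingress-nginx, but Kubernetes documents it as deprecated in favor of the `spec.ingressClassName` field. A sketch of the same Ingress using the field, assuming your ingress-nginx installation created an IngressClass named `nginx` (check with `kubectl get ingressclass`). The same change applies to the Jenkins Ingress later in this guide.

```yaml
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: kibana-ingress
  namespace: logging
spec:
  ingressClassName: nginx   # assumes an IngressClass named "nginx" exists
  rules:
  - host: kibana.local
    http:
      paths:
      - path: /
        pathType: Prefix
        backend:
          service:
            name: kibana
            port:
              number: 5601
```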

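Access through the domain now works (kibana.local must resolve to a node running the ingress controller, for example through a hosts-file entry on your client machine).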

Setting up Jenkins for automated deployment (CI/CD)

Step 1: Create the namespace and permissions

```yaml
apiVersion: v1
kind: Namespace
metadata:
  name: devops
---
# 1. Create the service account
apiVersion: v1
kind: ServiceAccount
metadata:
  name: jenkins
  namespace: devops
---
# 2. Create a ClusterRole (broad permissions to manage Pods and workloads)
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: jenkins-admin
rules:
- apiGroups: [""]
  resources: ["pods", "pods/exec", "pods/log", "secrets", "configmaps", "services", "persistentvolumeclaims"]
  verbs: ["get", "list", "watch", "create", "delete", "update"]
- apiGroups: ["apps"]
  resources: ["deployments", "statefulsets"]
  verbs: ["get", "list", "watch", "create", "delete", "update", "patch"]
---
# 3. Bind the role
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: jenkins-admin
subjects:
- kind: ServiceAccount
  name: jenkins
  namespace: devops
roleRef:
  kind: ClusterRole
  name: jenkins-admin
  apiGroup: rbac.authorization.k8s.io
```
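The ClusterRole above is cluster-wide (including read access to every Secret) and does not grant a few verbs the Jenkins Kubernetes plugin may use, such as `patch` on Pods or `watch` on events. If Jenkins only needs to launch agent Pods in `devops`, a narrower namespaced Role is one alternative. The rules below are modeled on the service-account example in the Kubernetes plugin's documentation and should be checked against the plugin version you install; the name `jenkins-agent-launcher` is hypothetical. Keep the ClusterRole if your pipelines must also deploy into other namespaces.

```yaml
apiVersion: rbac.authorization.k8s.io/v1
kind: Role
metadata:
  name: jenkins-agent-launcher   # hypothetical name
  namespace: devops
rules:
- apiGroups: [""]
  resources: ["pods", "pods/exec"]
  verbs: ["create", "delete", "get", "list", "patch", "update", "watch"]
- apiGroups: [""]
  resources: ["pods/log"]
  verbs: ["get", "list", "watch"]
- apiGroups: [""]
  resources: ["events"]
  verbs: ["watch"]
- apiGroups: [""]
  resources: ["secrets"]
  verbs: ["get"]
---
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  name: jenkins-agent-launcher
  namespace: devops
subjects:
- kind: ServiceAccount
  name: jenkins
  namespace: devops
roleRef:
  kind: Role
  name: jenkins-agent-launcher
  apiGroup: rbac.authorization.k8s.io
```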

Step 2: Create storage

```yaml
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: jenkins-home
  namespace: devops
spec:
  accessModes:
  - ReadWriteOnce
  storageClassName: managed-nfs-storage
  resources:
    requests:
      storage: 10Gi
```

Step 3: Deploy Jenkins

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: jenkins
  namespace: devops
spec:
  replicas: 1
  selector:
    matchLabels:
      app: jenkins
  template:
    metadata:
      labels:
        app: jenkins
    spec:
      nodeName: k8s-node2
      serviceAccountName: jenkins
      # 1. Pod level: keep only fsGroup (gives the volume group permissions); no runAsUser here
      securityContext:
        fsGroup: 1000
      initContainers:
      - name: fix-permissions
        image: busybox
        imagePullPolicy: IfNotPresent
        # 2. Key change: force the init container to run as root (UID 0) so chown is allowed
        securityContext:
          runAsUser: 0
        command: ["sh", "-c", "chown -R 1000:1000 /var/jenkins_home"]
        volumeMounts:
        - name: jenkins-home
          mountPath: /var/jenkins_home
      containers:
      - name: jenkins
        image: jenkins/jenkins:lts
        imagePullPolicy: IfNotPresent
        # 3. runAsUser: 1000 moved here so it constrains only the main Jenkins container
        securityContext:
          runAsUser: 1000
        env:
        - name: JAVA_OPTS
          value: "-Xmx1024m -Xms512m -Duser.timezone=Asia/Shanghai"
        - name: JENKINS_UC
          value: "https://mirrors.huaweicloud.com/jenkins/updates/update-center.json"
        - name: JENKINS_UC_DOWNLOAD
          value: "https://mirrors.huaweicloud.com/jenkins"
        ports:
        - containerPort: 8080
          name: http
        - containerPort: 50000
          name: agent
        volumeMounts:
        - name: jenkins-home
          mountPath: /var/jenkins_home
        livenessProbe:
          httpGet:
            path: /login
            port: 8080
          initialDelaySeconds: 60
          periodSeconds: 20
        readinessProbe:
          httpGet:
            path: /login
            port: 8080
          initialDelaySeconds: 30
          periodSeconds: 20
      volumes:
      - name: jenkins-home
        persistentVolumeClaim:
          claimName: jenkins-home
---
apiVersion: v1
kind: Service
metadata:
  name: jenkins
  namespace: devops
spec:
  selector:
    app: jenkins
  type: ClusterIP
  ports:
  - name: http
    port: 8080
    targetPort: 8080
  - name: agent
    port: 50000
    targetPort: 50000
```

Create the Ingress

```yaml
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: jenkins-ingress
  namespace: devops # must be in the same namespace as the Service
  annotations:
    kubernetes.io/ingress.class: "nginx"
    # Request body size limit (needed when uploading plugins)
    nginx.ingress.kubernetes.io/proxy-body-size: "100m"
spec:
  rules:
  - host: jenkins.local # your domain
    http:
      paths:
      - path: /
        pathType: Prefix
        backend:
          service:
            name: jenkins
            port:
              number: 8080
```

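After applying these manifests in order, open the domain in a browser.

The initial admin password is printed in the Pod logs. Get it with the command below, replacing the Pod name with your own:

kubectl logs jenkins-5f75c8bd4b-q78xb -n devops

Plugin installation in the setup wizard may fail because of network problems; you can skip it for now.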

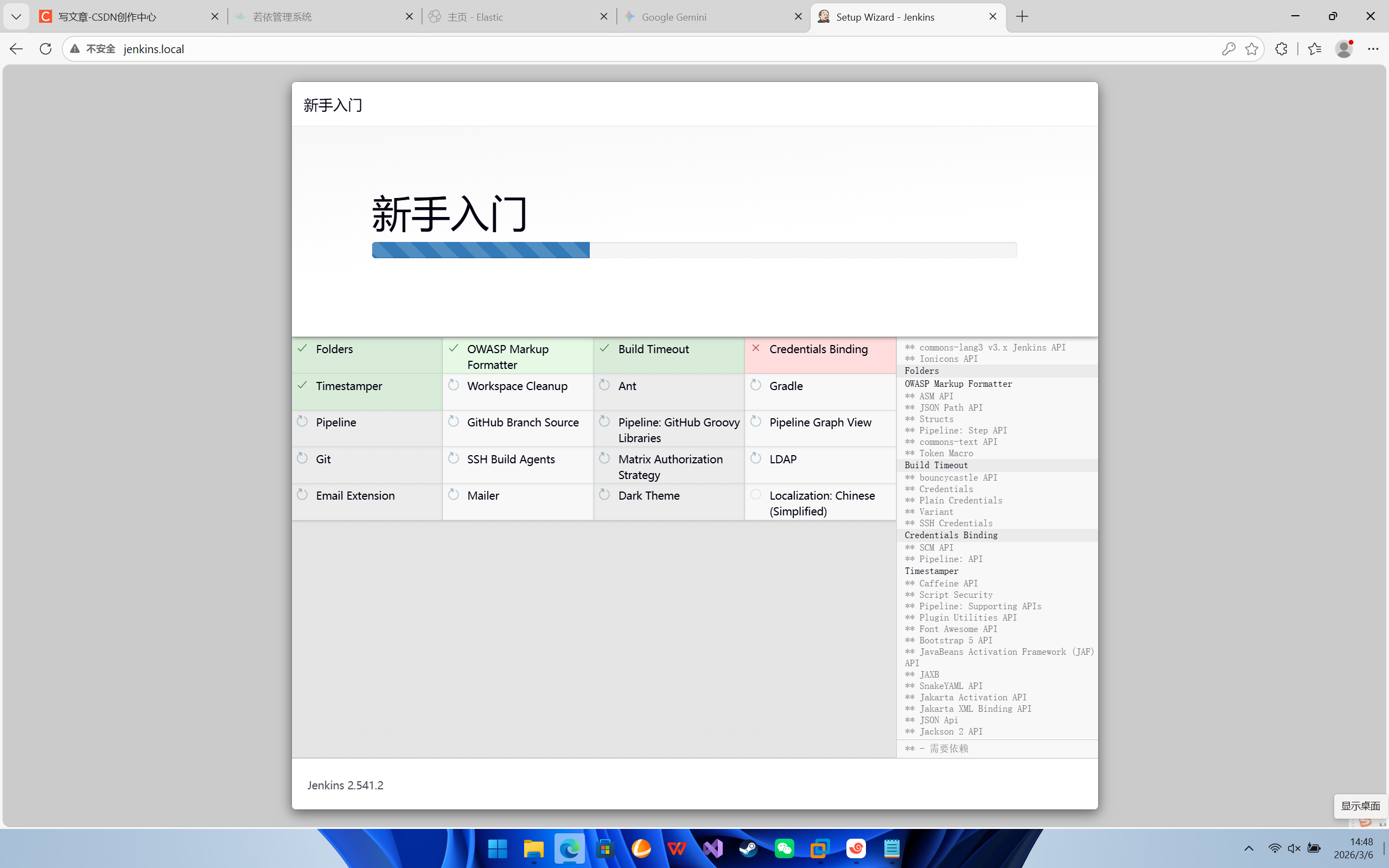

我们需要安装以下插件

Kubernetes

Docker

Docker Pipeline

Config File Provider

Git

GitLab (如果你自己搭了 GitLab)

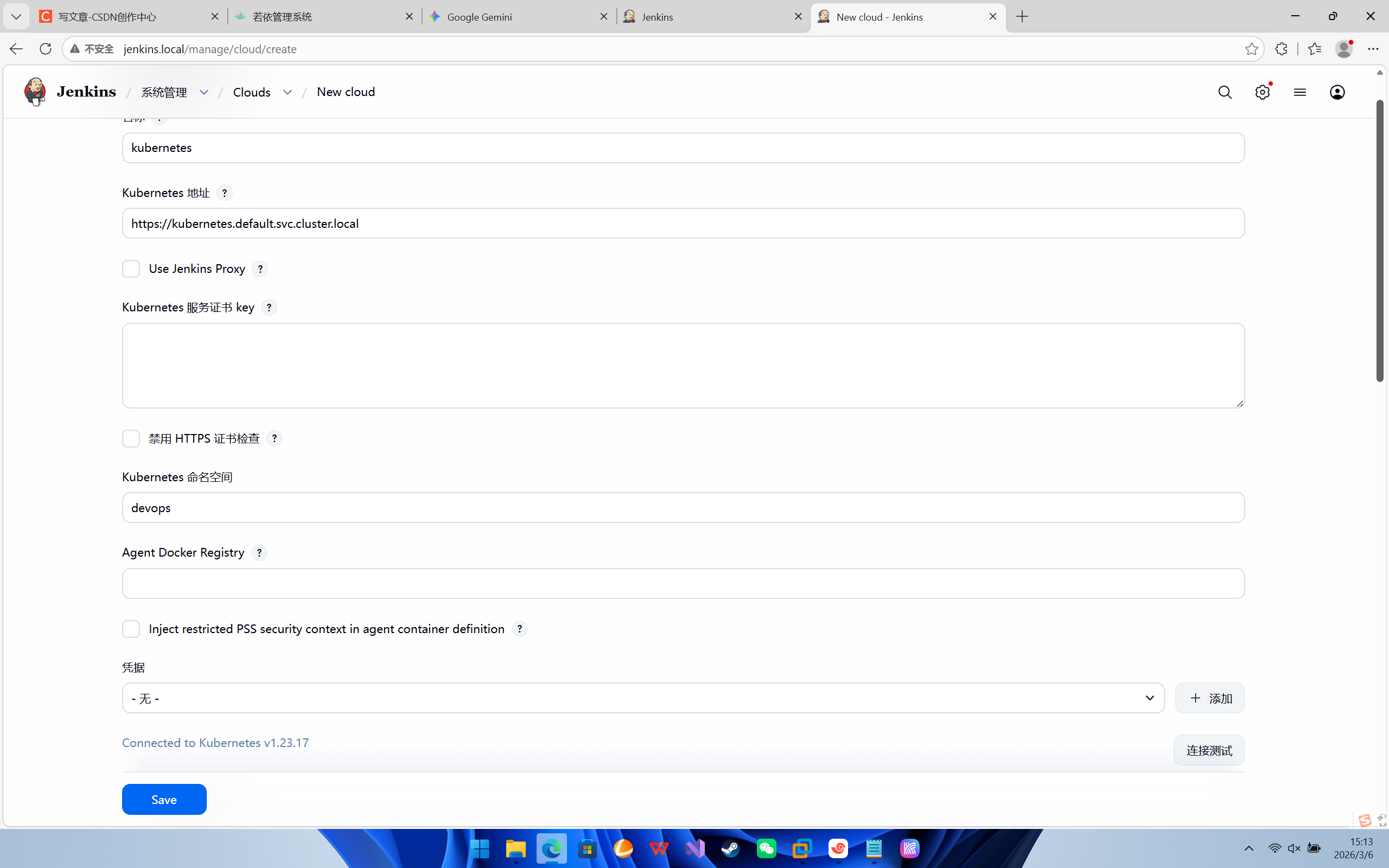

Blue Ocean第一步:配置 Jenkins 连接 Kubernetes 集群

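The goal is to let Jenkins create agent Pods on demand in the devops namespace.

- Log in to Jenkins and click Manage Jenkins in the left menu.
- Under System Configuration, find Clouds and open it.
- Click + New cloud.
- For Name, enter kubernetes; for Type, choose Kubernetes; then click Create.
- Expand Kubernetes Cloud details and fill in the key fields:
  - Kubernetes URL: https://kubernetes.default.svc.cluster.local (the cluster-internal API server address; this value is the same in every cluster).
  - Kubernetes Namespace: devops (the namespace in which Jenkins creates its agent Pods).
  - Click Test Connection. If you see Connected to Kubernetes v1.xx.x, the connection works. No credentials are needed here because Jenkins runs inside the cluster with the jenkins service account.
  - Jenkins URL: http://jenkins.devops.svc.cluster.local:8080 (the address agents use to connect back to the Jenkins controller).
- Click Save at the bottom.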

Create a pipeline

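- Return to the Jenkins home page and click + New Item at the top left.
- Enter a job name, for example 01-K8s-Agent-Test.
- Select Pipeline and click OK.
- Scroll down to the Pipeline section at the bottom.
- Paste the following code into the Script box: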

pipeline {

agent {

kubernetes {

yaml '''

apiVersion: v1

kind: Pod

spec:

containers:

- name: jnlp

image: jenkins/inbound-agent:alpine

tty: true

- name: maven

image: maven:3.8.6-openjdk-8

command: ['cat']

tty: true

'''

}

}

stages {

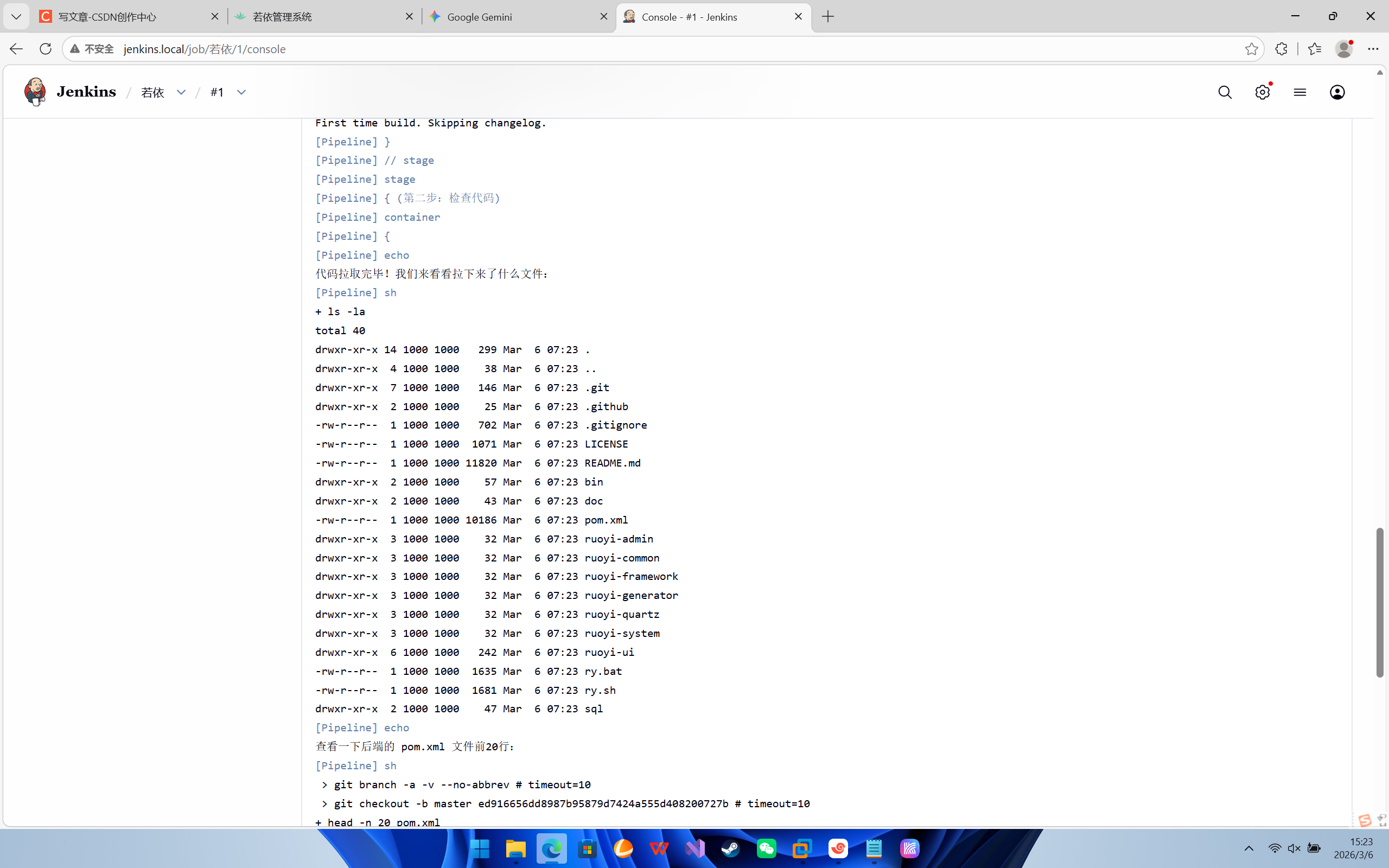

stage('第一步:拉取代码') {

steps {

// 默认在主容器里执行 Git clone

echo "开始从 Gitee 拉取若依官方代码..."

// 这里使用的是公开仓库,不需要配置账号密码

git branch: 'master', url: 'https://gitee.com/y_project/RuoYi-Vue.git'

}

}

stage('第二步:检查代码') {

steps {

container('maven') {

echo "代码拉取完毕!我们来看看拉下来了什么文件:"

sh 'ls -la'

echo "查看一下后端的 pom.xml 文件前20行:"

sh 'head -n 20 pom.xml'

}

}

}

}

}-
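The agent Pod in this pipeline sets no resource requests or limits, so a heavy Maven build competes freely with everything else on its node. A sketch of the same Pod definition with resources on the `maven` container, which could replace the YAML inside the `yaml '''` string; the CPU and memory figures are assumptions to size for your project and nodes.

```yaml
apiVersion: v1
kind: Pod
spec:
  containers:
  - name: jnlp
    image: jenkins/inbound-agent:alpine
    tty: true
  - name: maven
    image: maven:3.8.6-openjdk-8
    command: ['cat']
    tty: true
    # Assumed values: adjust to your build and node capacity
    resources:
      requests:
        cpu: 500m
        memory: 1Gi
      limits:
        cpu: "1"
        memory: 2Gi
```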

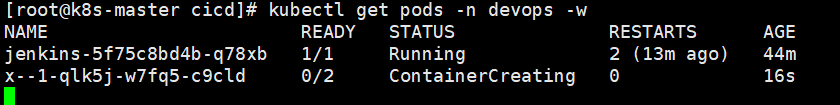

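- On the job page, click Build Now in the left menu.
- A build #1 appears under Build History at the bottom left and blinks while it runs.
- If you now run kubectl get pods -n devops -w on the server, you will see:
  - A new Pod appear automatically in Kubernetes (with a name like 01-k8s-agent-test-1-xxxx).
  - Once this temporary Pod is Running, Jenkins dispatches the pipeline steps to it.
  - When the build finishes, the temporary Pod is deleted automatically, leaving nothing behind.
- In Jenkins, click #1, then Console Output, and check that the file listing and the first 20 lines of pom.xml were printed.

Success.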
