Building an Enterprise-Grade Observability Platform with OpenTelemetry + Prometheus + Grafana + Loki + Tempo + MinIO

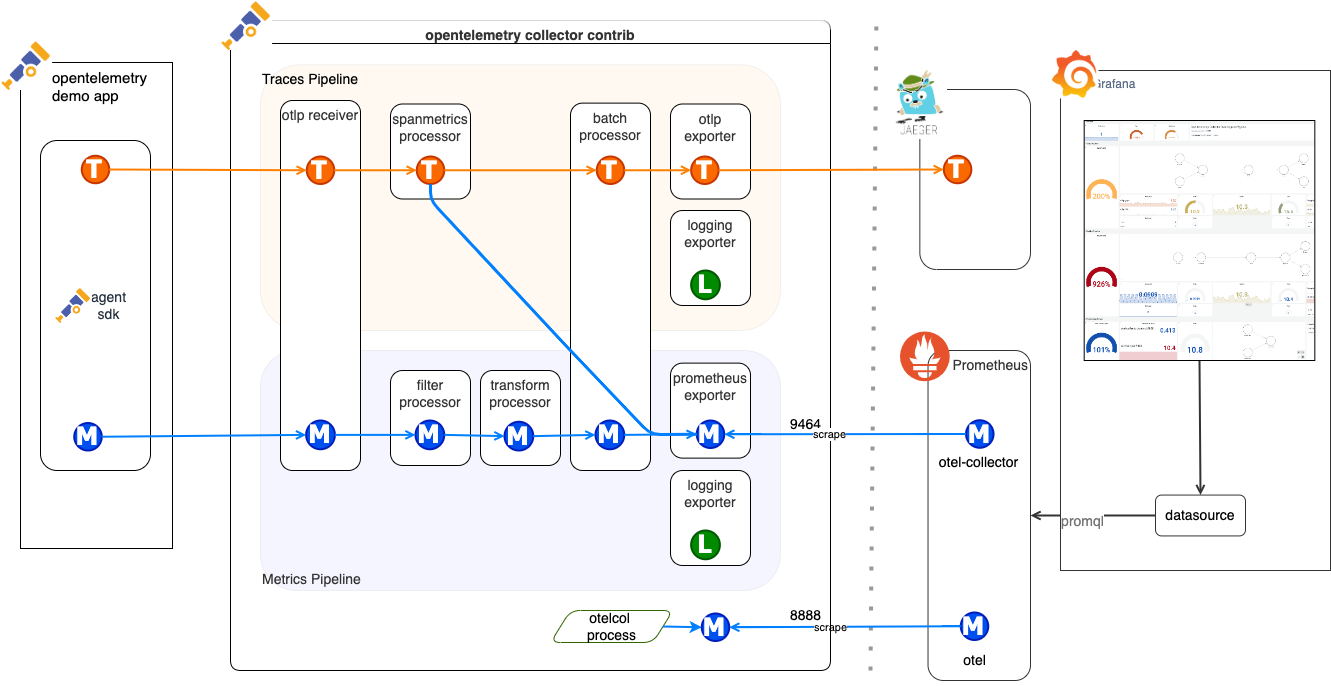

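This article presents a cloud-native observability solution built on OpenTelemetry + Prometheus + Loki + Tempo + Grafana. OpenTelemetry collects application logs, metrics and traces in a unified way; Prometheus stores metrics, Loki aggregates logs, Tempo stores distributed traces, and Grafana provides a single platform for visualization and analysis. The article covers each component's role, the system architecture, the deployment steps, and data collection.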

Solution Overview

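OpenTelemetry + Prometheus + Loki + Tempo + Grafana is a modern, cloud-native observability stack that covers the three core signals (traces, logs and metrics) and gives applications in a microservice architecture a unified observability platform.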

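Components

| Component | Role | Description |
|---|---|---|
| OpenTelemetry | Unified collection | Collects logs, metrics and traces on the application side; SDKs are available for many languages. |
| Prometheus | Metric collection and storage | Actively pulls (scrapes) metrics from applications and systems; works with Alertmanager for alerting. |
| Loki | Log aggregation and storage | A lightweight, Prometheus-like log system that stores structured logs and can be correlated with traces through the trace ID. |
| Tempo | Distributed trace storage | Collects trace data and stores it in object storage (such as MinIO/S3), making it easy to analyze slow requests and bottlenecks along a call chain. |
| Grafana | Visualization and analysis | Presents all three signals in one place with cross-navigation (e.g. logs ⇄ traces), alerting, dashboards and Explore. |
| MinIO | Object storage | A high-performance, open-source object store compatible with the Amazon S3 API, suited to large amounts of unstructured data such as images, videos and log files. It can hold metric, log and trace data; in this setup Tempo and Loki use it as their storage backend. |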

System Architecture

Deploying the Components

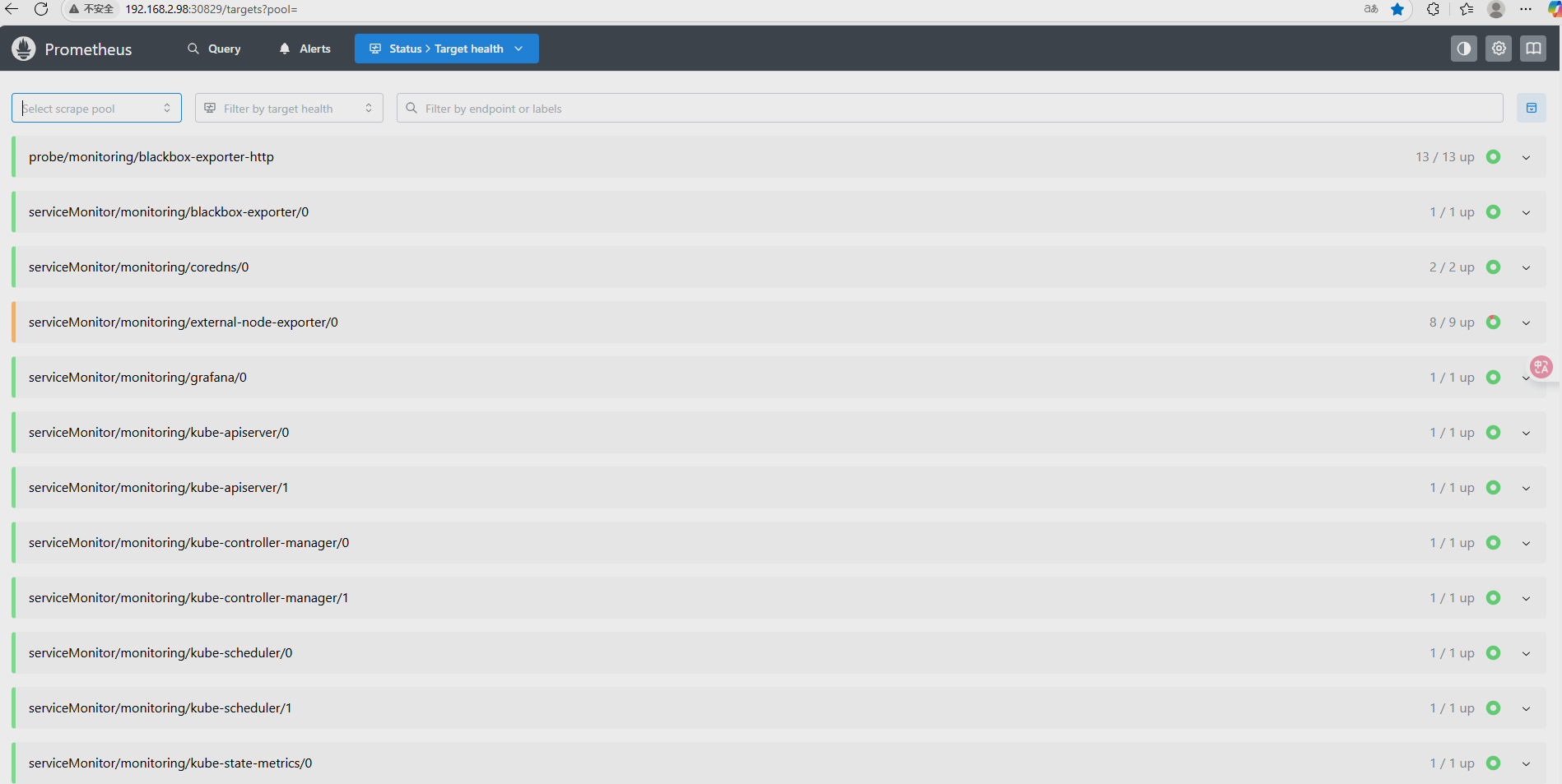

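Deploy Prometheus (kube-prometheus project)

Component overview

| Component | Description |
|---|---|
| Metrics Server | Aggregates cluster resource usage for in-cluster consumers such as kubectl top and the HPA. In kube-prometheus this resource metrics API is served by prometheus-adapter. |
| Prometheus Operator | Deploys and manages Prometheus, Alertmanager and their configuration declaratively through CRDs (Prometheus, ServiceMonitor, PrometheusRule, etc.). |
| Node Exporter | Exposes key hardware and OS metrics for each node. |
| kube-state-metrics | Generates metrics about the state of Kubernetes objects (Deployments, Pods, Nodes, etc.), on which alerting rules can be built. |
| Prometheus | Pulls metrics over HTTP from the apiserver, scheduler, controller-manager, kubelet and other components. |
| Grafana | Visualization and monitoring dashboards. |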

Clone the project locally

git clone https://github.com/prometheus-operator/kube-prometheus.git

Install Prometheus (create the CRDs in manifests/setup first, then the remaining manifests)

[root@devops-master ~]# cd kube-prometheus/

[root@devops-master kube-prometheus]# cd manifests/

[root@devops-master manifests]# cd setup/

[root@devops-master setup]# kubectl create -f .

[root@devops-master setup]# cd ..

[root@devops-master manifests]# kubectl create -f .

Check the Pod status

[root@devops-master manifests]# kubectl get pod -n monitoring

NAME READY STATUS RESTARTS AGE

blackbox-exporter-5bfcbd6c57-kpc9r 3/3 Running 0 58d

grafana-8948d455f-5nfd2 1/1 Running 0 2d

kube-state-metrics-6cd858658b-h62zj 3/3 Running 0 58d

node-exporter-hlbr8 2/2 Running 0 54d

node-exporter-n8v4f 2/2 Running 0 54d

node-exporter-sfbd5 2/2 Running 2 (13d ago) 13d

node-exporter-zqsbs 2/2 Running 0 54d

prometheus-adapter-965fccd76-d57m4 1/1 Running 0 35d

prometheus-adapter-965fccd76-jl8ps 1/1 Running 0 35d

prometheus-k8s-0 2/2 Running 0 2d19h

prometheus-k8s-1 2/2 Running 0 2d19h

prometheus-operator-8b588bff8-gzwkp 2/2 Running 0 35d

PS: If an image cannot be pulled, replace it with a mirror yourself. Data persistence and other details are not covered here.

(渡渡鸟 image mirror site for replacing images: https://docker.aityp.com/)

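Access example

Enable the Prometheus remote-write receiver (Tempo's metrics generator later pushes its metrics to Prometheus through it)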

[root@devops-master manifests]# kubectl get prometheuses.monitoring.coreos.com -n monitoring

NAME VERSION DESIRED READY RECONCILED AVAILABLE AGE

k8s 3.3.1 2 2 True True 34d

[root@devops-master manifests]# kubectl edit prometheuses.monitoring.coreos.com k8s -n monitoring

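# ... (unchanged fields omitted)
spec:
  additionalArgs:                              # extra Prometheus command-line flags
  - name: web.enable-admin-api                 # enable the admin API (optional)
    value: ""
  - name: web.enable-remote-write-receiver     # enable the remote-write receiver
    value: ""
  alerting:
    alertmanagers:
    - apiVersion: v2
      name: alertmanager-main
      namespace: monitoring
      port: web
  enableFeatures:
  - remote-write-receiver                      # legacy feature flag, superseded by --web.enable-remote-write-receiver

# Verify that the flags are in effect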

[root@devops-master manifests]# kubectl get pod -n monitoring prometheus-k8s-0 -o yaml | grep remote-write-receiver

- --enable-feature=remote-write-receiver

- --web.enable-remote-write-receiver
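Recent prometheus-operator versions also expose the remote-write receiver as a first-class field of the Prometheus resource, which avoids passing raw flags through additionalArgs. A minimal sketch, assuming your operator version supports spec.enableRemoteWriteReceiver (check with kubectl explain prometheuses.spec.enableRemoteWriteReceiver):

```yaml
# Fragment of the Prometheus custom resource
# (kubectl edit prometheuses.monitoring.coreos.com k8s -n monitoring).
# Assumes the installed prometheus-operator supports spec.enableRemoteWriteReceiver.
spec:
  enableRemoteWriteReceiver: true   # the operator then adds --web.enable-remote-write-receiver itself
  # When using this field, drop the web.enable-remote-write-receiver entry from additionalArgs:
  # the operator may reject additionalArgs that duplicate flags it already manages.
```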

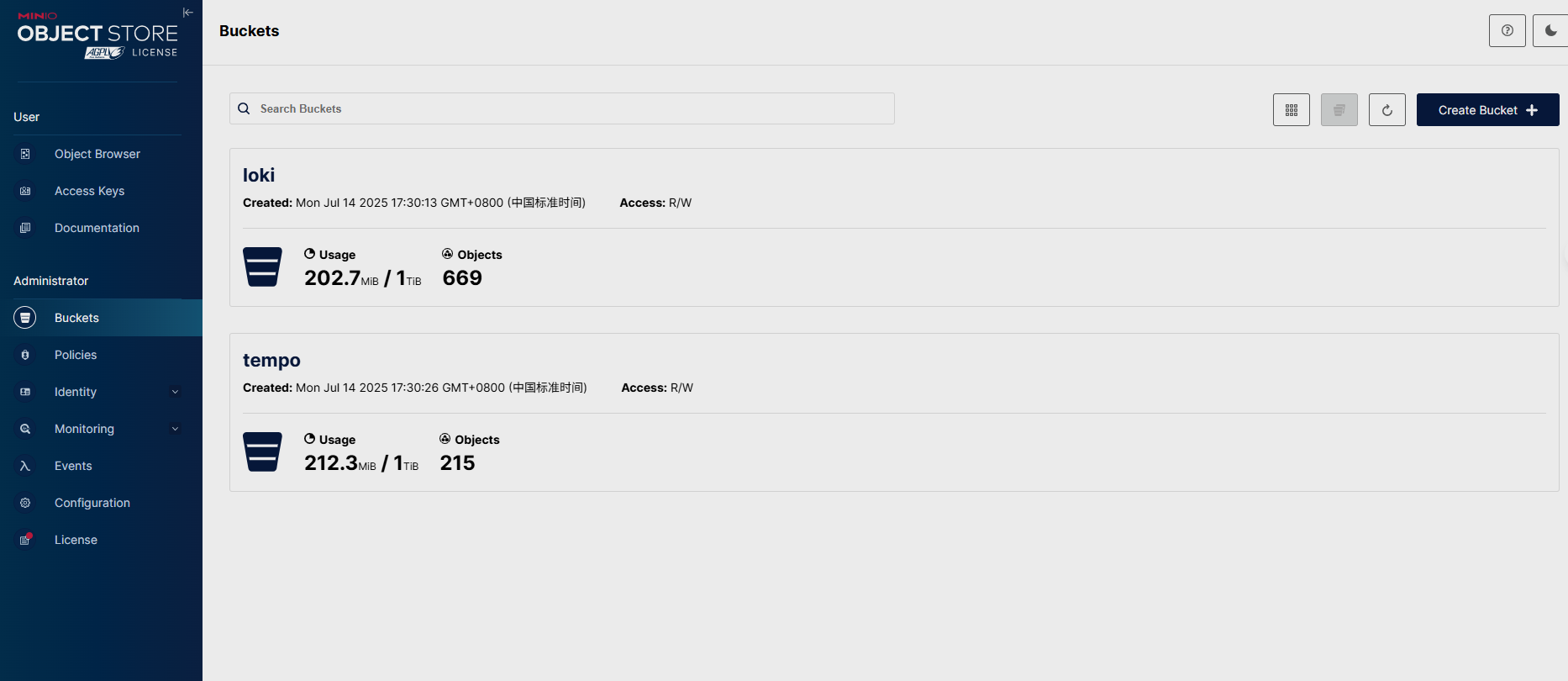

Deploy MinIO

For the complete manifests, see the MinIO Kubernetes documentation (MinIO Chinese docs).

[root@devops-master minio]# cat minio.yaml

kind: PersistentVolumeClaim
apiVersion: v1
metadata:
  name: minio-pvc
  namespace: minio
spec:
  storageClassName: nfs-storage
  accessModes:
  - ReadWriteOnce
  resources:
    requests:
      storage: 50Gi
---
apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: minio
  name: minio
  namespace: minio
spec:
  selector:
    matchLabels:
      app: minio
  template:
    metadata:
      labels:
        app: minio
    spec:
      containers:
      - name: minio
        image: swr.cn-north-4.myhuaweicloud.com/ddn-k8s/quay.io/minio/minio:RELEASE.2025-04-08T15-41-24Z
        command:
        - /bin/bash
        - -c
        args:
        - minio server /data --console-address :9090
        volumeMounts:
        - mountPath: /data
          name: data
        ports:
        - containerPort: 9090
          name: console
        - containerPort: 9000
          name: api
        env:
        - name: MINIO_ROOT_USER        # root user name
          value: "admin"
        - name: MINIO_ROOT_PASSWORD    # root password, at least 8 characters
          value: "minioadmin"
      volumes:
      - name: data
        persistentVolumeClaim:
          claimName: minio-pvc
---
apiVersion: v1
kind: Service
metadata:
  name: minio-service
  namespace: minio
spec:
  type: NodePort
  selector:
    app: minio
  ports:
  - name: console
    port: 9090
    protocol: TCP
    targetPort: 9090
    nodePort: 30333
  - name: api
    port: 9000
    protocol: TCP
    targetPort: 9000
    nodePort: 30222
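The manifest above stores the root credentials as plain text in the Deployment. For anything beyond a test cluster, a common alternative is to keep them in a Secret. A minimal sketch; the Secret name minio-root-credentials is hypothetical, and its keys are named after the environment variables so the container can load them with envFrom:

```yaml
# Hypothetical Secret holding the MinIO root credentials
apiVersion: v1
kind: Secret
metadata:
  name: minio-root-credentials
  namespace: minio
type: Opaque
stringData:
  MINIO_ROOT_USER: admin
  MINIO_ROOT_PASSWORD: minioadmin    # at least 8 characters; use a strong value in production
# In the minio container of the Deployment above, replace the env: list with:
#   envFrom:
#   - secretRef:
#       name: minio-root-credentials
```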

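Create two buckets, tempo and loki (bucket names must be lowercase; they are referenced later in the Tempo and Loki configuration).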
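The buckets can be created by hand in the MinIO console (NodePort 30333). To script this step instead, one option is a one-off Job that runs the MinIO client mc. A sketch, assuming the quay.io/minio/mc image is pullable (replace it with a mirror like the other images) and reusing the root credentials from the manifest above; the Job name minio-create-buckets is hypothetical:

```yaml
apiVersion: batch/v1
kind: Job
metadata:
  name: minio-create-buckets          # hypothetical name
  namespace: minio
spec:
  backoffLimit: 3
  template:
    spec:
      restartPolicy: OnFailure
      containers:
      - name: mc
        image: quay.io/minio/mc:latest  # assumption: replace with a reachable mirror if needed
        command: ["/bin/sh", "-c"]
        args:
        - |
          # register the in-cluster MinIO API under the alias "local", then create both buckets
          mc alias set local http://minio-service.minio.svc:9000 "$MINIO_ROOT_USER" "$MINIO_ROOT_PASSWORD" &&
          mc mb --ignore-existing local/tempo &&
          mc mb --ignore-existing local/loki
        env:
        - name: MINIO_ROOT_USER
          value: "admin"
        - name: MINIO_ROOT_PASSWORD
          value: "minioadmin"
```

The Job only creates the buckets. Tempo and Loki below authenticate with a separate access key/secret key pair, which still has to be created in MinIO.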

Deploy OpenTelemetry

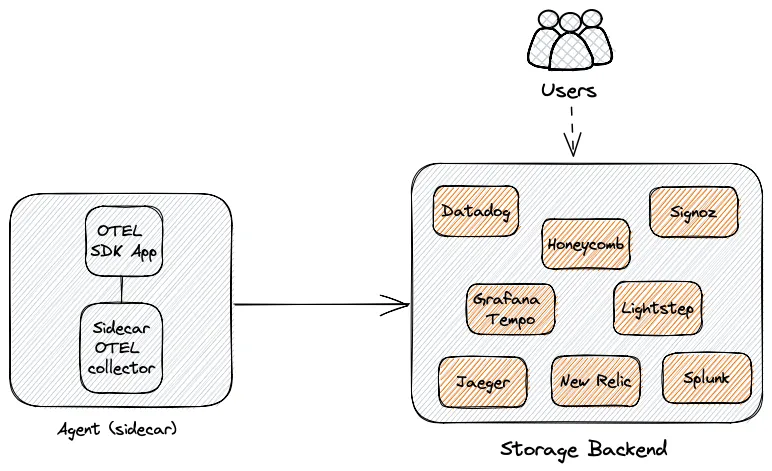

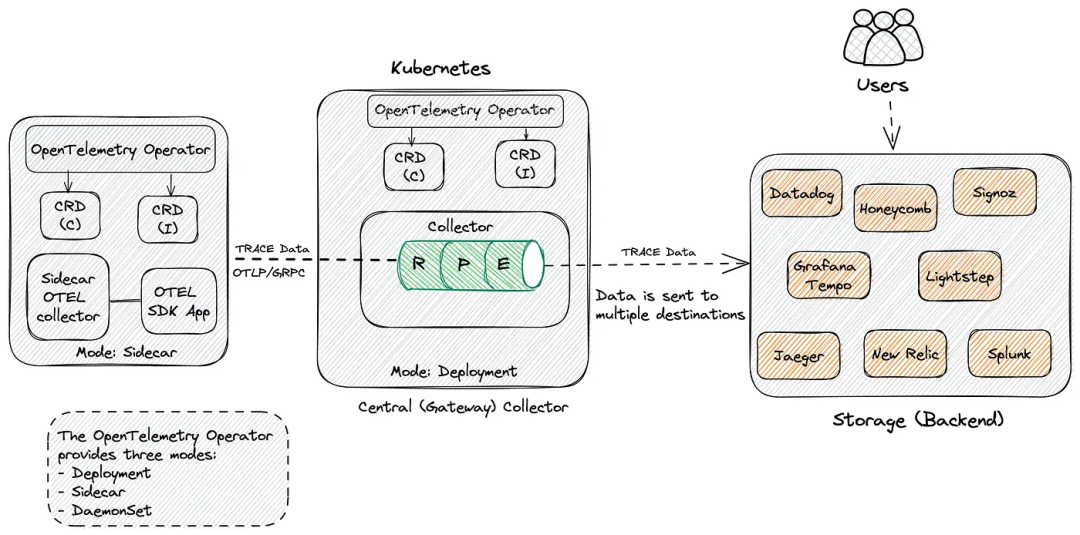

Depending on how it is deployed, the OpenTelemetry Collector runs in either Agent mode or Gateway mode.

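Agent mode

In Agent mode, an application instrumented with OpenTelemetry sends its data to a collector (the agent) running alongside it, which then takes over and processes all of the application's trace data. The collector can be deployed as an agent in a sidecar, and the sidecar can be configured to send data directly to the storage backend.

Gateway mode

In Gateway mode, data is sent on to another OpenTelemetry collector, and this central collector forwards it to the storage backend. The central collector is deployed as a Deployment, StatefulSet or DaemonSet, which brings advantages such as autoscaling.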

Deploying OpenTelemetry with the Operator

The OpenTelemetry Operator is the recommended way to deploy: it makes it easy to deploy and manage OpenTelemetry collectors and can also instrument applications automatically. See OpenTelemetry Operator for Kubernetes (an implementation of a Kubernetes Operator that manages collectors and auto-instrumentation of the workload using OpenTelemetry instrumentation libraries): https://opentelemetry.io/docs/platforms/kubernetes/operator/

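Deploy cert-manager

The Operator uses admission webhooks, called back over HTTP, to validate and mutate resources. Kubernetes requires webhook servers to use TLS, so the Operator needs a certificate for its webhook server; that is why cert-manager has to be installed first.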

# wget https://github.com/cert-manager/cert-manager/releases/latest/download/cert-manager.yaml

# kubectl apply -f cert-manager.yaml

[root@devops-master manifests]# kubectl get pod -n cert-manager

NAME READY STATUS RESTARTS AGE

cert-manager-5c4c74cb68-x9npj 1/1 Running 1 (18d ago) 18d

cert-manager-cainjector-569cc955ff-x9bqj 1/1 Running 2 (18d ago) 18d

cert-manager-webhook-6dbfdbc658-kq46j 1/1 Running 0 18d

Deploy the Operator

Using OpenTelemetry on Kubernetes mainly comes down to deploying OpenTelemetry collectors.

[root@devops-master manifests]# wget https://github.com/open-telemetry/opentelemetry-operator/releases/latest/download/opentelemetry-operator.yaml

[root@devops-master manifests]# kubectl apply -f opentelemetry-operator.yaml

[root@devops-master manifests]# kubectl get pod -n opentelemetry-operator-system

NAME READY STATUS RESTARTS AGE

opentelemetry-operator-controller-manager-96c69b9dd-kdrfx 2/2 Running 0 18d

[root@devops-master manifests]# kubectl get crd |grep opentelemetry

instrumentations.opentelemetry.io 2025-06-28T07:22:26Z

opampbridges.opentelemetry.io 2025-06-28T07:22:26Z

opentelemetrycollectors.opentelemetry.io 2025-06-28T07:26:04Z

targetallocators.opentelemetry.io 2025-06-28T07:22:27Z

Deploy the Collector (center)

Next, deploy a minimal OpenTelemetry Collector that receives OTLP trace data over gRPC or HTTP, applies memory limiting and batching, and prints the result to its log for debugging.

[root@devops-master OpenTelemetry]# cat center-collector.yaml

apiVersion: opentelemetry.io/v1beta1
kind: OpenTelemetryCollector
metadata:
  name: center
  namespace: opentelemetry
spec:
  replicas: 1
  config:
    receivers:
      otlp:
        protocols:
          grpc:
            endpoint: 0.0.0.0:4317
          http:
            endpoint: 0.0.0.0:4318
    processors:
      memory_limiter:                 # refuse data when the collector's memory use gets too high
        check_interval: 1s
        limit_percentage: 80
        spike_limit_percentage: 25
      batch: {}
    exporters:
      debug:
        verbosity: detailed           # print full span details to the collector log
    service:
      telemetry:
        logs:
          level: "debug"
      pipelines:
        traces:
          receivers: [otlp]
          processors: [memory_limiter, batch]   # memory_limiter should come first
          exporters: [debug]

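Application Instrumentation

What is instrumentation

Instrumentation means inserting data-collection code at important points in your application, for example to:

- record when a request starts and ends
- measure database query time
- capture function call chains
- capture exceptions

Once this instrumentation data (traces, metrics, logs) is collected, the monitoring platform shows how the system actually behaves at runtime, which helps with:

- performance analysis
- troubleshooting
- call-chain tracing

In short: "put tracing/monitoring code in the right places."

To instrument an application with OpenTelemetry, go to the OpenTelemetry repository, pick your application's language and follow the instructions. See also the Getting Started guide for developers in the OpenTelemetry Chinese documentation.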

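Automatic instrumentation

Automatic instrumentation is a good option because it is simple and needs few code changes. If you lack the knowledge (or the time) to write tracing code tailored to your application, it is a very good fit.

Languages for which OpenTelemetry supports automatic instrumentation:

- .NET
- Java
- JavaScript
- PHP
- Python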

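Comparison of instrumentation approaches

| | Manual instrumentation | Automatic instrumentation |
|---|---|---|
| Definition | Developers write the collection logic explicitly in the code | An SDK/agent intercepts the application automatically; no business code changes |
| Implementation | Use the OpenTelemetry API, e.g. create a Tracer and start spans by hand | Install an agent (Java agent, Python instrumentation) that detects frameworks and libraries and inserts tracing |
| Control | Very high; instrument anything you like | Lower; limited to what the agent supports |
| Development cost | High; you decide where instrumentation is needed | Low; works almost out of the box |
| Scope | Fine-grained business logic, e.g. specific functions or algorithm internals | Framework level, e.g. HTTP requests, database access, message-queue consumption |
| Performance impact | Controllable; depends on how much you instrument | Possibly slightly higher, because the agent hooks many places |
| Typical use case | Tracing complex business logic | Getting tracing online quickly without code changes |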

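Steps for automatic instrumentation on Kubernetes

- Deploy the OpenTelemetry Operator: it manages Instrumentation and OpenTelemetryCollector resources and handles automatic injection and collection.
- Deploy an OpenTelemetryCollector: it receives the data produced by automatic instrumentation, such as traces.
- Define an Instrumentation object: declare which applications to instrument automatically (e.g. with the Java agent) and which Collector to send the data to.
- Add annotations to your Pods: based on them, the Operator injects the agent and the sidecar automatically.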
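Besides annotating individual Pod templates, the Operator also honours the same annotations on a Namespace, which instruments every Pod created in it. A sketch, assuming the application namespace test and the Instrumentation and sidecar resources defined in this article:

```yaml
apiVersion: v1
kind: Namespace
metadata:
  name: test
  annotations:
    # Inject the Java agent into every new Pod in this namespace,
    # using the Instrumentation resource opentelemetry/java-instrumentation
    instrumentation.opentelemetry.io/inject-java: "opentelemetry/java-instrumentation"
    # Inject the sidecar collector defined by the OpenTelemetryCollector opentelemetry/sidecar
    sidecar.opentelemetry.io/inject: "opentelemetry/sidecar"
```

Injection happens when a Pod is created, so Pods that already exist need a restart (for example kubectl rollout restart) before they pick up the agent and sidecar.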

[root@devops-master OpenTelemetry]# kubectl apply -f java-Instrumentation.yaml

[root@devops-master OpenTelemetry]# cat java-Instrumentation.yaml

apiVersion: opentelemetry.io/v1alpha1
kind: Instrumentation
metadata:
  name: java-instrumentation   # referenced by the Pod annotation as opentelemetry/java-instrumentation
  namespace: opentelemetry
spec:
  propagators:
  - tracecontext
  - baggage
  - b3
  sampler:
    type: always_on
  java:
    image: swr.cn-north-4.myhuaweicloud.com/ddn-k8s/ghcr.io/open-telemetry/opentelemetry-operator/autoinstrumentation-java:2.15.0
    env:
    - name: OTEL_EXPORTER_OTLP_ENDPOINT
      value: http://center-collector.opentelemetry.svc:4318
    - name: OTEL_EXPORTER_OTLP_PROTOCOL
      value: http/protobuf
    - name: OTEL_EXPORTER_OTLP_METRICS_ENDPOINT
      value: http://center-collector.opentelemetry.svc:4318/v1/metrics
    - name: OTEL_LOG_LEVEL
      value: debug
    resources:
      limits:
        cpu: "200m"
        memory: "412Mi"
      requests:
        cpu: "100m"
        memory: "256Mi"
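With OTEL_EXPORTER_OTLP_ENDPOINT pointing at center-collector, the injected Java agent is expected to export directly to the center collector, bypassing the sidecar collector that the Deployment example below also injects. If traffic should go through the sidecar (Agent mode, as described earlier), the agent can target the sidecar instead: it shares the Pod's network namespace and listens on 0.0.0.0:4318. A sketch of the changed env entries only:

```yaml
# Fragment of Instrumentation.spec.java.env: route the agent's OTLP data through
# the sidecar collector in the same Pod instead of the center collector.
env:
- name: OTEL_EXPORTER_OTLP_ENDPOINT
  value: http://localhost:4318              # sidecar's OTLP/HTTP receiver
- name: OTEL_EXPORTER_OTLP_PROTOCOL
  value: http/protobuf
- name: OTEL_EXPORTER_OTLP_METRICS_ENDPOINT
  value: http://localhost:4318/v1/metrics
```

The sidecar configuration in this article defines only a traces pipeline, so metrics and logs sent to it would also need matching pipelines in the sidecar.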

Example: automatic injection into a Java application

kind: Deployment
apiVersion: apps/v1
metadata:
  name: java-test
  namespace: test
  labels:
    app: java-test
spec:
  replicas: 1
  selector:
    matchLabels:
      app: java-test
  template:
    metadata:
      labels:
        app: java-test
      annotations:
        instrumentation.opentelemetry.io/inject-java: "opentelemetry/java-instrumentation"  # inject the Java agent
        sidecar.opentelemetry.io/inject: "opentelemetry/sidecar"  # inject the sidecar collector
    spec:
      volumes:
      - name: host-time
        hostPath:
          path: /etc/localtime
          type: ''
      containers:
      - name: java-test
        image: java-test:v1
        ports:
        - name: java-test
          containerPort: 30105
          protocol: TCP
        resources: {}
        volumeMounts:
        - name: host-time
          readOnly: true
          mountPath: /etc/localtime
        terminationMessagePath: /dev/termination-log
        terminationMessagePolicy: File
        imagePullPolicy: Always
      restartPolicy: Always
      terminationGracePeriodSeconds: 30
      dnsPolicy: ClusterFirst
      serviceAccountName: default
      serviceAccount: default
      securityContext: {}
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxSurge: 0         # do not start extra Pods during an update
      maxUnavailable: 1   # allow at most one Pod to be unavailable
  revisionHistoryLimit: 10
  progressDeadlineSeconds: 600

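Check the auto-injected Pod (the output below was captured in the prod namespace; use the namespace your application runs in)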

[root@devops-master OpenTelemetry]# kubectl get pod -n prod

java-test-b65445b4-njm2t 2/2 Running 0 13h

[root@devops-master OpenTelemetry]# kubectl get opentelemetrycollectors -A

NAMESPACE NAME MODE VERSION READY AGE IMAGE MANAGEMENT

opentelemetry center deployment 0.127.0 1/1 47h ghcr.io/open-telemetry/opentelemetry-collector-releases/opentelemetry-collector:0.127.0 managed

opentelemetry sidecar sidecar 0.127.0 14d managed

[root@devops-master OpenTelemetry]# kubectl get instrumentations -A

NAMESPACE NAME AGE ENDPOINT SAMPLER SAMPLER ARG

opentelemetry java-instrumentation 14d always_on

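Check the sidecar logs: the collector starts normally and connects to the center collector (10.108.209.58:4317), to which it forwards spans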

[root@devops-master OpenTelemetry]# kubectl logs java-test-b65445b4-njm2t -c otc-container -n prod

2025-07-16T14:07:56.713Z info service@v0.127.0/service.go:199 Setting up own telemetry... {"resource": {}}

2025-07-16T14:07:56.714Z info builders/builders.go:26 Development component. May change in the future. {"resource": {}, "otelcol.component.id": "debug", "otelcol.component.kind": "exporter", "otelcol.signal": "traces"}

2025-07-16T14:07:56.714Z debug builders/builders.go:24 Stable component. {"resource": {}, "otelcol.component.id": "otlp", "otelcol.component.kind": "exporter", "otelcol.signal": "traces"}

2025-07-16T14:07:56.714Z debug builders/builders.go:24 Beta component. May change in the future. {"resource": {}, "otelcol.component.id": "batch", "otelcol.component.kind": "processor", "otelcol.pipeline.id": "traces", "otelcol.signal": "traces"}

2025-07-16T14:07:56.714Z debug builders/builders.go:24 Stable component. {"resource": {}, "otelcol.component.id": "otlp", "otelcol.component.kind": "receiver", "otelcol.signal": "traces"}

2025-07-16T14:07:56.714Z debug otlpreceiver@v0.127.0/otlp.go:58 created signal-agnostic logger {"resource": {}, "otelcol.component.id": "otlp", "otelcol.component.kind": "receiver"}

2025-07-16T14:07:56.715Z info service@v0.127.0/service.go:266 Starting otelcol... {"resource": {}, "Version": "0.127.0", "NumCPU": 8}

2025-07-16T14:07:56.715Z info extensions/extensions.go:41 Starting extensions... {"resource": {}}

2025-07-16T14:07:56.715Z info grpc@v1.72.1/clientconn.go:176 [core] original dial target is: "10.108.209.58:4317" {"resource": {}, "grpc_log": true}

2025-07-16T14:07:56.715Z info grpc@v1.72.1/clientconn.go:459 [core] [Channel #1]Channel created {"resource": {}, "grpc_log": true}

2025-07-16T14:07:56.715Z info grpc@v1.72.1/clientconn.go:207 [core] [Channel #1]parsed dial target is: resolver.Target{URL:url.URL{Scheme:"passthrough", Opaque:"", User:(*url.Userinfo)(nil), Host:"", Path:"/10.108.209.58:4317", RawPath:"", OmitHost:false, ForceQuery:false, RawQuery:"", Fragment:"", RawFragment:""}} {"resource": {}, "grpc_log": true}

2025-07-16T14:07:56.715Z info grpc@v1.72.1/clientconn.go:208 [core] [Channel #1]Channel authority set to "10.108.209.58:4317" {"resource": {}, "grpc_log": true}

2025-07-16T14:07:56.716Z info grpc@v1.72.1/resolver_wrapper.go:210 [core] [Channel #1]Resolver state updated: {

"Addresses": [

{

"Addr": "10.108.209.58:4317",

"ServerName": "",

"Attributes": null,

"BalancerAttributes": null,

"Metadata": null

}

],

"Endpoints": [

{

"Addresses": [

{

"Addr": "10.108.209.58:4317",

"ServerName": "",

"Attributes": null,

"BalancerAttributes": null,

"Metadata": null

}

],

"Attributes": null

}

],

"ServiceConfig": null,

"Attributes": null

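Data Collection (Collector)

The OpenTelemetry Collector is a one-stop service for receiving, processing and exporting telemetry (traces, metrics, logs). Its configuration consists of four core sections:

- receivers (receive data)
- processors (process data)
- exporters (export data)
- service (the workflow: which receivers, processors and exporters form each pipeline)

Collector configuration explained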

[root@devops-master OpenTelemetry]# cat sidecar-collector.yaml

apiVersion: opentelemetry.io/v1beta1
kind: OpenTelemetryCollector       # resource type: OpenTelemetryCollector
metadata:
  name: sidecar                    # collector name
  namespace: opentelemetry
spec:
  mode: sidecar                    # run as a sidecar (in the same Pod as the application container)
  config:                          # collector configuration (structured YAML)
    receivers:
      otlp:                        # receive data over OTLP
        protocols:
          grpc:
            endpoint: 0.0.0.0:4317 # enable gRPC
          http:
            endpoint: 0.0.0.0:4318 # enable HTTP
    processors:
      batch: {}                    # batch data before sending to improve throughput
    exporters:
      debug: {}                    # print data to stdout (for debugging)
      otlp:                        # OTLP exporter that forwards to the center collector
        endpoint: "10.108.209.58:4317"  # replace with your center collector's address
        tls:
          insecure: true           # plaintext gRPC, no TLS
    service:
      telemetry:
        logs:
          level: "debug"           # collector's own log level (debug makes troubleshooting easier)
      pipelines:
        traces:                    # trace pipeline
          receivers: [otlp]        # receive traces via OTLP
          processors: [batch]      # batch them
          exporters: [debug, otlp] # export to the log (debug) and to the center collector (otlp)

For all configuration options, see Configuration in the OpenTelemetry documentation; they are not covered in detail here.

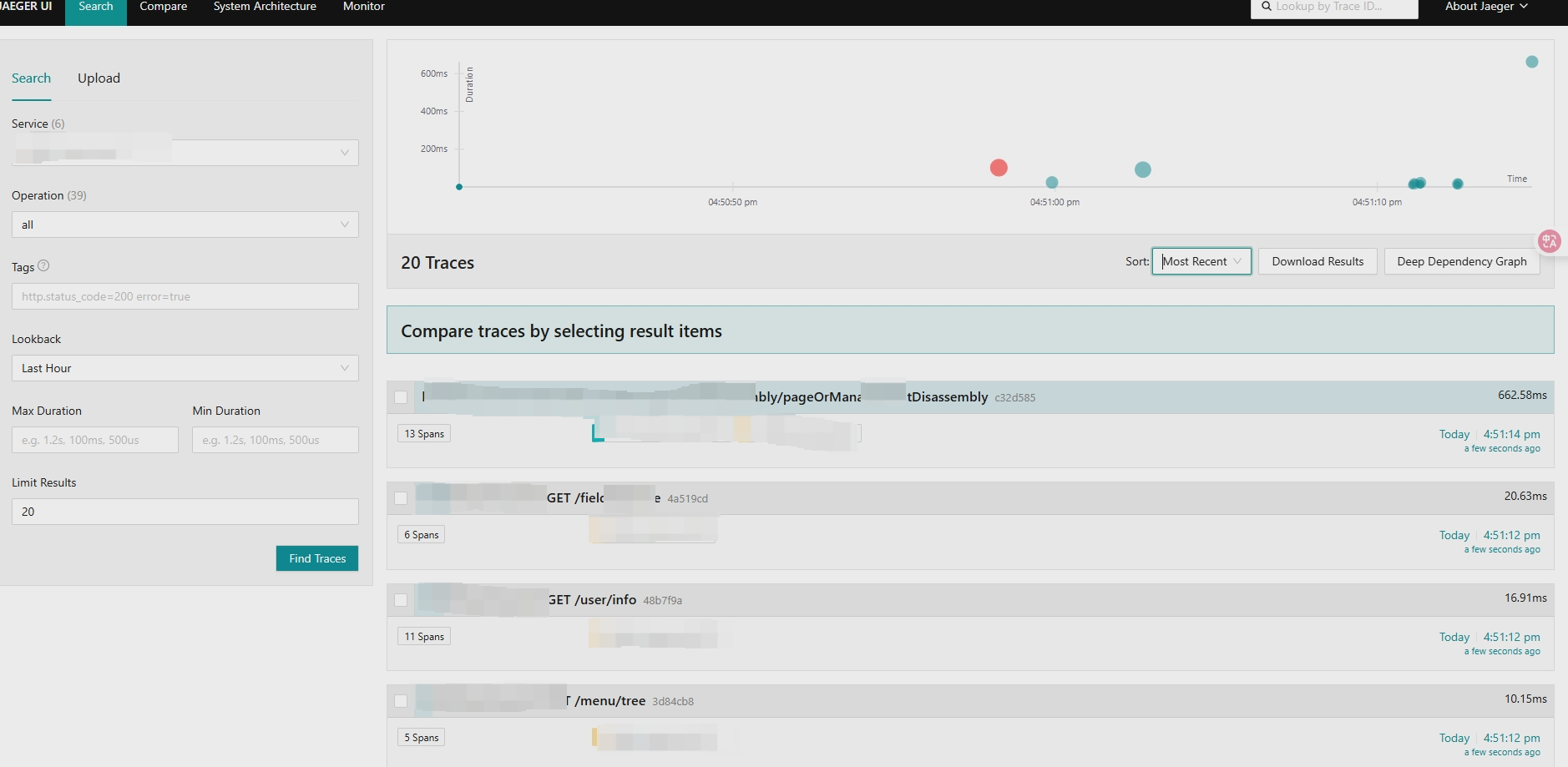

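Deploy Jaeger

About Jaeger

Jaeger is a distributed tracing system originally developed at Uber and later donated to the CNCF, used mainly for tracing calls across microservices. Its strengths are high performance (it can handle large volumes of trace data), flexible deployment (single node or distributed), easy integration (OpenTelemetry compatible) and strong visualization, which helps locate performance bottlenecks and failures quickly.

Deploy Jaeger (all-in-one)

Jaeger can also be deployed on Kubernetes with the Jaeger Operator (see https://github.com/jaegertracing/jaeger-operator?tab=readme-ov-file#using-jaeger-with-in-memory-storage). Here a plain all-in-one Deployment with in-memory storage is used instead: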

apiVersion: apps/v1
kind: Deployment
metadata:
  name: jaeger
  namespace: opentelemetry
  labels:
    app: jaeger
spec:
  replicas: 1
  selector:
    matchLabels:
      app: jaeger
  template:
    metadata:
      labels:
        app: jaeger
    spec:
      containers:
      - name: jaeger
        image: swr.cn-north-4.myhuaweicloud.com/ddn-k8s/docker.io/jaegertracing/all-in-one:latest
        args:
        - "--collector.otlp.enabled=true"                 # enable the OTLP receiver
        - "--collector.otlp.grpc.host-port=0.0.0.0:4317"  # OTLP gRPC listen address
        resources:
          limits:
            memory: "1Gi"
            cpu: "1"
        ports:
        - containerPort: 6831
          protocol: UDP
        - containerPort: 16686
          protocol: TCP
        - containerPort: 4317
          protocol: TCP
---
apiVersion: v1
kind: Service
metadata:
  name: jaeger
  namespace: opentelemetry
  labels:
    app: jaeger
spec:
  selector:
    app: jaeger
  type: NodePort
  ports:
  - name: jaeger-udp
    port: 6831
    targetPort: 6831
    protocol: UDP
  - name: jaeger-ui
    port: 16686
    targetPort: 16686
    protocol: TCP
  - name: otlp-grpc
    port: 4317
    targetPort: 4317
    protocol: TCP

Check the Pods and Services

[root@devops-master OpenTelemetry]# kubectl get pod -n opentelemetry

NAME READY STATUS RESTARTS AGE

center-collector-7bfdd46946-ff8bm 1/1 Running 0 22d

jaeger-957466498-br2dx 1/1 Running 47 (47m ago) 22d

tempo-0 1/1 Running 0 3d3h

[root@devops-master OpenTelemetry]# kubectl get svc -n opentelemetry

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

center-collector ClusterIP 10.101.75.30 <none> 4317/TCP,4318/TCP,8889/TCP 24d

center-collector-headless ClusterIP None <none> 4317/TCP,4318/TCP,8889/TCP 24d

center-collector-monitoring ClusterIP 10.103.86.196 <none> 8888/TCP 24d

jaeger NodePort 10.98.236.160 <none> 6831:31300/UDP,16686:30382/TCP,4317:31183/TCP 22d

sidecar-collector ClusterIP 10.108.153.75 <none> 4317/TCP,4318/TCP 24d

sidecar-collector-headless ClusterIP None <none> 4317/TCP,4318/TCP 24d

sidecar-collector-monitoring ClusterIP 10.106.11.171 <none> 8888/TCP 24d

tempo NodePort 10.96.15.48 <none> 6831:30127/UDP,6832:30357/UDP,3200:30568/TCP,14268:32263/TCP,14250:32187/TCP,9411:32636/TCP,55680:30342/TCP,55681:31572/TCP,4317:32209/TCP,4318:30289/TCP,55678:32120/TCP 8d
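The all-in-one image keeps traces in memory, so they are lost on every restart and memory use grows with traffic until the container reaches its 1Gi limit; the 47 restarts of the jaeger Pod in the output above may be related. Jaeger v1's in-memory storage can be capped with --memory.max-traces. A sketch of the container args with an illustrative limit:

```yaml
# Fragment of the jaeger container in the Deployment above
args:
- "--collector.otlp.enabled=true"
- "--collector.otlp.grpc.host-port=0.0.0.0:4317"
- "--memory.max-traces=50000"   # illustrative value: cap the number of traces kept in memory
```

Because the latest tag can move to a newer release over time, pinning a specific all-in-one version keeps these flags stable.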

Configure the Collector (send traces to Jaeger)

[root@devops-master OpenTelemetry]# cat center-collector.yaml

apiVersion: opentelemetry.io/v1beta1
kind: OpenTelemetryCollector
metadata:
  name: center
  namespace: opentelemetry
spec:
  replicas: 1
  config:
    receivers:
      otlp:
        protocols:
          grpc:
            endpoint: 0.0.0.0:4317
          http:
            endpoint: 0.0.0.0:4318
    processors:
      batch: {}
    exporters:
      debug:
        verbosity: detailed             # more debug output for troubleshooting
      otlp:
        endpoint: "10.98.236.160:4317"  # Jaeger (ClusterIP of the jaeger Service)
        tls:
          insecure: true
      prometheus:
        endpoint: "0.0.0.0:8889"        # Prometheus endpoint exposed by the Collector
        send_timestamps: true           # include timestamps for better compatibility
    service:
      pipelines:
        traces:
          receivers: [otlp]
          processors: [batch]
          exporters: [debug, otlp]
        metrics:
          receivers: [otlp]
          processors: [batch]
          exporters: [debug, prometheus]  # export to Prometheus
        logs:
          receivers: [otlp]
          processors: [batch]
          exporters: [debug]
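The prometheus exporter only exposes metrics on port 8889 of the center-collector Service; Prometheus still has to scrape it. With kube-prometheus, one way to do that is a ServiceMonitor. A sketch in which the resource name, the label selector and the port name are assumptions: check the real values with kubectl get svc center-collector -n opentelemetry -o yaml and adjust.

```yaml
apiVersion: monitoring.coreos.com/v1
kind: ServiceMonitor
metadata:
  name: center-collector                 # hypothetical name
  namespace: monitoring
spec:
  namespaceSelector:
    matchNames:
    - opentelemetry                      # namespace of the center collector Service
  selector:
    matchLabels:
      # assumption: label set by the OpenTelemetry Operator on the collector Service
      app.kubernetes.io/name: center-collector
  endpoints:
  - port: prometheus                     # assumption: name of the 8889/TCP port on the Service
    interval: 30s
```

Two things to check: kube-prometheus by default grants the prometheus-k8s ServiceAccount read access to Services and Endpoints only in a few namespaces (such as default, kube-system and monitoring), so a Role and RoleBinding in opentelemetry may be needed; and if the headless Service carries the same labels, narrow the selector to avoid duplicate targets.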

Check whether trace data arrives in Jaeger

PS: Tempo itself does not provide alerting, so Jaeger is deployed here mainly for error alerting.

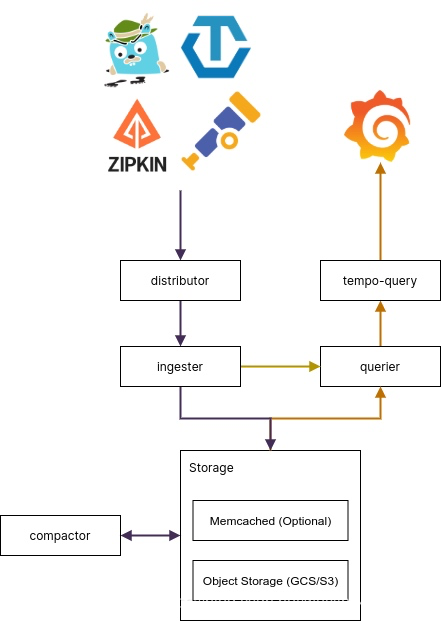

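Deploy Tempo

About Tempo

Grafana Tempo is an open-source, easy-to-use, high-scale distributed tracing backend. It is cost-efficient, needs only object storage to operate, and integrates deeply with Grafana, Prometheus and Loki. Tempo works with the common open-source tracing protocols, including Jaeger, Zipkin and OpenTelemetry. Early versions supported only lookup by trace ID and relied on logs and metrics (exemplars) for discovery; since Tempo 2.0, traces can also be searched with TraceQL.

Deploy Tempo

Installation with Helm is recommended. Two charts are provided: tempo-distributed and tempo. Generally the tempo chart is used for local testing, while production environments can use Tempo's microservices deployment via tempo-distributed. The monolithic mode (tempo chart) is used as the example below; see https://github.com/grafana/helm-charts/tree/main/charts/tempo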

[root@devops-master OpenTelemetry]# helm repo add grafana https://grafana.github.io/helm-charts

[root@devops-master OpenTelemetry]# helm pull grafana/tempo --untar

[root@devops-master OpenTelemetry]# cd tempo

[root@devops-master OpenTelemetry]# ls

Chart.yaml README.md README.md.gotmpl templates values.yaml

[root@devops-master OpenTelemetry]# vim values.yaml

tempo:
  metricsGenerator:             # enable Tempo's metrics generator
    enabled: true
    remoteWriteUrl: "http://prometheus-k8s.monitoring.svc:9090/api/v1/write"  # Prometheus remote-write endpoint
  ingester: {}
  querier: {}
  queryFrontend: {}
  retention: 48h                # keep traces for 2 days
  overrides:
    defaults:
      metrics_generator:
        processors:
        - service-graphs
        - span-metrics
  storage:
    trace:
      backend: s3
      s3:
        bucket: tempo                                # MinIO bucket name
        endpoint: minio-service.minio.svc:9000       # MinIO API address
        access_key: A5ouP9ax80e88PIr49GW             # access key
        secret_key: sm1Vocs462mhb35l3rU7czhbWiyXjkMkH9lVceq3  # secret key
        insecure: true                               # plain HTTP (MinIO is served without TLS here)

# Check the Pods

[root@devops-master ~]# kubectl get pod -n opentelemetry

NAME READY STATUS RESTARTS AGE

center-collector-7bfdd46946-ff8bm 1/1 Running 0 22d

jaeger-957466498-br2dx 1/1 Running 47 (8m24s ago) 22d

tempo-0 1/1 Running 0 3d3h

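Configure the Collector (send traces to Tempo)

Tempo receives OTLP data on port 4317 (gRPC) and 4318 (HTTP). Update the OpenTelemetryCollector configuration so that trace data is sent to Tempo's OTLP gRPC port (exposed here as NodePort 32209).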

[root@devops-master OpenTelemetry]# cat center-collector.yaml

apiVersion: opentelemetry.io/v1beta1
kind: OpenTelemetryCollector
metadata:
  name: center
  namespace: opentelemetry
spec:
  replicas: 1
  config:
    receivers:
      otlp:
        protocols:
          grpc:
            endpoint: 0.0.0.0:4317
          http:
            endpoint: 0.0.0.0:4318
    processors:
      batch: {}
    exporters:
      debug:
        verbosity: detailed                    # verbose output for debugging
      otlp:
        endpoint: "192.168.0.89:32209"         # Tempo (OTLP gRPC NodePort)
        tls:
          insecure: true
      otlp/jaeger:
        endpoint: "http://192.168.0.89:31183"  # Jaeger (OTLP gRPC NodePort)
        tls:
          insecure: true
      prometheus:
        endpoint: "0.0.0.0:8889"               # Prometheus endpoint
        send_timestamps: true                  # include timestamps
    service:
      telemetry:
        logs:
          level: "debug"
      pipelines:
        traces:
          receivers: [otlp]
          processors: [batch]
          exporters: [debug, otlp, otlp/jaeger]
        metrics:
          receivers: [otlp]
          processors: [batch]
          exporters: [debug, prometheus]
        logs:
          receivers: [otlp]
          processors: [batch]
          exporters: [debug]                   # Tempo accepts only traces; logs go to Loki in the next step
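The exporters above reach Tempo and Jaeger through NodePorts on 192.168.0.89, which ties the configuration to one node's IP. Since the collector runs in the same cluster, the Services' DNS names can be used instead. A sketch based on the Service names in the kubectl get svc -n opentelemetry output earlier (tempo and jaeger, both in the opentelemetry namespace); the debug and prometheus exporters stay as they are:

```yaml
# Fragment of spec.config.exporters in center-collector.yaml
exporters:
  otlp:
    endpoint: tempo.opentelemetry.svc:4317     # Tempo OTLP gRPC (Service port 4317)
    tls:
      insecure: true                           # plaintext gRPC
  otlp/jaeger:
    endpoint: jaeger.opentelemetry.svc:4317    # Jaeger OTLP gRPC (Service port 4317)
    tls:
      insecure: true                           # plaintext gRPC
```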

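Deploy Loki

Like Tempo, Loki offers several deployment modes: monolithic, microservices and simple scalable (see Helm chart components in the Grafana Loki documentation). The simple scalable mode is used as the example here. For configuring Loki with MinIO object storage, see How To Deploy Grafana Loki and Save Data to MinIO.

Get the chart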

[root@devops-master minio] # helm repo add grafana https://grafana.github.io/helm-charts

"grafana" has been added to your repositories

[root@devops-master minio]# helm pull grafana/loki --untar

[root@devops-master minio]# ls

charts Chart.yaml README.md requirements.lock requirements.yaml templates values.yaml

Edit the configuration file

# vim values.yaml

loki:
  # Configures the readiness probe for all of the Loki pods
  readinessProbe:
    httpGet:
      path: /ready
      port: http-metrics
    initialDelaySeconds: 30
    timeoutSeconds: 1
  image:
    # -- The Docker registry
    registry: swr.cn-north-4.myhuaweicloud.com/ddn-k8s/docker.io
    # -- Docker image repository
    repository: grafana/loki
    # -- Overrides the image tag whose default is the chart's appVersion
    tag: 3.5.1
  commonConfig:
    path_prefix: /var/loki
    replication_factor: 1
    compactor_address: '{{ include "loki.compactorAddress" . }}'
  # -- Storage config. Providing this will automatically populate all necessary storage configs in the templated config.
  # -- In case of using thanos storage, enable use_thanos_objstore and the configuration should be done inside the object_store section.
  schemaConfig:
    # -- a real Loki install requires a proper schemaConfig defined above this, however for testing or playing around
    # you can enable useTestSchema
    #useTestSchema: false
    # testSchemaConfig:
    configs:
    - from: "2024-04-01"
      store: tsdb
      object_store: s3
      schema: v13
      index:
        prefix: index_
        period: 24h
  # ... (other loki.* values left at their defaults)
  storage:
    # Loki requires a bucket for chunks and the ruler. GEL requires a third bucket for the admin API.
    # Please provide these values if you are using object storage.
    bucketNames:
      chunks: loki
      ruler: loki
      admin: loki
    type: s3
    s3:
      s3: s3://A5ouP9ax80e88PIr49GW:sm1Vocs462mhb35l3rU7czhbWiyXjkMkH9lVceq3@minio-service.minio.svc:9000
      endpoint: minio-service.minio.svc:9000
      #region: null
      secretAccessKey: sm1Vocs462mhb35l3rU7czhbWiyXjkMkH9lVceq3
      accessKeyId: A5ouP9ax80e88PIr49GW
      #signatureVersion: null
      s3ForcePathStyle: true   # path-style bucket URLs (host:9000/bucket), as served by this MinIO
minio:
  enabled: false   # use the MinIO deployed earlier instead of the chart's bundled one
  replicas: 1
# SingleBinary<->SimpleScalable is a migration mode that runs both; for a pure simple scalable
# deployment use SimpleScalable and set singleBinary.replicas to 0
deploymentMode: SingleBinary<->SimpleScalable
singleBinary:
  replicas: 1
  persistence:
    storageClass: nfs-storage
    accessModes:
    - ReadWriteOnce
    size: 30Gi
backend:
  replicas: 1
  podManagementPolicy: "Parallel"
  persistence:
    # -- Enable volume claims in pod spec
    volumeClaimsEnabled: true
    # -- Parameters used for the `data` volume when volumeClaimEnabled if false
    dataVolumeParameters:
      emptyDir: {}
    # -- Enable StatefulSetAutoDeletePVC feature
    enableStatefulSetAutoDeletePVC: true
    # -- Size of persistent disk
    size: 10Gi
    # -- Storage class to be used.
    # If defined, storageClassName: <storageClass>.
    # If set to "-", storageClassName: "", which disables dynamic provisioning.
    # If empty or set to null, no storageClassName spec is
    # set, choosing the default provisioner (gp2 on AWS, standard on GKE, AWS, and OpenStack).
    storageClass: nfs-storage
    # -- Selector for persistent disk
    selector: null
    # -- Annotations for volume claim
    annotations: {}
read:
  replicas: 1
write:
  replicas: 1
  podManagementPolicy: "Parallel"
  persistence:
    # -- Enable volume claims in pod spec
    volumeClaimsEnabled: true
    # -- Parameters used for the `data` volume when volumeClaimEnabled if false
    dataVolumeParameters:
      emptyDir: {}
    # -- Enable StatefulSetAutoDeletePVC feature
    enableStatefulSetAutoDeletePVC: false
    # -- Size of persistent disk
    size: 10Gi
    # -- Storage class to be used.
    # If defined, storageClassName: <storageClass>.
    # If set to "-", storageClassName: "", which disables dynamic provisioning.
    # If empty or set to null, no storageClassName spec is
    # set, choosing the default provisioner (gp2 on AWS, standard on GKE, AWS, and OpenStack).
    storageClass: nfs-storage
    # -- Selector for persistent disk
    selector: null
    # -- Annotations for volume claim
    annotations: {}
# Zero out replica counts of other deployment modes
ingester:
  replicas: 0
querier:
  replicas: 0
queryFrontend:
  replicas: 0
queryScheduler:
  replicas: 0
distributor:
  replicas: 0
compactor:
  replicas: 0
indexGateway:
  replicas: 0
bloomCompactor:
  replicas: 0
bloomGateway:
  replicas: 0
lokiCanary:
  image:
    # -- The Docker registry
    registry: swr.cn-north-4.myhuaweicloud.com/ddn-k8s/docker.io
    # -- Docker image repository
    repository: grafana/loki-canary
    # -- Overrides the image tag whose default is the chart's appVersion
    tag: 3.5.0
memcached:
  # -- Enable the built in memcached server provided by the chart
  enabled: true
  image:
    # -- Memcached Docker image repository
    repository: swr.cn-north-4.myhuaweicloud.com/ddn-k8s/docker.io/memcached
    # -- Memcached Docker image tag
    tag: 1.6.38-alpine
memcachedExporter:
  # -- Whether memcached metrics should be exported
  enabled: true
  image:
    repository: swr.cn-north-4.myhuaweicloud.com/ddn-k8s/docker.io/prom/memcached-exporter
    tag: v0.15.2
    pullPolicy: IfNotPresent
gateway:
  image:
    # -- The Docker registry for the gateway image
    registry: swr.cn-north-4.myhuaweicloud.com/ddn-k8s/docker.io
    # -- The gateway image repository
    repository: nginxinc/nginx-unprivileged
    # -- The gateway image tag
    tag: 1.27-alpine
sidecar:
  image:
    # -- The Docker registry and image for the k8s sidecar
    repository: swr.cn-north-4.myhuaweicloud.com/ddn-k8s/docker.io/kiwigrid/k8s-sidecar
    # -- Docker image tag
    tag: 1.30.5

Configure the Collector to export logs to Loki

[root@prod-master OpenTelemetry]# cat center-collector.yaml

apiVersion: opentelemetry.io/v1beta1
kind: OpenTelemetryCollector
metadata:
  name: center
  namespace: opentelemetry
spec:
  replicas: 1
  config:
    receivers:
      otlp:                              # receive OTLP data from clients (e.g. applications)
        protocols:
          grpc:
            endpoint: 0.0.0.0:4317       # gRPC listen address and port
          http:
            endpoint: 0.0.0.0:4318       # HTTP listen address and port
    processors:
      batch: {}                          # batch processor: groups data to reduce the number of outgoing requests
    exporters:
      debug:
        verbosity: detailed              # debug exporter: prints everything received in full; usually for test environments
      otlp:
        endpoint: "192.168.0.89:32209"   # Tempo address for traces
        tls:
          insecure: true                 # plaintext gRPC without TLS (for testing or non-production use)
      otlphttp/loki:
        endpoint: "http://192.168.0.89:30107/otlp"  # Loki's OTLP HTTP endpoint (the exporter appends /v1/logs)
        tls:
          insecure: true                 # no TLS (plain HTTP)
      otlp/jaeger:
        endpoint: "http://192.168.0.89:31183"       # Jaeger address for OTLP traces
        tls:
          insecure: true                 # plaintext gRPC without TLS
      prometheus:
        endpoint: "0.0.0.0:8889"         # HTTP port from which Prometheus scrapes metrics
        send_timestamps: true            # include timestamps so Prometheus stores samples with their original time
    service:
      telemetry:
        logs:
          level: "debug"                 # Collector's own log level
      pipelines:
        traces:
          receivers: [otlp]              # receive trace data
          processors: [batch]            # batching
          exporters: [debug, otlp, otlp/jaeger]  # export to the debug log, Tempo and Jaeger
        metrics:
          receivers: [otlp]              # receive metric data
          processors: [batch]            # batching
          exporters: [debug, prometheus] # export to the debug log and Prometheus
        logs:
          receivers: [otlp]              # receive log data (e.g. structured logs)
          processors: [batch]            # batch logs before sending
          exporters: [otlphttp/loki]     # export logs to Loki for aggregation and visualization

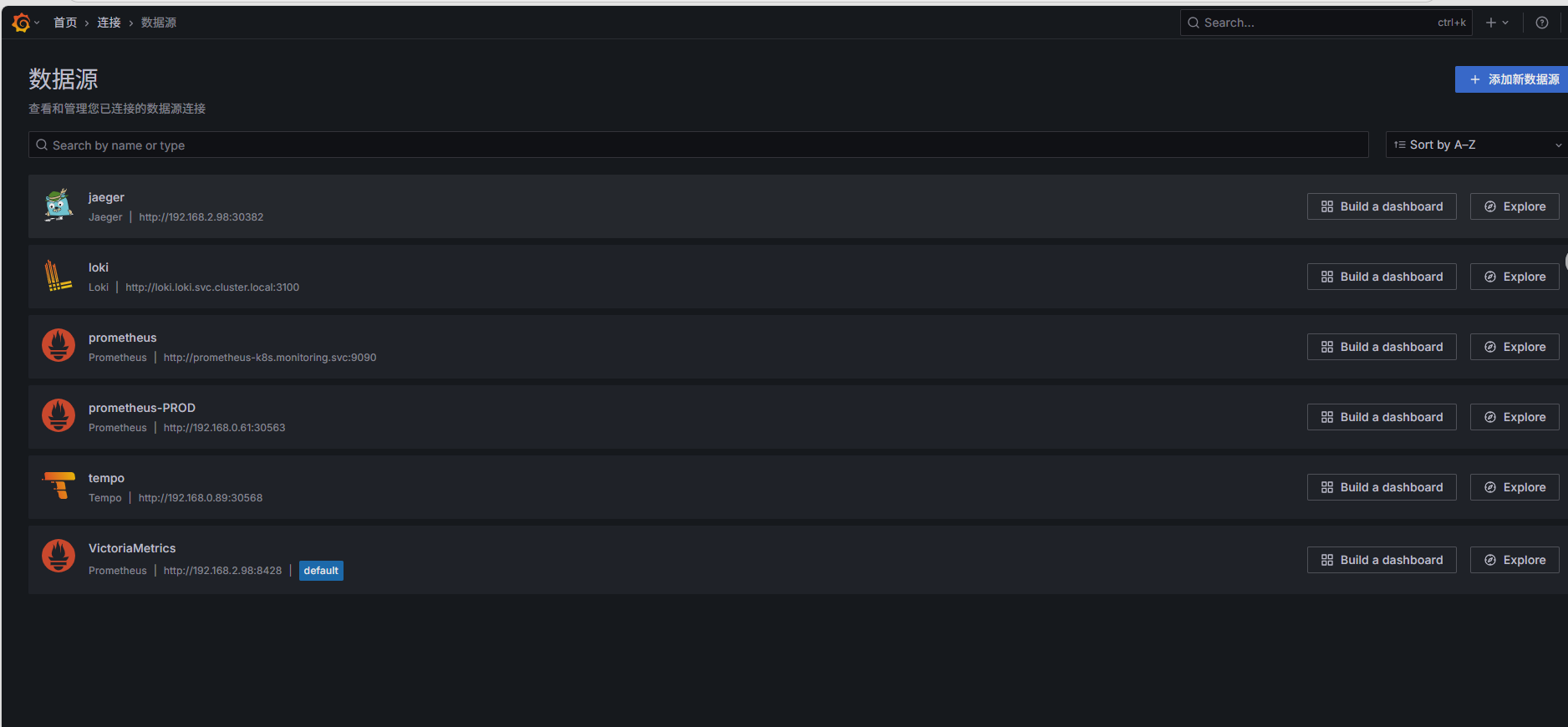

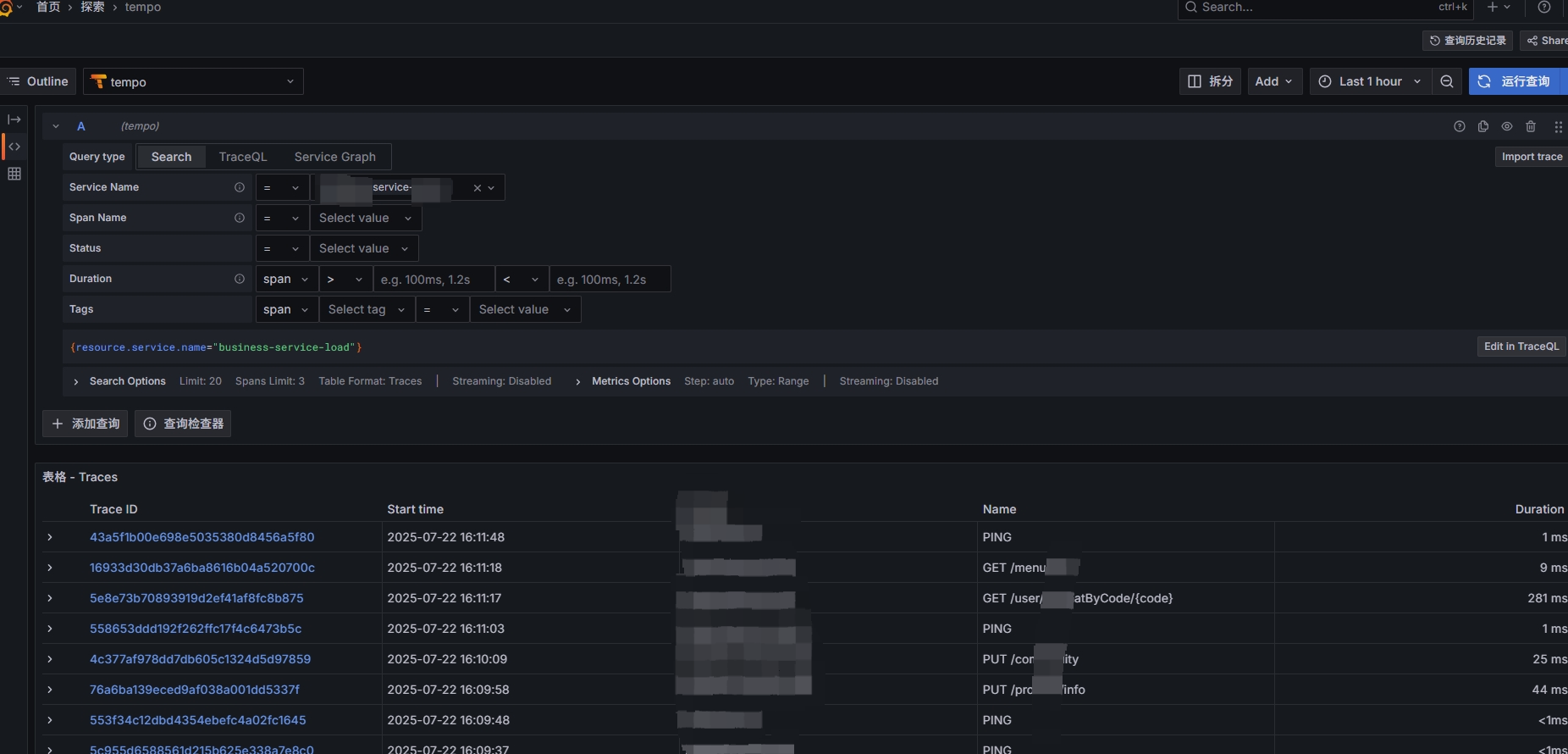

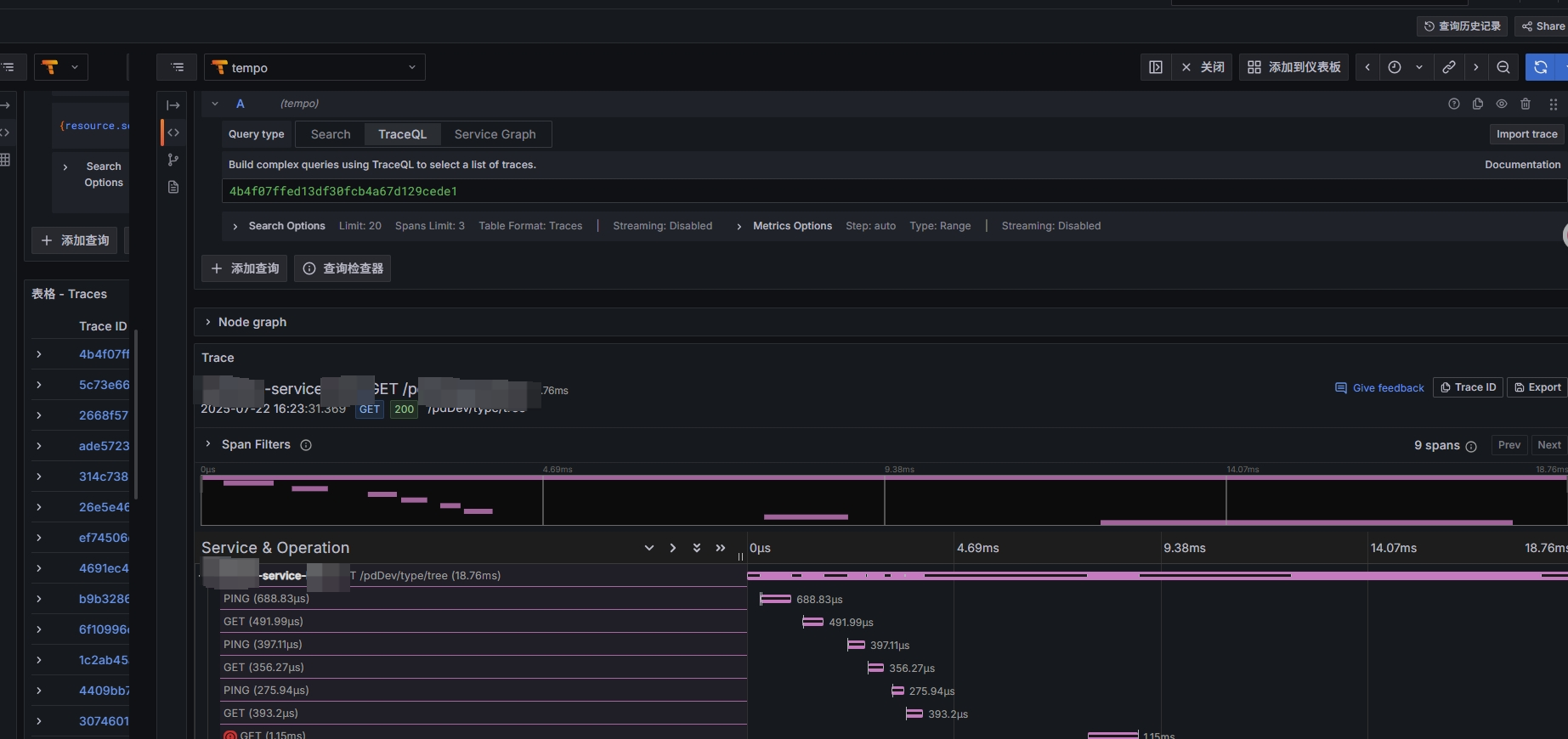

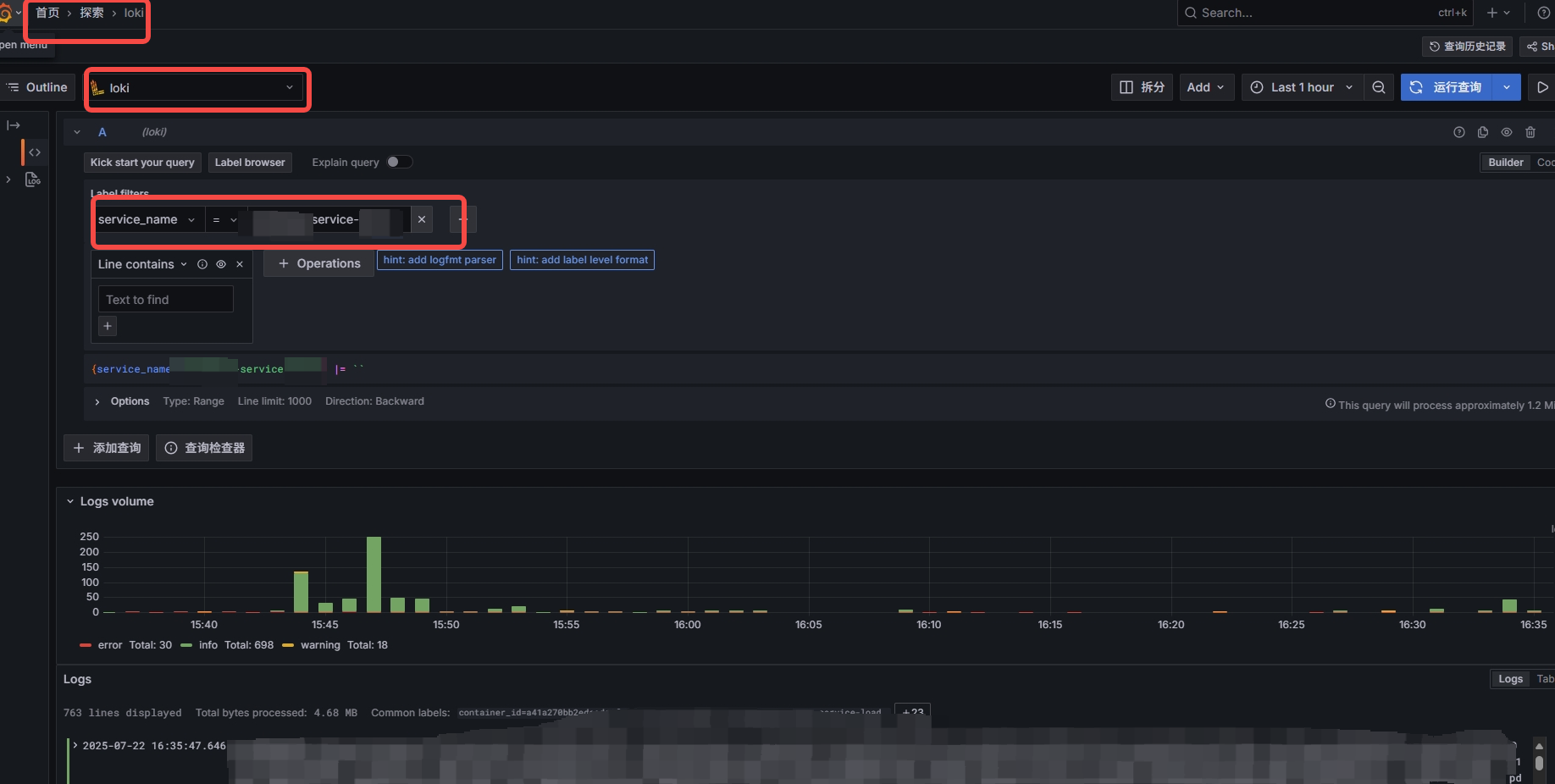

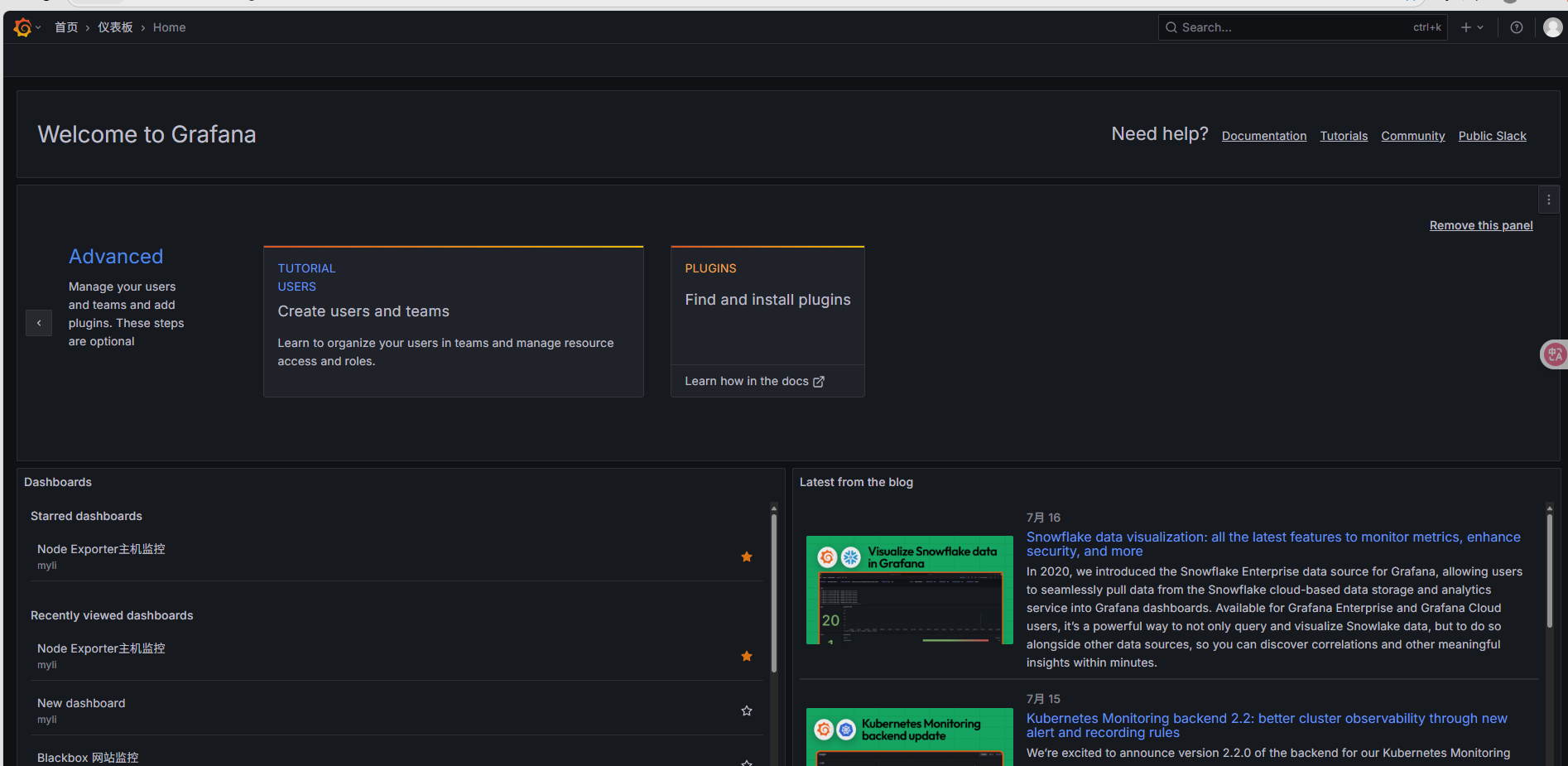

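Add data sources in Grafana (Loki, Tempo, Jaeger)

The data sources have already been added here, so the steps for adding them are not described in detail.

Once the data sources are added, you can view the related endpoints and trace call chains.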
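The data sources can also be provisioned from a file instead of being added by hand. A sketch of a Grafana provisioning file that links the signals: Tempo to Loki (tracesToLogsV2), Tempo to a Prometheus service graph (fed by Tempo's metrics generator), and Loki to Tempo through a derived field on trace_id, which Loki keeps as structured metadata for OTLP-ingested logs. The Tempo URL reuses the tempo Service from this article; the Loki gateway URL, the uid values and the Prometheus uid are assumptions, and the label matcher type for derived fields requires a reasonably recent Grafana.

```yaml
# Grafana data source provisioning file, e.g. mounted under /etc/grafana/provisioning/datasources/
apiVersion: 1
datasources:
- name: Tempo
  type: tempo
  uid: tempo
  access: proxy
  url: http://tempo.opentelemetry.svc:3200      # Tempo HTTP API (port 3200 of the tempo Service)
  jsonData:
    tracesToLogsV2:
      datasourceUid: loki                       # span -> logs in the Loki data source below
      filterByTraceID: true
    serviceMap:
      datasourceUid: prometheus                 # assumption: uid of your existing Prometheus data source
    nodeGraph:
      enabled: true
- name: Loki
  type: loki
  uid: loki
  access: proxy
  url: http://loki-gateway.loki.svc             # assumption: adjust to your Loki gateway Service
  jsonData:
    derivedFields:
    - name: TraceID
      matcherType: label                        # match a label/structured-metadata field, not the log line
      matcherRegex: trace_id                    # field name used for OTLP-ingested logs
      datasourceUid: tempo                      # log entry -> trace in the Tempo data source above
      url: '$${__value.raw}'                    # $$ escapes $ in provisioning files
```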

View the logs collected via OTLP and shipped to Loki
